aimed to be the representative of one of the six accused in the December 16 gangrape case who has sought shifting of t...

angrape-case-two-lawyers-claim-to-be-engaged-by-accused/article4332680.ece">

distribution companies – the Anil Ambani-owned BRPL and BYPL and the Tatas-owned Tata Powe...

discoms-demand-yet-another-hike-in-charges/article4331482.ece">

discoms-demand-yet-another-hike-in-charges/article4331482.ece#comments">

I need to get the <a href=...> values from every div that has the class article-additional-info.

I am new to BeautifulSoup.

So I need these URLs:

"http://www.thehindu.com/news/national/gangrape-case-two-lawyers-claim-to-be-engaged-by-accused/article4332680.ece"

"http://www.thehindu.com/news/cities/Delhi/power-discoms-demand-yet-another-hike-in-charges/article4331482.ece"

What is the best way to achieve this?

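Best answer

With your criteria, this returns three URLs rather than two. Did you want to filter out the third one?

The basic idea is to walk the HTML, keep only the elements that carry your class, and then iterate over every link inside each of those elements to pull out the actual href: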

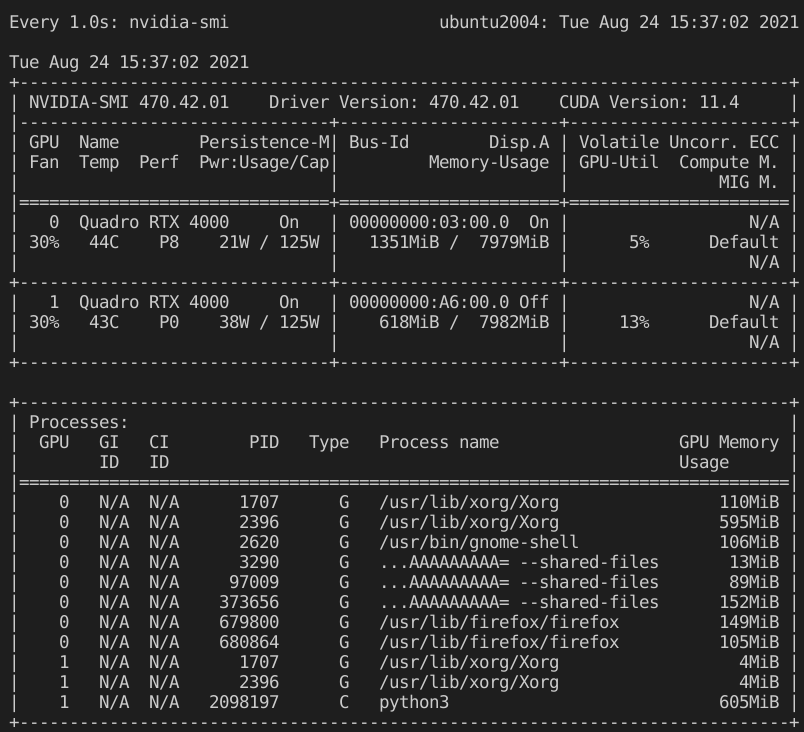

In [1]: from bs4 import BeautifulSoup

In [2]: html = "..."  # your HTML

In [3]: soup = BeautifulSoup(html, 'html.parser')  # name the parser explicitly

In [4]: for item in soup.find_all(attrs={'class': 'article-additional-info'}):
   ...:     for link in item.find_all('a'):
   ...:         print(link.get('href'))
   ...:

http://www.thehindu.com/news/national/gangrape-case-two-lawyers-claim-to-be-engaged-by-accused/article4332680.ece

http://www.thehindu.com/news/cities/Delhi/power-discoms-demand-yet-another-hike-in-charges/article4331482.ece

http://www.thehindu.com/news/cities/Delhi/power-discoms-demand-yet-another-hike-in-charges/article4331482.ece#comments

This restricts the search to elements that carry the article-additional-info class, finds every anchor (a) tag inside them, and reads each tag's href attribute.
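If the third URL (the #comments variant) is not wanted, one possible way to drop it is to strip the fragment from each href and skip duplicates. The sketch below is only an illustration: SAMPLE_HTML is a made-up snippet shaped like the question's markup (the real page structure may differ), article_urls is a hypothetical helper name, and a CSS selector is used in place of the nested find_all loops.

```python
from urllib.parse import urldefrag

from bs4 import BeautifulSoup

# Hypothetical markup for illustration; the real page may be structured differently.
SAMPLE_HTML = """
<div class="article-additional-info">
  <a href="http://www.thehindu.com/news/national/gangrape-case-two-lawyers-claim-to-be-engaged-by-accused/article4332680.ece">Read more</a>
</div>
<div class="article-additional-info">
  <a href="http://www.thehindu.com/news/cities/Delhi/power-discoms-demand-yet-another-hike-in-charges/article4331482.ece">Read more</a>
  <a href="http://www.thehindu.com/news/cities/Delhi/power-discoms-demand-yet-another-hike-in-charges/article4331482.ece#comments">Comments</a>
</div>
<div class="unrelated"><a href="http://www.thehindu.com/">Home</a></div>
"""


def article_urls(html):
    """Return unique hrefs found inside div.article-additional-info, without fragments."""
    soup = BeautifulSoup(html, "html.parser")
    urls = []
    # The selector matches only <a> tags that have an href and sit inside the target divs.
    for link in soup.select("div.article-additional-info a[href]"):
        url, _fragment = urldefrag(link["href"])  # drop a trailing "#comments"
        if url not in urls:  # keep first occurrence, preserve document order
            urls.append(url)
    return urls


for url in article_urls(SAMPLE_HTML):
    print(url)
```

Stripping the fragment rather than matching the literal string "#comments" also covers other in-page anchors, which may or may not be what you want; if only comment links should go, a plain endswith('#comments') check would be the narrower choice.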