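On April 16, 2019, I started learning Python from scratch, and on day two I set myself a task: a small crawler project for website articles, because hands-on practice is the fastest way to learn to code. So starting today I'm writing a hands-on Python beginner tutorial series, and I'd recommend that anyone learning Python write and practice as much as possible.

Goals

1. Learn Python web scraping
2. Scrape the article list of a news site
3. Scrape images
4. Save the scraped data to a local folder or a database
5. Learn to use pip in PyCharm to install the extension packages Python needs

Part 1: A first look at how easily Python can fetch a web page

1. Preparation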

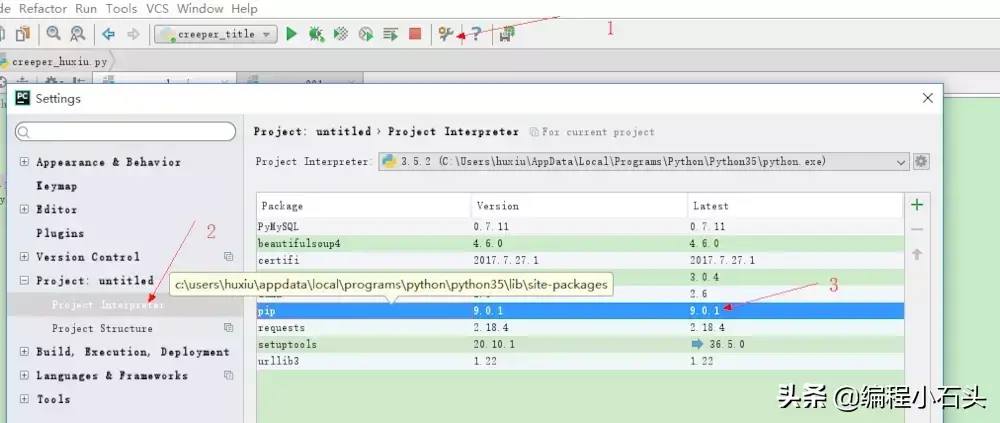

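The project uses BeautifulSoup4 and chardet, which are third-party packages; if you don't have them, install them with pip. I installed them through PyCharm, so here is a quick walkthrough of installing chardet and BeautifulSoup4 that way.

In PyCharm's settings, follow the steps shown in the screenshot below.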

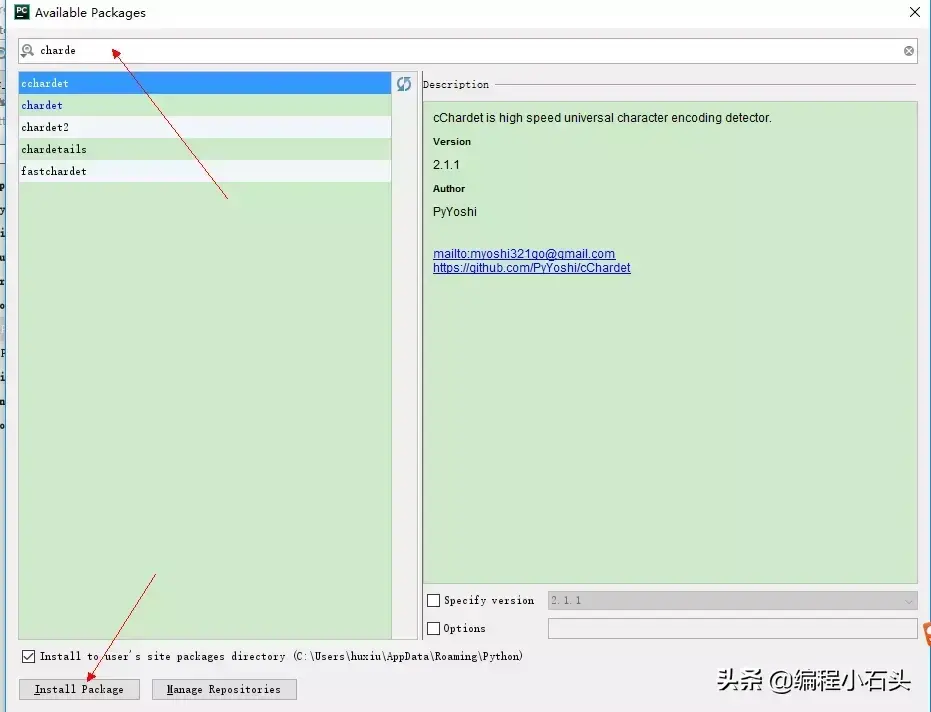

As shown below, search for the package you need. Here we need chardet, so just search for it and click Install Package. Do the same for BeautifulSoup4.

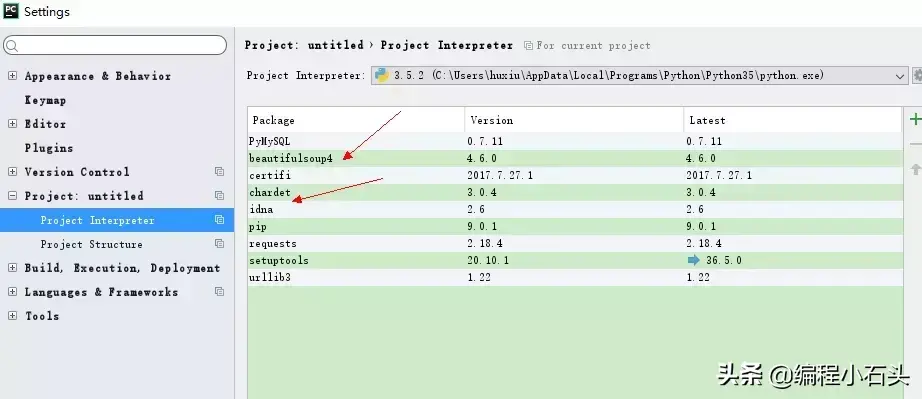

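Once installed, the package shows up in the list of installed packages. At that point the scraping libraries are installed successfully.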
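If you'd rather confirm the install from code than from PyCharm's package list, a quick check like the one below should do. This is a minimal sketch: `missing_packages` is a hypothetical helper name, not part of either library. Note that BeautifulSoup4 is imported under the module name `bs4`.

```python
import importlib.util


def missing_packages(module_names):
    """Return the module names that cannot be found in the current environment."""
    return [name for name in module_names
            if importlib.util.find_spec(name) is None]


# BeautifulSoup4 is imported as "bs4"; chardet keeps its own name.
missing = missing_packages(["bs4", "chardet"])
if missing:
    print("Not installed yet:", ", ".join(missing))
else:
    print("bs4 and chardet are both installed")
```

From a terminal, the equivalent of the PyCharm steps is `pip install beautifulsoup4 chardet`.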

Part 2: Starting simple, let's fetch a web page first

We'll use the Jianshu home page as the example: http://www.jianshu.com/

    # A simple web crawler
    from urllib import request

    import chardet

    response = request.urlopen("http://www.jianshu.com/")
    html = response.read()  # raw bytes
    charset = chardet.detect(html)  # e.g. {'language': '', 'encoding': 'utf-8', 'confidence': 0.99}
    html = html.decode(str(charset["encoding"]))  # decode the bytes to text
    print(html)

The fetched HTML document is fairly long, so here is just an excerpt:

    <!DOCTYPE html>
    <!--[if IE 6]><html class="ie lt-ie8"><![endif]-->
    <!--[if IE 7]><html class="ie lt-ie8"><![endif]-->
    <!--[if IE 8]><html class="ie ie8"><![endif]-->
    <!--[if IE 9]><html class="ie ie9"><![endif]-->
    <!--[if !IE]><!--> <html> <!--<![endif]-->
    <head>
    <meta charset="utf-8">
    <meta http-equiv="X-UA-Compatible" content="IE=Edge">
    <meta name="viewport" content="width=device-width, initial-scale=1.0,user-scalable=no">
    <!-- Start of Baidu Transcode -->
    <meta http-equiv="Cache-Control" content="no-siteapp" />
    <meta http-equiv="Cache-Control" content="no-transform" />
    <meta name="applicable-device" content="pc,mobile">
    <meta name="MobileOptimized" content="width"/>
    <meta name="HandheldFriendly" content="true"/>
    <meta name="mobile-agent" content="format=html5;url=http://localhost/">
    <!-- End of Baidu Transcode -->
    <meta name="description" content="简书是一个优质的创作社区,在这里,你可以任性地创作,一篇短文、一张照片、一首诗、一幅画……我们相信,每个人都是生活中的艺术家,有着无穷的创造力。">
    <meta name="keywords" content="简书,简书官网,图文编辑软件,简书下载,图文创作,创作软件,原创社区,小说,散文,写作,阅读">
    .......... (a lot more omitted)

That's a basic introduction to scraping with Python 3. Pretty simple, right? I'd suggest typing it out a few times yourself.
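One fragile spot in the snippet above: `chardet.detect` can report `None` as the encoding when it cannot make a guess, and `str(None)` is not a valid codec name. Some sites also turn away requests that lack a browser-like `User-Agent`. The sketch below shows one way to handle both. `build_request` and `decode_html` are hypothetical helper names, the User-Agent string is only a placeholder, and falling back to UTF-8 is my assumption rather than something the original code does.

```python
from urllib import request


def build_request(url, user_agent="Mozilla/5.0 (tutorial-example)"):
    """Create a Request carrying a User-Agent header (nothing is sent yet)."""
    return request.Request(url, headers={"User-Agent": user_agent})


def decode_html(raw, detected_encoding=None, fallback="utf-8"):
    """Decode bytes with the detected encoding, falling back if it is missing or wrong."""
    encoding = detected_encoding or fallback
    try:
        return raw.decode(encoding)
    except (LookupError, UnicodeDecodeError):
        # Unknown codec name, or bytes that do not fit it:
        # decode with the fallback and replace undecodable bytes instead of crashing.
        return raw.decode(fallback, errors="replace")
```

With these in place, the fetch step could become `raw = request.urlopen(build_request(url)).read()` followed by `html = decode_html(raw, chardet.detect(raw)["encoding"])`.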

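Part 3: Scraping images

Goal

Scrape the images from a Baidu Tieba thread and save them locally (the thread is all pictures of girls). Enough talk, straight to the code. The comments in the code are detailed, so reading them carefully should be enough to follow along.

    import re
    import urllib.request


    # Fetch the raw HTML of a page
    def getHtml(url):
        page = urllib.request.urlopen(url)
        html = page.read()
        return html


    html = getHtml("http://tieba.baidu.com/p/3205263090")
    html = html.decode('UTF-8')


    def getImg(html):
        # Use a regular expression to match the image URLs in the page
        reg = r'src="([.*\S]*\.jpg)" pic_ext="jpeg"'
        imgre = re.compile(reg)
        imglist = re.findall(imgre, html)
        return imglist


    imgList = getImg(html)
    imgCount = 0
    # Download every image found into the local pic folder;
    # create the pic folder before running this
    for imgPath in imgList:
        f = open("../pic/" + str(imgCount) + ".jpg", 'wb')
        f.write((urllib.request.urlopen(imgPath)).read())
        f.close()
        imgCount += 1
    print("All images downloaded")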
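To see the image-matching step in isolation, here is a small offline sketch. The pattern is a slightly stricter variant of the one in the script (it refuses quotes and whitespace inside the URL), and the sample markup is made up for illustration; the real Tieba page may look different. `extract_jpg_urls` is a hypothetical helper name.

```python
import re

# Capture the src value (no quotes or whitespace allowed inside it) when it
# ends in .jpg and the tag also carries pic_ext="jpeg".
IMG_PATTERN = re.compile(r'src="([^"\s]+\.jpg)" pic_ext="jpeg"')


def extract_jpg_urls(html):
    """Return every matching .jpg URL, in page order."""
    return IMG_PATTERN.findall(html)


# Made-up markup, only for trying out the pattern.
SAMPLE_HTML = (
    '<img class="BDE_Image" src="http://example.com/a.jpg" pic_ext="jpeg">'
    '<img src="http://example.com/b.png" pic_ext="png">'
    '<img class="BDE_Image" src="http://example.com/c.jpg" pic_ext="jpeg">'
)

print(extract_jpg_urls(SAMPLE_HTML))
```

Running it should list the two `.jpg` URLs and skip the `.png` one, which makes it easy to tweak the pattern before pointing it at a live page.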

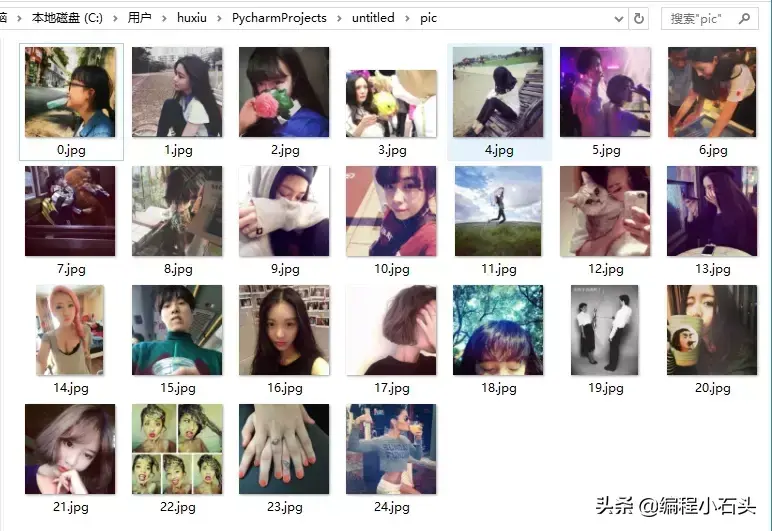

I couldn't wait to see what had been downloaded.

Just like that, 24 pictures of girls were scraped. Pretty simple, right?
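The download loop expects the pic folder to exist already and closes each file by hand. A small helper along these lines could take care of both. It's a sketch, with `save_image` as a hypothetical name; it creates the folder on demand and uses a `with` block so the file is closed even if the write fails.

```python
from pathlib import Path


def save_image(data, folder, index):
    """Write image bytes to <folder>/<index>.jpg, creating the folder if needed."""
    folder = Path(folder)
    folder.mkdir(parents=True, exist_ok=True)
    target = folder / f"{index}.jpg"
    with open(target, "wb") as f:  # closed automatically, even on error
        f.write(data)
    return target
```

Inside the loop, the open/write/close lines would then be replaced by something like `save_image(urllib.request.urlopen(imgPath).read(), "../pic", imgCount)`.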

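Part 4: Scraping a news site's article list with Python 3

Here we only scrape each article's title, URL, and image link. For now the scraped data is only displayed; once I've learned how to work with databases in Python, I'll save it to a database. Things get a little more involved here, so I'll go through it step by step.

1. First we need to fetch the HTML page; the earlier section covers how to fetch a page.
2. Analyze the HTML tags we want to scrape.

Analyzing the page (see the figure above), the information we want is in the a and img tags inside a div, so what we need to work out is how to get at it.

This is where the BeautifulSoup4 library we installed comes in. The key code is: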

    # Use html.parser as the parser
    soup = BeautifulSoup(html, 'html.parser')
    # Select every node with class=hot-article-img (the div that wraps each a tag)
    allList = soup.select('.hot-article-img')

The allList returned by the code above is the news list we're after. Here is what was scraped:

[<div class="hot-article-img">

<a href="/article/211390.html" target="_blank">

</a>

</div>, <div class="hot-article-img">

<a href="/article/214982.html" target="_blank" title="TFBOYS成员各自飞,商业价值天花板已现?">

</a>

</div>, <div class="hot-article-img">

<a href="/article/213703.html" target="_blank" title="买手店江湖">

</a>

</div>, <div class="hot-article-img">

<a href="/article/214679.html" target="_blank" title="iPhone X正式告诉我们,手机和相机开始分道扬镳">

</a>

</div>, <div class="hot-article-img">

<a href="/article/214962.html" target="_blank" title="信用已被透支殆尽,乐视汽车或成贾跃亭弃子">

</a>

</div>, <div class="hot-article-img">

<a href="/article/214867.html" target="_blank" title="别小看“搞笑诺贝尔奖”,要向好奇心致敬">

</a>

</div>, <div class="hot-article-img">

<a href="/article/214954.html" target="_blank" title="10 年前改变世界的,可不止有 iPhone | 发车">

</a>

</div>, <div class="hot-article-img">

<a href="/article/214908.html" target="_blank" title="感谢微博替我做主">

</a>

</div>, <div class="hot-article-img">

<a href="/article/215001.html" target="_blank" title="苹果确认取消打赏抽成,但还有多少内容让你觉得值得掏腰包?">

</a>

</div>, <div class="hot-article-img">

<a href="/article/214969.html" target="_blank" title="中国音乐的“全面付费”时代即将到来?">

</a>

</div>, <div class="hot-article-img">

<a href="/article/214964.html" target="_blank" title="百丽退市启示录:“一代鞋王”如何与新生代消费者渐行渐远">

</a>

</div>]
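For readers curious what selecting `.hot-article-img` and then reading the a and img tags boils down to, here is a rough standard-library stand-in built on `html.parser.HTMLParser`. It is not BeautifulSoup and not what this article uses; `HotArticleParser` and the sample markup are made up for illustration, and it only handles the simple nesting shown here.

```python
from html.parser import HTMLParser


class HotArticleParser(HTMLParser):
    """Collect the first <a> (and first <img>) inside each div.hot-article-img."""

    def __init__(self):
        super().__init__()
        self.items = []       # one dict per matching <div>
        self._depth = 0       # > 0 while inside a matching <div>
        self._current = None  # record being filled in

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "div":
            classes = (attrs.get("class") or "").split()
            if self._depth:
                self._depth += 1  # nested <div> inside a match
            elif "hot-article-img" in classes:
                self._depth = 1
                self._current = {"href": "", "title": "", "img": ""}
        elif self._depth and tag == "a" and not self._current["href"]:
            self._current["href"] = attrs.get("href") or ""
            self._current["title"] = attrs.get("title") or ""
        elif self._depth and tag == "img" and not self._current["img"]:
            self._current["img"] = attrs.get("src") or ""

    def handle_endtag(self, tag):
        if tag == "div" and self._depth:
            self._depth -= 1
            if self._depth == 0:  # the matching <div> just closed
                self.items.append(self._current)
                self._current = None


# Made-up markup in the same shape as the scraped list.
SAMPLE = """
<div class="hot-article-img">
  <a href="/article/1.html" target="_blank" title="First">
    <img src="https://example.com/1.jpg">
  </a>
</div>
<div class="other"><a href="/skip.html">skip</a></div>
<div class="hot-article-img">
  <a href="/article/2.html" target="_blank"></a>
</div>
"""

parser = HotArticleParser()
parser.feed(SAMPLE)
for item in parser.items:
    print(item)
```

The second record comes back with an empty title and image, which is the same "missing value" situation that real pages produce.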

The data has been scraped, but it's messy and a lot of it isn't what we want, so next we loop over it to pull out the useful information.

    for news in allList:
        aaa = news.select('a')
        # Only handle results that contain at least one a tag
        if len(aaa) > 0:
            try:  # if this raises, treat the value as empty
                href = url + aaa[0]['href']
            except Exception:
                href = ''
            try:
                imgurl = aaa[0].select('img')[0]['src']
            except Exception:
                imgurl = ""
            # Article title
            try:
                title = aaa[0]['title']
            except Exception:
                title = "标题为空"
            print("标题", title, "\nurl:", href, "\n图片地址:", imgurl)
            print("==============================================================================================")

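The exception handling is there mainly because some articles may have no title, no URL, or no image. Without it, a single such article could abort the whole crawl.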
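The same "missing value" handling can also be written without try/except. The sketch below assumes the tag attributes are available as plain dicts (`a_attrs` and `img_attrs` are hypothetical stand-ins; BeautifulSoup tags offer a similar `.get()` method), and it swaps string concatenation for `urllib.parse.urljoin`, so an href that is already absolute is left alone. `normalize_item` is an illustrative name.

```python
from urllib.parse import urljoin

BASE_URL = "https://www.huxiu.com"


def normalize_item(a_attrs, img_attrs=None, base_url=BASE_URL):
    """Build one record from attribute dicts, using defaults for anything missing."""
    img_attrs = img_attrs or {}
    href = a_attrs.get("href")
    return {
        "title": a_attrs.get("title") or "标题为空",
        "url": urljoin(base_url, href) if href else "",
        "img": img_attrs.get("src") or "",
    }


# An a tag with no title and no image, like the first entry in the list.
print(normalize_item({"href": "/article/211390.html", "target": "_blank"}))
# An href that is already a full URL is not prefixed a second time.
print(normalize_item({"href": "https://example.com/x.html", "title": "Absolute link"}))
```

Whether this reads better than try/except is a matter of taste; the try/except version has the advantage of also covering a missing img tag in one go.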

The useful information after filtering:

url: https://www.huxiu.com/article/211390.html

图片地址: https://img.huxiucdn.com/article/cover/201708/22/173535862821.jpg?imageView2/1/w/280/h/210/|imagemogr2/strip/interlace/1/quality/85/format/jpg

==============================================================================================

标题 TFBOYS成员各自飞,商业价值天花板已现?

url: https://www.huxiu.com/article/214982.html

图片地址: https://img.huxiucdn.com/article/cover/201709/17/094856378420.jpg?imageView2/1/w/280/h/210/|imagemogr2/strip/interlace/1/quality/85/format/jpg

==============================================================================================

标题 买手店江湖

url: https://www.huxiu.com/article/213703.html

图片地址: https://img.huxiucdn.com/article/cover/201709/17/122655034450.jpg?imageView2/1/w/280/h/210/|imagemogr2/strip/interlace/1/quality/85/format/jpg

==============================================================================================

标题 iPhone X正式告诉我们,手机和相机开始分道扬镳

url: https://www.huxiu.com/article/214679.html

图片地址: https://img.huxiucdn.com/article/cover/201709/14/182151300292.jpg?imageView2/1/w/280/h/210/|imagemogr2/strip/interlace/1/quality/85/format/jpg

==============================================================================================

标题 信用已被透支殆尽,乐视汽车或成贾跃亭弃子

url: https://www.huxiu.com/article/214962.html

图片地址: https://img.huxiucdn.com/article/cover/201709/16/210518696352.jpg?imageView2/1/w/280/h/210/|imagemogr2/strip/interlace/1/quality/85/format/jpg

==============================================================================================

标题 别小看“搞笑诺贝尔奖”,要向好奇心致敬

url: https://www.huxiu.com/article/214867.html

图片地址: https://img.huxiucdn.com/article/cover/201709/15/180620783020.jpg?imageView2/1/w/280/h/210/|imagemogr2/strip/interlace/1/quality/85/format/jpg

==============================================================================================

标题 10 年前改变世界的,可不止有 iPhone | 发车

url: https://www.huxiu.com/article/214954.html

图片地址: https://img.huxiucdn.com/article/cover/201709/16/162049096015.jpg?imageView2/1/w/280/h/210/|imagemogr2/strip/interlace/1/quality/85/format/jpg

==============================================================================================

标题 感谢微博替我做主

url: https://www.huxiu.com/article/214908.html

图片地址: https://img.huxiucdn.com/article/cover/201709/16/010410913192.jpg?imageView2/1/w/280/h/210/|imagemogr2/strip/interlace/1/quality/85/format/jpg

==============================================================================================

标题 苹果确认取消打赏抽成,但还有多少内容让你觉得值得掏腰包?

url: https://www.huxiu.com/article/215001.html

图片地址: https://img.huxiucdn.com/article/cover/201709/17/154147105217.jpg?imageView2/1/w/280/h/210/|imagemogr2/strip/interlace/1/quality/85/format/jpg

==============================================================================================

标题 中国音乐的“全面付费”时代即将到来?

url: https://www.huxiu.com/article/214969.html

图片地址: https://img.huxiucdn.com/article/cover/201709/17/101218317953.jpg?imageView2/1/w/280/h/210/|imagemogr2/strip/interlace/1/quality/85/format/jpg

==============================================================================================

标题 百丽退市启示录:“一代鞋王”如何与新生代消费者渐行渐远

url: https://www.huxiu.com/article/214964.html

图片地址: https://img.huxiucdn.com/article/cover/201709/16/213400162818.jpg?imageView2/1/w/280/h/210/|imagemogr2/strip/interlace/1/quality/85/format/jpg

==============================================================================================

With that, scraping article information from the news site is done. Here is the complete code:

    from bs4 import BeautifulSoup
    from urllib import request

    import chardet

    url = "https://www.huxiu.com"
    response = request.urlopen(url)
    html = response.read()
    charset = chardet.detect(html)
    html = html.decode(str(charset["encoding"]))  # decode the fetched HTML with the detected encoding
    # Use html.parser as the parser
    soup = BeautifulSoup(html, 'html.parser')
    # Select every node with class=hot-article-img (the div that wraps each a tag)
    allList = soup.select('.hot-article-img')
    # Loop over the list and pull out the useful information
    for news in allList:
        aaa = news.select('a')
        # Only handle results that contain at least one a tag
        if len(aaa) > 0:
            try:  # if this raises, treat the value as empty
                href = url + aaa[0]['href']
            except Exception:
                href = ''
            try:
                imgurl = aaa[0].select('img')[0]['src']
            except Exception:
                imgurl = ""
            # Article title
            try:
                title = aaa[0]['title']
            except Exception:
                title = "标题为空"
            print("标题", title, "\nurl:", href, "\n图片地址:", imgurl)
            print("==============================================================================================")

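Once we have the data, we still need to store it in a database. With the data in our own database, we can analyze and process it later, or use the scraped articles to back a news API for an app. That's all for later, though. Once I've taught myself database operations in Python, I'll write an article:

"Python 3 Hands-On for Beginners, Database Edition: Saving Scraped Data to a Database"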
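Until that article exists, here is a rough idea of what the storage step might look like with Python's built-in `sqlite3` module. Everything here is an assumption for illustration: the table name, the column names, and the `save_articles` helper are made up, and the follow-up article may well use a different database.

```python
import sqlite3


def save_articles(conn, articles):
    """Create the table if needed and insert one row per scraped article."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS articles ("
        "url TEXT PRIMARY KEY, title TEXT, img_url TEXT)"
    )
    # INSERT OR IGNORE skips rows whose url is already stored,
    # so running the crawler twice does not create duplicates.
    conn.executemany(
        "INSERT OR IGNORE INTO articles (url, title, img_url) VALUES (?, ?, ?)",
        [(a["url"], a["title"], a["img"]) for a in articles],
    )
    conn.commit()


conn = sqlite3.connect(":memory:")  # pass a file path instead to keep the data
save_articles(conn, [
    {"title": "买手店江湖",
     "url": "https://www.huxiu.com/article/213703.html",
     "img": ""},
])
print(conn.execute("SELECT title, url FROM articles").fetchall())
```

Using the article URL as the primary key is just one reasonable choice for spotting duplicates.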

编程小石头: born to share practical know-how! Rumor has it that every young, ambitious, good-looking internet professional follows 编程小石头.