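3. Client operations

Shell client

3.1 HBase data model concepts

| In a Hive or MySQL table, you locate a value by naming the database, the table, the row, and the column. HBase has no database concept: a value is located by its namespace, table, row key, column family, and column (qualifier). |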

namespace: doit

table: user_info

| Rowkey | Column Family1: id | Column Family1: Name | Column Family1: age | Column Family1: gender | Column Family2: phoneNum | Column Family2: address | Column Family2: job | Column Family2: code |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| rowkey_001 | 1 | 柳岩 | 18 | female | 88888888 | Beijing.... | actor | 123 |
| rowkey_002 | 2 | 唐嫣 | 38 | female | 66666666 | Shanghai.... | actor | 213 |
| rowkey_003 | 3 | 大郎 | 8 | male | 44444444 | Nanjing.... | sales | 312 |
| rowkey_004 | 4 | 金莲 | 33 | female | 99999999 | Tokyo.... | sales | 321 |
| ... | | | | | | | | |

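Namespace: HBase has no database concept; namespaces play that role, grouping tables so they can be managed by category.

Table: a table contains many rows.

Row Key: each row has a unique row key, plus multiple columns and their values. Within a table, all rows are sorted in ascending lexicographic (byte) order of the row key.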
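Because the ordering is lexicographic rather than numeric, unpadded numbers in a row key sort in a way that often surprises people. The sketch below is plain Java with no HBase dependency; `RowKeyOrderSketch`, `compareKeys`, and `sortKeys` are hypothetical names used only for illustration, and the comparison is an assumed approximation of byte-wise unsigned ordering.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

final class RowKeyOrderSketch {

    // Compare two keys byte by byte, treating each byte as unsigned.
    // If one key is a prefix of the other, the shorter key sorts first.
    static int compareKeys(byte[] a, byte[] b) {
        int n = Math.min(a.length, b.length);
        for (int i = 0; i < n; i++) {
            int diff = (a[i] & 0xff) - (b[i] & 0xff);
            if (diff != 0) {
                return diff;
            }
        }
        return a.length - b.length;
    }

    // Return a copy of the keys sorted in byte-wise lexicographic order.
    static List<String> sortKeys(List<String> keys) {
        List<String> sorted = new ArrayList<>(keys);
        sorted.sort((x, y) -> compareKeys(
                x.getBytes(StandardCharsets.UTF_8),
                y.getBytes(StandardCharsets.UTF_8)));
        return sorted;
    }

    public static void main(String[] args) {
        // "rowkey_10" sorts before "rowkey_2" because '1' < '2' at the first differing byte.
        System.out.println(sortKeys(Arrays.asList(
                "rowkey_2", "rowkey_10", "rowkey_002", "rowkey_010")));
    }
}
```

This is why the examples in this section zero-pad the numeric part of the key (rowkey_001, rowkey_010): with fixed-width padding, lexicographic order and numeric order agree.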

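Column Family: declared when the table is created and not meant to be added or removed casually. A column family contains multiple columns; it is a named group of columns with related attributes.

Column Qualifier: a column inside a column family. Every column belongs to exactly one column family.

Cell: a structure made of (rowkey, column family, qualifier, type, timestamp, value), where type is the operation type (Put/Delete) and timestamp is the version of the cell. Cells are stored as key-value pairs: rowkey, column family, qualifier, type, and timestamp form the key, and the value field is the value.

Timestamp: when a cell is written, HBase assigns it a timestamp by default as its version; the client can also supply its own timestamp on write. HBase is multi-versioned: the same rowkey and column can hold several values, each identified by its timestamp as the version number, and a larger version means newer data.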
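One way to picture the definitions above is as a nested sorted map: row key, then column, then timestamp, then value. The following is only a conceptual sketch in plain Java, not how HBase stores data internally; `CellModelSketch` and its methods are hypothetical names, and columns are written as "family:qualifier" strings for brevity.

```java
import java.util.Comparator;
import java.util.NavigableMap;
import java.util.TreeMap;

final class CellModelSketch {

    // row key -> "family:qualifier" -> timestamp (newest first) -> value
    private final NavigableMap<String, NavigableMap<String, NavigableMap<Long, String>>> rows =
            new TreeMap<>();

    // Write one version of one cell.
    void put(String row, String column, long timestamp, String value) {
        rows.computeIfAbsent(row, r -> new TreeMap<String, NavigableMap<Long, String>>())
            .computeIfAbsent(column, c -> new TreeMap<Long, String>(Comparator.<Long>reverseOrder()))
            .put(timestamp, value);
    }

    // Read the newest version of a cell, or null if the cell does not exist.
    String get(String row, String column) {
        NavigableMap<String, NavigableMap<Long, String>> columns = rows.get(row);
        if (columns == null) {
            return null;
        }
        NavigableMap<Long, String> versions = columns.get(column);
        return (versions == null || versions.isEmpty()) ? null : versions.firstEntry().getValue();
    }

    public static void main(String[] args) {
        CellModelSketch table = new CellModelSketch();
        table.put("rowkey_001", "f1:age", 1L, "18");
        table.put("rowkey_001", "f1:age", 2L, "19");   // same cell, newer version
        System.out.println(table.get("rowkey_001", "f1:age"));
    }
}
```

The second put does not overwrite the first; both versions exist, and a read without an explicit timestamp returns the one with the largest timestamp.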

3.2 Entering the shell client

| Shell

If the environment variables are configured, run hbase shell from any directory; otherwise run ./hbase shell from the bin directory.

[root@linux01 conf]# hbase shell

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/app/hadoop-3.1.1/share/hadoop/common/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/app/hbase-2.2.5/lib/client-facing-thirdparty/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

HBase Shell

Use "help" to get list of supported commands.

Use "exit" to quit this interactive shell.

For Reference,please visit: http://hbase.apache.org/2.0/book.html#shell

Version 2.2.5,rf76a601273e834267b55c0cda12474590283fd4c,2020年 05月 21日 星期四 18:34:40 CST

Took 0.0026 seconds

hbase(main):001:0> -- this prompt means the HBase shell client started successfully |

3.3 Command reference

3.3.1 General commands

status: shows the state of the HBase cluster, for example the number of servers.

| Shell

hbase(main):001:0> status

1 active master,0 backup masters,3 servers,0 dead,0.6667 average load

Took 0.3609 seconds |

version: shows the HBase version in use.

| Shell

hbase(main):002:0> version

2.2.5,2020年 05月 21日 星期四 18:34:40 CST

Took 0.0004 seconds |

table_help: shows help for table-reference commands.

| Shell

Prints reference help for commands that operate on a table reference.

For example:

To read the data out,you can scan the table:

hbase> t.scan

which will read all the rows in table 't'. |

whoami: shows information about the current user.

| Shell

hbase(main):004:0> whoami

root (auth:SIMPLE)

groups: root

Took 0.0098 seconds |

3.3.2 Namespace commands

list_namespace: lists all namespaces

| Shell

hbase(main):005:0> list_namespace

NAMESPACE

default

hbase

2 row(s)

Took 0.0403 seconds |

create_namespace: creates a namespace

| Shell

hbase(main):002:0> create_namespace doit

NameError: undefined local variable or method `doit' for main:Object

hbase(main):003:0> create_namespace 'doit'

Took 0.2648 seconds

Note: the name must be quoted, otherwise the shell reports an error. |

describe_namespace: describes a namespace

| Shell

hbase(main):004:0> describe_namespace 'doit'

DESCRIPTION

{NAME => 'doit'}

Quota is disabled

Took 0.0710 seconds |

drop_namespace: drops a namespace

| Shell

Note: only an empty namespace can be dropped; it cannot be dropped while it still contains tables.

hbase(main):005:0> drop_namespace 'doit'

Took 0.2461 seconds

-- if the namespace is not empty

hbase(main):035:0> drop_namespace 'doit'

ERROR: org.apache.hadoop.hbase.constraint.ConstraintException: Only empty namespaces can be removed. Namespace doit has 1 tables

at org.apache.hadoop.hbase.master.procedure.DeleteNamespaceProcedure.prepareDelete(DeleteNamespaceProcedure.java:217)

at org.apache.hadoop.hbase.master.procedure.DeleteNamespaceProcedure.executeFromState(DeleteNamespaceProcedure.java:78)

at org.apache.hadoop.hbase.master.procedure.DeleteNamespaceProcedure.executeFromState(DeleteNamespaceProcedure.java:45)

at org.apache.hadoop.hbase.procedure2.StateMachineProcedure.execute(StateMachineProcedure.java:194)

at org.apache.hadoop.hbase.procedure2.Procedure.doExecute(Procedure.java:962)

at org.apache.hadoop.hbase.procedure2.ProcedureExecutor.execProcedure(ProcedureExecutor.java:1662)

at org.apache.hadoop.hbase.procedure2.ProcedureExecutor.executeProcedure(ProcedureExecutor.java:1409)

at org.apache.hadoop.hbase.procedure2.ProcedureExecutor.access$1100(ProcedureExecutor.java:78)

at org.apache.hadoop.hbase.procedure2.ProcedureExecutor$WorkerThread.run(ProcedureExecutor.java:1979)

For usage try 'help "drop_namespace"'

Took 0.1448 seconds |

alter_namespace: changes properties of a namespace

| Shell

hbase(main):038:0> alter_namespace 'doit',{METHOD => 'set','PROPERTY_NAME' => 'PROPERTY_VALUE'}

Took 0.2491 seconds |

list_namespace_tables: lists all tables in a namespace

| Shell

hbase(main):037:0> list_namespace_tables 'doit'

TABLE

user

1 row(s)

Took 0.0372 seconds

=> ["user"] |

3.3.3 DDL commands

list: lists all user tables; tables outside the default namespace are shown as namespace:table

| Shell

hbase(main):001:0> list

TABLE

doit:user

1 row(s)

Took 0.3187 seconds

=> ["doit:user"] |

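create: creates a table

| Shell

create 'xx:t1',{NAME=>'f1',VERSIONS=>5}

Creates table t1 in namespace xx.

NAME => 'f1' names the column family.

VERSIONS is the number of versions to keep.

Multiple column families f1, f2, f3:

create 't2',{NAME=>'f1'},{NAME=>'f2'},{NAME=>'f3'}

# Note: the comma after 'doit:student' is missing in the command below, so the shell (JRuby) concatenates the two adjacent string literals and creates a table named doit:studentf1 with column families f2 and f3. The intended command is create 'doit:student','f1','f2','f3'.

hbase(main):003:0> create 'doit:student' 'f1','f2','f3'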

Created table doit:studentf1

Took 1.2999 seconds

=> Hbase::Table - doit:studentf1

# Pre-splitting regions when creating the table

hbase(main):106:0> create 'doit:test','f1',SPLITS => ['rowkey_010','rowkey_020','rowkey_030','rowkey_040']

Created table doit:test

Took 1.3133 seconds

=> Hbase::Table - doit:test |
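To see what the four split keys above imply: they divide the table into five regions, each covering a half-open row key range [start, end). The sketch below is a plain Java illustration of that lookup, not HBase code; `PreSplitSketch` and `regionStartKey` are hypothetical names, and comparing keys as Java strings is an assumption that matches HBase's byte order only for ASCII keys.

```java
import java.util.Arrays;
import java.util.List;
import java.util.TreeSet;

final class PreSplitSketch {

    // Return the start key of the region that would contain rowKey.
    // The empty string stands for the first region, which has no lower bound.
    static String regionStartKey(List<String> splitKeys, String rowKey) {
        TreeSet<String> starts = new TreeSet<>(splitKeys);
        String start = starts.floor(rowKey);   // greatest split key <= rowKey
        return start == null ? "" : start;
    }

    // N split keys produce N + 1 regions.
    static int regionCount(List<String> splitKeys) {
        return splitKeys.size() + 1;
    }

    public static void main(String[] args) {
        List<String> splits = Arrays.asList("rowkey_010", "rowkey_020", "rowkey_030", "rowkey_040");
        System.out.println(regionCount(splits));
        System.out.println(regionStartKey(splits, "rowkey_015"));
        System.out.println(regionStartKey(splits, "rowkey_005").isEmpty());
    }
}
```

A split key is the inclusive start of the region to its right, so rowkey_040 itself belongs to the last region, and any key below rowkey_010 falls into the first one.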

drop: drops a table

| Shell

hbase(main):006:0> drop 'doit:studentf1'

ERROR: Table doit:studentf1 is enabled. Disable it first.

For usage try 'help "drop"'

Took 0.0242 seconds

Note: a table must be disabled before it can be dropped.

hbase(main):007:0> disable 'doit:studentf1'

Took 0.7809 seconds

hbase(main):008:0> drop 'doit:studentf1'

Took 0.2365 seconds

hbase(main):009:0> |

drop_all: drops all tables matching the given regex

| Shell

hbase(main):023:0> disable_all 'doit:student.*'

doit:student1

doit:student2

doit:student3

doit:studentf1

Disable the above 4 tables (y/n)?

y

4 tables successfully disabled

Took 4.3497 seconds

hbase(main):024:0> drop_all 'doit:student.*'

doit:student1

doit:student2

doit:student3

doit:studentf1

Drop the above 4 tables (y/n)?

y

4 tables successfully dropped

Took 2.4258 seconds |

disable: disables a table

| Shell

A table must be disabled before it can be dropped.

hbase(main):007:0> disable 'doit:studentf1'

Took 0.7809 seconds |

disable_all: disables all tables matching the given regex

| Shell

hbase(main):023:0> disable_all 'doit:student.*'

doit:student1

doit:student2

doit:student3

doit:studentf1

Disable the above 4 tables (y/n)?

y

4 tables successfully disabled

Took 4.3497 seconds |

enable: enables a table

| Shell

Re-enables a table that was previously disabled.

hbase(main):007:0> enable 'doit:student'

Took 0.7809 seconds |

enable_all: enables all tables matching the given regex

| Shell

hbase(main):032:0> enable_all 'doit:student.*'

doit:student

doit:student1

doit:student2

doit:student3

doit:student4

Enable the above 5 tables (y/n)?

y

5 tables successfully enabled

Took 5.0114 seconds |

is_enabled: checks whether a table is enabled

| Shell

hbase(main):034:0> is_enabled 'doit:student'

true

Took 0.0065 seconds

=> true |

is_disabled: checks whether a table is disabled

| Shell

hbase(main):035:0> is_disabled 'doit:student'

false

Took 0.0046 seconds

=> 1 |

describe: describes a table

| Shell

hbase(main):038:0> describe 'doit:student'

Table doit:student is ENABLED

doit:student

COLUMN FAMILIES DESCRIPTION

{NAME => 'f1',VERSIONS => '1',EVICT_BLOCKS_ON_CLOSE => 'false',NEW_VERSION_BEHAVIOR => 'false',KEEP_DELETED_CELLS => 'FALSE',CACHE_DATA_ON_WRITE => 'false',DATA_BLO

CK_ENCODING => 'NONE',TTL => 'FOREVER',MIN_VERSIONS => '0',REPLICATION_SCOPE => '0',BLOOMFILTER => 'ROW',CACHE_INDEX_ON_WRITE => 'false',IN_MEMORY => 'false',CACHE

_BLOOMS_ON_WRITE => 'false',PREFETCH_BLOCKS_ON_OPEN => 'false',COMPRESSION => 'NONE',BLOCKCACHE => 'true',BLOCKSIZE => '65536'}

{NAME => 'f2',BLOCKSIZE => '65536'}

{NAME => 'f3',BLOCKSIZE => '65536'}

3 row(s)

QUOTAS

0 row(s)

Took 0.0349 seconds

VERSIONS => '1', -- number of versions kept

EVICT_BLOCKS_ON_CLOSE => 'false',

NEW_VERSION_BEHAVIOR => 'false',

KEEP_DELETED_CELLS => 'FALSE', -- whether deleted cells are retained

CACHE_DATA_ON_WRITE => 'false',

DATA_BLOCK_ENCODING => 'NONE',

TTL => 'FOREVER', -- time to live (expiry)

MIN_VERSIONS => '0', -- minimum number of versions

REPLICATION_SCOPE => '0',

BLOOMFILTER => 'ROW', -- Bloom filter type

CACHE_INDEX_ON_WRITE => 'false',

IN_MEMORY => 'false', -- whether the data is kept in memory

CACHE_BLOOMS_ON_WRITE => 'false', -- whether Bloom filter blocks are cached on write

PREFETCH_BLOCKS_ON_OPEN => 'false',

COMPRESSION => 'NONE', -- compression format

BLOCKCACHE => 'true', -- block cache

BLOCKSIZE => '65536' -- block size |

alter: changes table properties

| Shell

hbase(main):040:0> alter 'doit:student',NAME => 'cf1',VERSIONS => 5,TTL => 10

Updating all regions with the new schema...

1/1 regions updated.

Done.

Took 2.1406 seconds |

alter_async: same as alter, but returns immediately without waiting for the change to complete

| Shell

hbase(main):059:0> alter_async 'doit:student',TTL => 10

Took 1.0268 seconds |

alter_status: shows the execution status of an alter command

| Shell

hbase(main):060:0> alter_status 'doit:student'

1/1 regions updated.

Done.

Took 1.0078 seconds |

list_regions: lists all regions of a table

| Shell

Examples:

hbase> list_regions 'table_name'

hbase> list_regions 'table_name','server_name'

hbase> list_regions 'table_name',{SERVER_NAME => 'server_name',LOCALITY_THRESHOLD => 0.8}

hbase> list_regions 'table_name',{SERVER_NAME => 'server_name',LOCALITY_THRESHOLD => 0.8},['SERVER_NAME']

hbase> list_regions 'table_name',{},['SERVER_NAME','start_key']

hbase> list_regions 'table_name','',['SERVER_NAME','start_key']

hbase(main):045:0> list_regions 'doit:student'

SERVER_NAME | REGION_NAME | START_KEY | END_KEY | SIZE | REQ | LOCALITY |

--------------------------- | ------------------------------------------------------------- | ---------- | ---------- | ----- | ----- | ---------- |

linux02,16020,1683636566738 | doit:student,1683642944714.39f7c8772bc476c4d38c663e879d50da. | | | 0 | 0 | 0.0 |

1 rows

Took 0.0145 seconds |

locate_region: finds the region that holds a given row key of a table

| Shell

hbase(main):062:0> locate_region 'doit:student','key0'

HOST REGION

linux02:16020 {ENCODED => 39f7c8772bc476c4d38c663e879d50da,NAME => 'doit:student,1683642944714.39f7c8772bc476c4d38c663e879d50da.',STARTKEY => '',ENDK

EY => ''}

1 row(s)

Took 0.0027 seconds |

show_filters: lists all filters available in HBase

| Shell

hbase(main):058:0> show_filters

DependentColumnFilter

KeyOnlyFilter

ColumnCountGetFilter

SingleColumnValueFilter

PrefixFilter

SingleColumnValueExcludeFilter

FirstKeyOnlyFilter

ColumnRangeFilter

ColumnValueFilter

TimestampsFilter

FamilyFilter

QualifierFilter

ColumnPrefixFilter

RowFilter

MultipleColumnPrefixFilter

InclusiveStopFilter

PageFilter

ValueFilter

ColumnPaginationFilter

Took 0.0035 seconds |

3.3.4 DML commands

put: inserts or updates one column of one row (inserts if the cell does not exist, updates if it does)

| Shell

hbase(main):007:0> put 'doit:user_info','rowkey_001','f1:name','zss'

Took 0.0096 seconds

hbase(main):008:0> put 'doit:user_info','rowkey_001','f1:age','1'

Took 0.0039 seconds

hbase(main):009:0> put 'doit:user_info','rowkey_001','f1:gender','male'

Took 0.0039 seconds

hbase(main):010:0> put 'doit:user_info','rowkey_001','f2:phone_num','98889'

Took 0.0040 seconds

hbase(main):011:0> put 'doit:user_info','rowkey_001','f2:gender','98889'

Note: a put must name the namespace and table, the row key, the column family and column, and the value.

Values are written one column at a time; a single shell put cannot write several columns at once. |

get: reads one row, or a single cell (a column of a column family) in that row

| Shell

hbase(main):015:0> get 'doit:user_info','rowkey_001','f2:gender'

COLUMN CELL

f2:gender timestamp=1683646645379,value=123

1 row(s)

Took 0.0242 seconds

hbase(main):016:0> get 'doit:user_info','rowkey_001'

COLUMN CELL

f1:age timestamp=1683646450598,value=1

f1:gender timestamp=1683646458847,value=male

f1:name timestamp=1683646443469,value=zss

f2:gender timestamp=1683646645379,value=123

f2:phone_num timestamp=1683646472508,value=98889

1 row(s)

Took 0.0129 seconds

# If Chinese text comes back garbled (shown as escaped bytes), append the {'FORMATTER'=>'toString'} option

hbase(main):137:0> get 'doit:student','rowkey_001',{'FORMATTER'=>'toString'}

COLUMN CELL

f1:name timestamp=1683864047691,value=张三

1 row(s)

Took 0.0057 seconds

Note: get is the fastest way to read data in HBase, but each call returns the data of only one row key. |
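The "garbled" output mentioned above is not corruption: HBase stores raw bytes, and the shell prints non-ASCII bytes in escaped form unless told to decode them. The sketch below imitates that behaviour in plain Java; `EscapedBytesSketch.toEscaped` is a hypothetical helper and only a simplified approximation of how the shell renders bytes.

```java
import java.nio.charset.StandardCharsets;

final class EscapedBytesSketch {

    // Render printable ASCII bytes as characters and every other byte as \xNN.
    static String toEscaped(byte[] bytes) {
        StringBuilder sb = new StringBuilder();
        for (byte b : bytes) {
            int v = b & 0xff;
            if (v >= 0x20 && v < 0x7f) {
                sb.append((char) v);
            } else {
                sb.append(String.format("\\x%02X", v));
            }
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        // "\u5f20\u4e09" is the name used in the example above, written with
        // Unicode escapes so the source file encoding does not matter.
        byte[] stored = "\u5f20\u4e09".getBytes(StandardCharsets.UTF_8);

        // What an undecoded display looks like: six escaped UTF-8 bytes.
        System.out.println(toEscaped(stored));

        // What a decoding formatter produces: the original two characters.
        System.out.println(new String(stored, StandardCharsets.UTF_8));
    }
}
```

The same applies in client code: always decode values with the charset they were written in, rather than relying on the platform default.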

scan: scans the data in a table

| Shell

hbase(main):012:0> scan 'doit:user_info'

ROW COLUMN+CELL

rowkey_001 column=f1:age,timestamp=1683646450598,value=1

rowkey_001 column=f1:gender,timestamp=1683646458847,value=male

rowkey_001 column=f1:name,timestamp=1683646443469,value=zss

rowkey_001 column=f2:gender,timestamp=1683646483495,value=98889

rowkey_001 column=f2:phone_num,timestamp=1683646472508,value=98889

1 row(s)

Took 0.1944 seconds

scan 'tbname',{Filter}

scan 'itcast:t2'

# Row key prefix filter

scan 'itcast:t2',{ROWPREFIXFILTER => '2021'}

scan 'itcast:t2',{ROWPREFIXFILTER => '202101'}

# Row key range filter

# STARTROW: start at this row key, inclusive (closed bound)

# STOPROW: stop at this row key, exclusive (open bound)

scan 'itcast:t2',{STARTROW=>'20210101_000'}

scan 'itcast:t2',{STARTROW=>'20210201_001'}

scan 'itcast:t2',{STARTROW=>'20210101_000',STOPROW=>'20210201_001'}

scan 'itcast:t2',{STARTROW=>'20210201_001',STOPROW=>'20210301_007'}

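|- When reading from HBase, ==prefer indexed lookups: query by Rowkey conditions==

- Avoid full table scans where possible

- Indexed lookup: like a dictionary with a pinyin index; to find a character you look it up in the index first, then go to the entry

- Full table scan: like a dictionary with no index; to find a character you turn the pages one by one

- ==All Rowkey queries in HBase are prefix matches==

# If Chinese text comes back garbled (shown as escaped bytes), append the {'FORMATTER'=>'toString'} option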

hbase(main):130:0> scan 'doit:student',{'FORMATTER'=>'toString'}

ROW COLUMN+CELL

rowkey_001 column=f1:name,timestamp=1683863389259,value=张三

1 row(s)

Took 0.0063 seconds |
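A prefix scan and a STARTROW/STOPROW scan are the same kind of operation over sorted keys: a prefix is turned into a half-open range whose stop key is the prefix with its last byte incremented. The sketch below shows this with a sorted set in plain Java; `PrefixRangeSketch`, `stopRowForPrefix`, and `scanPrefix` are hypothetical names, the keys are assumed to be ASCII, and the sample keys are invented for illustration.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.NavigableSet;
import java.util.TreeSet;

final class PrefixRangeSketch {

    // Smallest key that is greater than every key starting with the prefix.
    // An empty result means "no upper bound" (the prefix was empty or all 0xFF bytes).
    static byte[] stopRowForPrefix(byte[] prefix) {
        int i = prefix.length - 1;
        while (i >= 0 && prefix[i] == (byte) 0xFF) {
            i--;
        }
        if (i < 0) {
            return new byte[0];
        }
        byte[] stop = Arrays.copyOf(prefix, i + 1);
        stop[i]++;
        return stop;
    }

    // Return all keys in [prefix, stopRow): start inclusive, stop exclusive.
    static List<String> scanPrefix(NavigableSet<String> sortedKeys, String prefix) {
        byte[] stop = stopRowForPrefix(prefix.getBytes(StandardCharsets.US_ASCII));
        NavigableSet<String> range = stop.length == 0
                ? sortedKeys.tailSet(prefix, true)
                : sortedKeys.subSet(prefix, true, new String(stop, StandardCharsets.US_ASCII), false);
        return new ArrayList<>(range);
    }

    public static void main(String[] args) {
        NavigableSet<String> keys = new TreeSet<>(Arrays.asList(
                "20210101_000", "20210115_003", "20210201_001", "20210301_007"));
        // Prefix '202101' becomes the range ['202101', '202102').
        System.out.println(scanPrefix(keys, "202101"));
    }
}
```

Because the rows are sorted, such a range can be served by seeking to the start key and reading forward, which is why row key conditions are so much cheaper than filters on values.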

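incr: increments a counter column; the caller does not need to remember the previous value

| Shell

Note: values written with put in the shell are stored as strings, whereas a counter is stored as a long, so use incr on a column that was not written with put.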

hbase(main):005:0> incr 'doit:student','rowkey002','f1:age'

COUNTER VALUE = 1

Took 0.1877 seconds

hbase(main):006:0> incr 'doit:student','rowkey002','f1:age'

COUNTER VALUE = 2

Took 0.0127 seconds

hbase(main):007:0> incr 'doit:student','rowkey002','f1:age'

COUNTER VALUE = 3

Took 0.0079 seconds

hbase(main):011:0> incr 'doit:student','rowkey002','f1:age'

COUNTER VALUE = 4

Took 0.0087 seconds |
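The difference between a string value and a counter value is easiest to see at the byte level. The sketch below is plain Java with no HBase dependency; `CounterEncodingSketch` and its methods are hypothetical, and it assumes that a counter is an 8-byte big-endian long while a shell put stores the characters of the string.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

final class CounterEncodingSketch {

    // Encode a long as 8 big-endian bytes (ByteBuffer's default byte order).
    static byte[] encodeLong(long value) {
        return ByteBuffer.allocate(Long.BYTES).putLong(value).array();
    }

    // Increment a stored counter; reject anything that is not exactly 8 bytes wide.
    static long increment(byte[] current, long delta) {
        if (current.length != Long.BYTES) {
            throw new IllegalArgumentException(
                    "not an 8-byte counter: " + current.length + " byte(s)");
        }
        return ByteBuffer.wrap(current).getLong() + delta;
    }

    public static void main(String[] args) {
        byte[] writtenAsCounter = encodeLong(1L);                        // 8 bytes
        byte[] writtenAsString = "1".getBytes(StandardCharsets.UTF_8);  // 1 byte

        System.out.println(increment(writtenAsCounter, 1L));
        try {
            increment(writtenAsString, 1L);
        } catch (IllegalArgumentException e) {
            // The string "1" is a single byte, so it cannot be treated as a counter.
            System.out.println(e.getMessage());
        }
    }
}
```

In practice this means a column should be used either as a counter (written only through increments) or as an ordinary value, not both.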

count: counts the rows in a table

| Shell

hbase(main):031:0> count 'doit:user_info'

1 row(s)

Took 0.0514 seconds

=> 1 |

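delete: deletes the value of one column in a row

| Shell

# Delete the value of one column in a row; the full form is delete 'table','rowkey','family:column'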

hbase(main):041:0> delete 'doit:student','f1:id'

Took 0.0152 seconds |

deleteall: deletes a whole row

| Shell

# Delete a row by its row key

hbase(main):042:0> deleteall 'doit:student','rowkey_001'

Took 0.0065 seconds |

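append: appends to a value; if the column does not exist it is created, otherwise the new value is appended to the end of the existing one

| Shell

# Append to the existing value; the full form is append 'table','rowkey','family:column','value'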

hbase(main):098:0> append 'doit:student','hheda'

CURRENT VALUE = zsshheda

Took 0.0070 seconds

hbase(main):100:0> get 'doit:student','f1:name'

COLUMN CELL

f1:name timestamp=1683861530789,value=zsshheda

1 row(s)

Took 0.0057 seconds

# Note: if the column does not exist yet, it is created automatically and set to the given value

hbase(main):101:0> append 'doit:student','f1:name1','hheda'

CURRENT VALUE = hheda

Took 0.0063 seconds

hbase(main):102:0> get 'doit:student','f1:name1'

COLUMN CELL

f1:name1 timestamp=1683861631392,value=hheda

1 row(s)

Took 0.0063 seconds |

truncate: removes all data from a table

| Shell

# Execution steps

First the table is disabled

then it is dropped

and finally it is re-created

hbase(main):044:0> truncate 'doit:student'

Truncating 'doit:student' table (it may take a while):

Disabling table...

Truncating table...

Took 2.5457 seconds |

truncate_preserve: truncates a table but keeps its region boundaries

| Shell

hbase(main):008:0> truncate_preserve 'doit:test'

Truncating 'doit:test' table (it may take a while):

Disabling table...

Truncating table...

Took 4.1352 seconds

hbase(main):009:0> list_regions 'doit:test'

SERVER_NAME | REGION_NAME | START_KEY | END_KEY | SIZE | REQ | LOCALITY |

--------------------------- | -------------------------------------------------------------------- | ---------- | ---------- | ----- | ----- | ---------- |

linux03,1684200651855 | doit:test,1684205468848.920ae3e043ad95890c4f5693cb663bc5. | | rowkey_010 | 0 | 0 | 0.0 |

linux01,1684205091382 | doit:test,rowkey_010,1684205468848.f8a21615be51f42c562a2338b1efa409. | rowkey_010 | rowkey_020 | 0 | 0 | 0.0 |

linux02,1684200651886 | doit:test,rowkey_020,1684205468848.25d62e8cc2fdaecec87234b8d28f0827. | rowkey_020 | rowkey_030 | 0 | 0 | 0.0 |

linux03,1684200651855 | doit:test,rowkey_030,1684205468848.2b0468e6643b95159fa6e210fa093e66. | rowkey_030 | rowkey_040 | 0 | 0 | 0.0 |

linux01,1684205091382 | doit:test,rowkey_040,1684205468848.fb12c09c7c73cfeff0bf79b5dda076cb. | rowkey_040 | | 0 | 0 | 0.0 |

5 rows

Took 0.1019 seconds |

get_counter: reads the current value of a counter

| Shell

hbase(main):017:0> incr 'doit:student','f1:name2'

COUNTER VALUE = 1

Took 0.0345 seconds

hbase(main):018:0> incr 'doit:student','f1:name2'

COUNTER VALUE = 2

Took 0.0066 seconds

hbase(main):019:0> incr 'doit:student','f1:name2'

COUNTER VALUE = 3

Took 0.0059 seconds

hbase(main):020:0> incr 'doit:student','f1:name2'

COUNTER VALUE = 4

Took 0.0061 seconds

hbase(main):021:0> incr 'doit:student','f1:name2'

COUNTER VALUE = 5

Took 0.0064 seconds

hbase(main):022:0> incr 'doit:student','f1:name2'

COUNTER VALUE = 6

Took 0.0062 seconds

hbase(main):023:0> incr 'doit:student','f1:name2'

COUNTER VALUE = 7

Took 0.0066 seconds

hbase(main):024:0> incr 'doit:student','f1:name2'

COUNTER VALUE = 8

Took 0.0059 seconds

hbase(main):025:0> incr 'doit:student','f1:name2'

COUNTER VALUE = 9

Took 0.0063 seconds

hbase(main):026:0> incr 'doit:student','f1:name2'

COUNTER VALUE = 10

Took 0.0061 seconds

hbase(main):027:0> get_counter 'doit:student','f1:name2'

COUNTER VALUE = 10

Took 0.0040 seconds

hbase(main):028:0> |

get_splits: shows the split keys of a table, and with them the number of regions it has

| Shell

hbase(main):148:0> get_splits 'doit:test'

Total number of splits = 5

rowkey_010

rowkey_020

rowkey_030

rowkey_040

Took 0.0120 seconds

=> ["rowkey_010","rowkey_020","rowkey_030","rowkey_040"] |

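| Key takeaway: in production, HBase is rarely operated through hbase shell; the shell is mostly used for learning, testing, and troubleshooting. Working with HBase comes down to writing data and then querying it: writes go through the API, bulk load, and similar mechanisms, and reads go through API calls. |

Java client

3.4 Adding the Maven dependencies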

| XML

<dependencies>

<dependency>

<groupId>org.apache.zookeeper</groupId>

<artifactId>zookeeper</artifactId>

<version>3.4.6</version>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-client</artifactId>

<version>2.2.5</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>3.2.1</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>3.2.1</version>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-server</artifactId>

<version>2.2.5</version>

</dependency>

<!-- Use MapReduce jobs to work with HBase, e.g. for data import -->

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-mapreduce</artifactId>

<version>2.2.5</version>

</dependency>

<dependency>

<groupId>com.google.code.gson</groupId>

<artifactId>gson</artifactId>

<version>2.8.5</version>

</dependency>

<!-- Phoenix: a tool that integrates with HBase -->

<dependency>

<groupId>org.apache.phoenix</groupId>

<artifactId>phoenix-core</artifactId>

<version>5.0.0-HBase-2.0</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-auth</artifactId>

<version>3.1.2</version>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.5.1</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

</configuration>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-assembly-plugin</artifactId>

<version>2.6</version>

<configuration>

<descriptorRefs>

<descriptorRef>jar-with-dependencies</descriptorRef>

</descriptorRefs>

</configuration>

<executions>

<execution>

<id>make-assembly</id>

<!-- bind to the packaging phase -->

<phase>package</phase>

<goals>

<goal>single</goal>

</goals>

</execution>

</executions>

</plugin>

</plugins>

</build> |

Getting an HBase connection and listing all tables

| Java

package com.doit.day01;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.Admin;

import org.apache.hadoop.hbase.client.Connection;

import org.apache.hadoop.hbase.client.ConnectionFactory;

import java.io.IOException;

/**

* The HBase Java client only needs to connect to the ZooKeeper ensemble

* to find the location of the HBase cluster.

* Core objects:

* Configuration: HBaseConfiguration.create();

* Connection: ConnectionFactory.createConnection(conf);

* Table: conn.getTable(TableName.valueOf("tb_b")); operates on a table (DML)

* Admin: conn.getAdmin(); performs DDL on the HBase system, e.g. on namespaces

*/

public class ConnectionDemo {

public static void main(String[] args) throws Exception {

//Create the HBase configuration object

Configuration conf = HBaseConfiguration.create();

//Set the ZooKeeper quorum address in the configuration

conf.set("hbase.zookeeper.quorum","linux01:2181,linux02:2181,linux03:2181");

//Create the HBase connection

Connection conn = ConnectionFactory.createConnection(conf);

//Get the Admin object used for administrative operations

Admin admin = conn.getAdmin();

//List all tables

TableName[] tableNames = admin.listTableNames();

//List all tables in a given namespace

TableName[] doits = admin.listTableNamesByNamespace("doit");

for (TableName tableName : doits) {

byte[] name = tableName.getName();

System.out.println(new String(name));

}

conn.close();

}

} |
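The demo above closes the connection only on the success path. In the HBase client, Connection, Admin, and Table are closeable, so one option is try-with-resources, which closes them in reverse order even when an exception is thrown. The sketch below demonstrates only that language mechanism with a stand-in class; `CloseOrderSketch` and `StubResource` are hypothetical and do not talk to HBase.

```java
import java.util.ArrayList;
import java.util.List;

final class CloseOrderSketch {

    // Stand-in for a closeable client object; it records when it is closed.
    static final class StubResource implements AutoCloseable {
        private final String name;
        private final List<String> closeLog;

        StubResource(String name, List<String> closeLog) {
            this.name = name;
            this.closeLog = closeLog;
        }

        @Override
        public void close() {
            closeLog.add(name);
        }
    }

    // Open two resources, optionally fail inside the block, and report the close order.
    static List<String> run(boolean fail) {
        List<String> closed = new ArrayList<>();
        try (StubResource conn = new StubResource("connection", closed);
             StubResource admin = new StubResource("admin", closed)) {
            if (fail) {
                throw new IllegalStateException("simulated failure");
            }
        } catch (IllegalStateException e) {
            // Both resources have already been closed by the time we get here.
        }
        return closed;
    }

    public static void main(String[] args) {
        System.out.println(run(false));
        System.out.println(run(true));
    }
}
```

Applied to the demos in this section, the same pattern would wrap ConnectionFactory.createConnection(conf) and conn.getAdmin() or conn.getTable(...) in the try header instead of calling conn.close() at the end.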

Listing all namespaces

| Java

package com.doit.day01;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.NamespaceDescriptor;

import org.apache.hadoop.hbase.client.Admin;

import org.apache.hadoop.hbase.client.Connection;

import org.apache.hadoop.hbase.client.ConnectionFactory;

/**

* The HBase Java client only needs to connect to the ZooKeeper ensemble

* to find the location of the HBase cluster.

* Core objects:

* Configuration: HBaseConfiguration.create();

* Connection: ConnectionFactory.createConnection(conf);

* Admin: conn.getAdmin(); performs DDL on the HBase system, e.g. on namespaces

*/

public class NameSpaceDemo {

public static void main(String[] args) throws Exception {

//Create the HBase configuration object

Configuration conf = HBaseConfiguration.create();

//Set the ZooKeeper quorum address in the configuration

conf.set("hbase.zookeeper.quorum","linux01:2181,linux02:2181,linux03:2181");

//Create the HBase connection

Connection conn = ConnectionFactory.createConnection(conf);

//Get the Admin object used for administrative operations

Admin admin = conn.getAdmin();

//Get the descriptors of all namespaces

NamespaceDescriptor[] namespaceDescriptors = admin.listNamespaceDescriptors();

for (NamespaceDescriptor namespaceDescriptor : namespaceDescriptors) {

//Read the namespace name from the descriptor

String name = namespaceDescriptor.getName();

System.out.println(name);

}

conn.close();

}

} |

Creating a namespace

| Java

package com.doit.day01;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.NamespaceDescriptor;

import org.apache.hadoop.hbase.client.Admin;

import org.apache.hadoop.hbase.client.Connection;

import org.apache.hadoop.hbase.client.ConnectionFactory;

import org.apache.hadoop.hbase.protobuf.generated.HBaseProtos;

import java.util.Properties;

/**

* The HBase Java client only needs to connect to the ZooKeeper ensemble

* to find the location of the HBase cluster.

* Core objects:

* Configuration: HBaseConfiguration.create();

* Connection: ConnectionFactory.createConnection(conf);

* Admin: conn.getAdmin(); performs DDL on the HBase system, e.g. on namespaces

*/

public class CreateNameSpaceDemo {

public static void main(String[] args) throws Exception {

//Create the HBase configuration object

Configuration conf = HBaseConfiguration.create();

//Set the ZooKeeper quorum address in the configuration

conf.set("hbase.zookeeper.quorum","linux01:2181,linux02:2181,linux03:2181");

//Create the HBase connection

Connection conn = ConnectionFactory.createConnection(conf);

//Get the Admin object used for administrative operations

Admin admin = conn.getAdmin();

//Get a builder for the namespace descriptor

NamespaceDescriptor.Builder spaceFromJava = NamespaceDescriptor.create("spaceFromJava");

//Properties can also be set on the namespace

spaceFromJava.addConfiguration("author","robot_jiang");

spaceFromJava.addConfiguration("desc","this is my first java namespace...");

//Build the namespace descriptor from the builder

NamespaceDescriptor build = spaceFromJava.build();

//Create the namespace

admin.createNamespace(build);

conn.close();

}

} |

Creating a table with multiple column families

| Java

package com.doit.day01;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.NamespaceDescriptor;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.*;

import org.apache.hadoop.hbase.protobuf.generated.TableProtos;

import java.nio.charset.StandardCharsets;

import java.util.ArrayList;

import java.util.Map;

import java.util.Set;

/**

* The HBase Java client only needs to connect to the ZooKeeper ensemble

* to find the location of the HBase cluster.

* Core objects:

* Configuration: HBaseConfiguration.create();

* Connection: ConnectionFactory.createConnection(conf);

* Admin: conn.getAdmin(); performs DDL on the HBase system, e.g. on namespaces

*/

public class CreateTableDemo {

public static void main(String[] args) throws Exception {

//Create the HBase configuration object

Configuration conf = HBaseConfiguration.create();

//Set the ZooKeeper quorum address in the configuration

conf.set("hbase.zookeeper.quorum","linux01:2181,linux02:2181,linux03:2181");

//Create the HBase connection

Connection conn = ConnectionFactory.createConnection(conf);

Admin admin = conn.getAdmin();

//Create a builder for the table descriptor

TableDescriptorBuilder java = TableDescriptorBuilder.newBuilder(TableName.valueOf("java"));

//Column families are handed to the table builder as a collection

ArrayList<ColumnFamilyDescriptor> list = new ArrayList<>();

//Create a builder for each column family

ColumnFamilyDescriptorBuilder col1 = ColumnFamilyDescriptorBuilder.newBuilder("f1".getBytes(StandardCharsets.UTF_8));

ColumnFamilyDescriptorBuilder col2 = ColumnFamilyDescriptorBuilder.newBuilder("f2".getBytes(StandardCharsets.UTF_8));

ColumnFamilyDescriptorBuilder col3 = ColumnFamilyDescriptorBuilder.newBuilder("f3".getBytes(StandardCharsets.UTF_8));

//Build the column family descriptors

ColumnFamilyDescriptor build1 = col1.build();

ColumnFamilyDescriptor build2 = col2.build();

ColumnFamilyDescriptor build3 = col3.build();

//Add the column families to the list

list.add(build1);

list.add(build2);

list.add(build3);

//Set the column families on the table

java.setColumnFamilies(list);

//Build the table descriptor

TableDescriptor build = java.build();

//Create the table

admin.createTable(build);

conn.close();

}

} |

Adding data to a table

| Java

package com.doit.day01;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.*;

import java.nio.charset.StandardCharsets;

import java.util.ArrayList;

import java.util.Arrays;

/**

* Note: a put must specify the namespace, table, row key, column family, and column to write to, and the value to write

*/

public class PutDataDemo {

public static void main(String[] args) throws Exception {

//Create the HBase configuration object

Configuration conf = HBaseConfiguration.create();

//Set the ZooKeeper quorum address in the configuration

conf.set("hbase.zookeeper.quorum","linux01:2181,linux02:2181,linux03:2181");

//Create the HBase connection

Connection conn = ConnectionFactory.createConnection(conf);

Admin admin = conn.getAdmin();

//Choose the table to put data into

Table java = conn.getTable(TableName.valueOf("java"));

//Create a Put object for each row key, then add family, qualifier, and value

Put put = new Put("rowkey_001".getBytes(StandardCharsets.UTF_8));

put.addColumn("f1".getBytes(StandardCharsets.UTF_8),"name".getBytes(StandardCharsets.UTF_8),"xiaotao".getBytes(StandardCharsets.UTF_8));

put.addColumn("f1".getBytes(StandardCharsets.UTF_8),"age".getBytes(StandardCharsets.UTF_8),"42".getBytes(StandardCharsets.UTF_8));

Put put1 = new Put("rowkey_002".getBytes(StandardCharsets.UTF_8));

put1.addColumn("f1".getBytes(StandardCharsets.UTF_8),"name".getBytes(StandardCharsets.UTF_8),"xiaotao".getBytes(StandardCharsets.UTF_8));

put1.addColumn("f1".getBytes(StandardCharsets.UTF_8),"age".getBytes(StandardCharsets.UTF_8),"42".getBytes(StandardCharsets.UTF_8));

Put put2 = new Put("rowkey_003".getBytes(StandardCharsets.UTF_8));

put2.addColumn("f1".getBytes(StandardCharsets.UTF_8),"name".getBytes(StandardCharsets.UTF_8),"xiaotao".getBytes(StandardCharsets.UTF_8));

put2.addColumn("f1".getBytes(StandardCharsets.UTF_8),"age".getBytes(StandardCharsets.UTF_8),"42".getBytes(StandardCharsets.UTF_8));

java.put(Arrays.asList(put,put1,put2));

conn.close();

}

} |

Getting data from a table

| Java

package com.doit.day01;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.Cell;

import org.apache.hadoop.hbase.CellUtil;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.*;

import java.nio.charset.StandardCharsets;

/**

* Note: a get must specify the table and row key, and can optionally be narrowed to a column family or a single column

*/

public class GetDataDemo {

public static void main(String[] args) throws Exception {

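//Create the HBase configuration object

Configuration conf = HBaseConfiguration.create();

//Set the ZooKeeper quorum address in the configuration

conf.set("hbase.zookeeper.quorum","linux01:2181,linux02:2181,linux03:2181");

//Create the HBase connection

Connection conn = ConnectionFactory.createConnection(conf);

//Choose the table to read from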

Table java = conn.getTable(TableName.valueOf("java"));

Get get = new Get("rowkey_001".getBytes(StandardCharsets.UTF_8));

// get.addFamily("f1".getBytes(StandardCharsets.UTF_8));

get.addColumn("f1".getBytes(StandardCharsets.UTF_8),"name".getBytes(StandardCharsets.UTF_8));

Result result = java.get(get);

boolean advance = result.advance();

if(advance){

Cell current = result.current();

String family = new String(CellUtil.cloneFamily(current));

String qualifier = new String(CellUtil.cloneQualifier(current));

String row = new String(CellUtil.cloneRow(current));

String value = new String(CellUtil.cloneValue(current));

System.out.println(row+","+family+","+qualifier+","+value);

}

conn.close();

}

} |

Scanning data in a table

| Java

package com.doit.day01;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.*;

import org.apache.hadoop.hbase.client.*;

import java.nio.charset.StandardCharsets;

import java.util.Arrays;

import java.util.Iterator;

/**

* Note: a scan reads the rows from a start row (inclusive) up to a stop row (exclusive)

*/

public class ScanDataDemo {

public static void main(String[] args) throws Exception {

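//Create the HBase configuration object

Configuration conf = HBaseConfiguration.create();

//Set the ZooKeeper quorum address in the configuration

conf.set("hbase.zookeeper.quorum","linux01:2181,linux02:2181,linux03:2181");

//Create the HBase connection

Connection conn = ConnectionFactory.createConnection(conf);

//Choose the table to scan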

Table java = conn.getTable(TableName.valueOf("java"));

Scan scan = new Scan();

scan.withStartRow("rowkey_001".getBytes(StandardCharsets.UTF_8));

scan.withStopRow("rowkey_004".getBytes(StandardCharsets.UTF_8));

ResultScanner scanner = java.getScanner(scan);

Iterator<Result> iterator = scanner.iterator();

while (iterator.hasNext()){

Result next = iterator.next();

while (next.advance()){

Cell current = next.current();

String family = new String(CellUtil.cloneFamily(current));

String row = new String(CellUtil.cloneRow(current));

String qualifier = new String(CellUtil.cloneQualifier(current));

String value = new String(CellUtil.cloneValue(current));

System.out.println(row+","+value);

}

}

conn.close();

}

} |

Deleting a row

| Java

package com.doit.day02;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.*;

import java.io.IOException;

import java.nio.charset.StandardCharsets;

public class _12_删除一行数据 {

public static void main(String[] args) throws IOException {

Configuration conf = HBaseConfiguration.create();

conf.set("hbase.zookeeper.quorum","linux01");

Connection conn = ConnectionFactory.createConnection(conf);

Table java = conn.getTable(TableName.valueOf("java"));

Delete delete = new Delete("rowkey_001".getBytes(StandardCharsets.UTF_8));

java.delete(delete);

//Release the table and the connection once the delete has been sent

java.close();

conn.close();

}

} |

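The HBase client operations are quite tedious.

Connecting through the Java client is especially tedious: the code is verbose and hard to memorize.

The further you study, the more the earlier material matters. Java fundamentals, for instance, directly affect later work, especially anything that requires setting up a connection from Java to another service.

Steady effort pays off, because earlier material directly affects what comes later. For someone like me, with a poor memory who forgets things soon after learning them, regularly organizing notes and drawing XMind mind maps helps the material stick.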

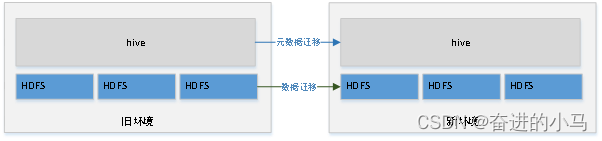

文章浏览阅读565次。hive和hbase数据迁移_hive转hbase

文章浏览阅读565次。hive和hbase数据迁移_hive转hbase 文章浏览阅读707次。基于单机版安装HBase,前置条件为Hadoop...

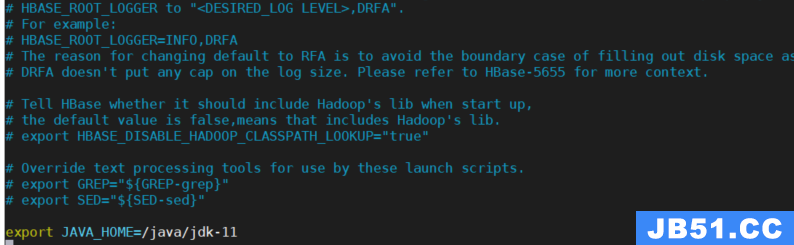

文章浏览阅读707次。基于单机版安装HBase,前置条件为Hadoop... 文章浏览阅读1k次,点赞16次,收藏21次。整理和梳理日常hbas...

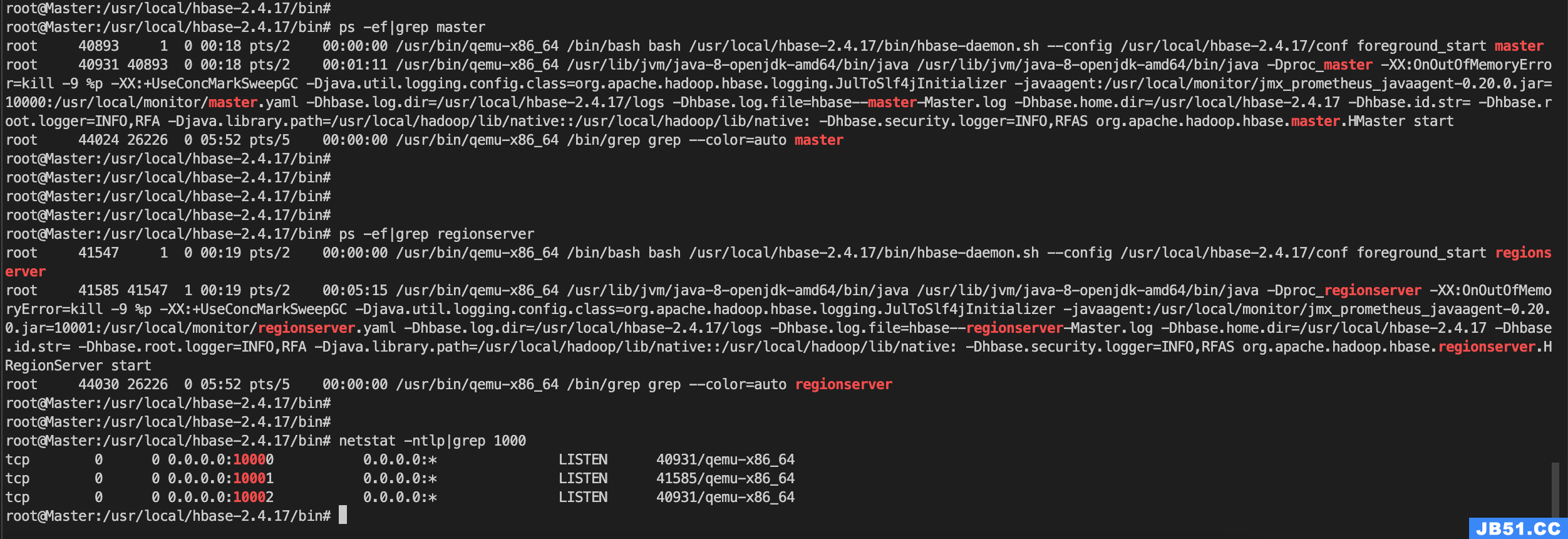

文章浏览阅读1k次,点赞16次,收藏21次。整理和梳理日常hbas...