Problem description

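I create some dummy data and train an sklearn LogisticRegression on it. Then I want to reproduce the output of predict_proba using only my own calculation from coef_ and intercept_, but the results are different. The setup is as follows: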

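from sklearn import linear_model

# Data (placeholder: the training set in the original post was garbled;
# any small dataset with three classes should show the same mismatch)
X = [[0, 0], [0, 1], [1, 0], [1, 1]]
y = [0, 1, 2, 2]

# Fit the classifier
clf = linear_model.LogisticRegression(C=1e5, multi_class="ovr", class_weight="balanced")
clf.fit(X, y)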

Then I compute the output using only what I know about the sigmoid and softmax functions:

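import numpy as np
from scipy.special import expit, softmax

# Sigmoid of each class score for the sample [0, 0], then softmax over the three values
softmax([
    expit(np.dot([[0, 0]], clf.coef_[0]) + clf.intercept_[0]),
    expit(np.dot([[0, 0]], clf.coef_[1]) + clf.intercept_[1]),
    expit(np.dot([[0, 0]], clf.coef_[2]) + clf.intercept_[2]),
])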

But the result differs from predict_proba. clf.predict_proba([[0, 0]]) returns array([[0.281399, 0.15997556, 0.55862544]]), while my calculation returns array([[0.29882052], [0.24931448], [0.451865]]).
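A NumPy-only sketch of where the mismatch comes from. The three decision values z are hypothetical stand-ins for np.dot(x, clf.coef_[k]) + clf.intercept_[k]; they are made up, not taken from the post. Because sigmoid outputs all lie between 0 and 1, applying softmax to them squashes a second time and pulls the result toward uniform, so it cannot match a plain rescaling of the sigmoid outputs.

```python
import numpy as np

def sigmoid(z):
    # Logistic function, equivalent to scipy.special.expit
    return 1.0 / (1.0 + np.exp(-z))

def softmax(v):
    # Numerically stable softmax over a 1-D array
    e = np.exp(v - np.max(v))
    return e / e.sum()

# Hypothetical per-class decision values for one sample (not from the post)
z = np.array([-0.5, -1.2, 0.3])

s = sigmoid(z)                   # one-vs-rest scores; they do not sum to 1
softmax_of_sigmoid = softmax(s)  # what the question computes
normalized = s / s.sum()         # rescale the sigmoid outputs so they sum to 1

print(np.round(softmax_of_sigmoid, 4))
print(np.round(normalized, 4))
```

Both vectors sum to 1, but the softmax version is visibly flatter, which matches the pattern in the numbers reported above.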

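Solution

With multi_class="ovr", predict_proba does not apply softmax to the sigmoid outputs. It takes the sigmoid of each class's score and divides each value by the sum over all classes, so that each row sums to 1. Applying softmax directly to the sigmoid values, as in the question, therefore gives different numbers; applying softmax to their logarithms reproduces the same normalization.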
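The identity the answer code relies on, written out for a sample x with K classes and per-class scores z_k = w_k · x + b_k (here w_k stands for clf.coef_[k] and b_k for clf.intercept_[k]):

```latex
p_k = \frac{\sigma(z_k)}{\sum_{j=1}^{K} \sigma(z_j)}
    = \frac{\exp\bigl(\log \sigma(z_k)\bigr)}{\sum_{j=1}^{K} \exp\bigl(\log \sigma(z_j)\bigr)}
    = \operatorname{softmax}\bigl(\log \sigma(z)\bigr)_k,
\qquad \sigma(t) = \frac{1}{1 + e^{-t}}
```

The question instead computes softmax(σ(z))_k, which is not equal to p_k in general.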

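from sklearn import linear_model
from scipy.special import expit, softmax
import numpy as np

# Data (placeholder: the training set in the original post was garbled;
# the printed values below are the ones reported for the original data)
X = [[0, 0], [0, 1], [1, 0], [1, 1]]
y = [0, 1, 2, 2]

# Classifier
clf = linear_model.LogisticRegression(C=1e5, multi_class="ovr", class_weight="balanced")
clf.fit(X, y)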

# Predicted probabilities
print(clf.predict_proba([[0, 0]]))
# [[0.281399 0.15997556 0.55862544]]  (output reported for the original data)

# Recalculate without softmax: sigmoid of each class score, divided by their sum
prob1 = np.array([
    expit(np.dot([[0, 0]], clf.coef_[0]) + clf.intercept_[0]),
    expit(np.dot([[0, 0]], clf.coef_[1]) + clf.intercept_[1]),
    expit(np.dot([[0, 0]], clf.coef_[2]) + clf.intercept_[2]),
]).reshape(1, -1)
print(prob1 / np.sum(prob1))
# [[0.281399 0.15997556 0.55862544]]  (output reported for the original data)

# Recalculate with softmax: softmax of the log-sigmoid values gives the same normalization
prob2 = np.log(prob1)
print(softmax(prob2))
# [[0.281399 0.15997556 0.55862544]]  (output reported for the original data)
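A vectorized sketch of the same check for a whole batch of samples, using only NumPy. The helper names (decision_values, ovr_proba, multinomial_proba) are hypothetical, and coef / intercept are assumed to have the shapes of clf.coef_ (n_classes, n_features) and clf.intercept_ (n_classes,). The multinomial variant is included for contrast: the scikit-learn documentation for predict_proba states that a multinomial model uses the softmax function, which is applied to the raw decision values rather than to sigmoid outputs.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def softmax_rows(z):
    # Row-wise, numerically stable softmax
    e = np.exp(z - z.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def decision_values(X, coef, intercept):
    # Linear scores X @ coef.T + intercept, shape (n_samples, n_classes)
    return np.asarray(X, dtype=float) @ np.asarray(coef).T + np.asarray(intercept)

def ovr_proba(X, coef, intercept):
    # One-vs-rest: sigmoid per class, then rescale each row to sum to 1
    s = sigmoid(decision_values(X, coef, intercept))
    return s / s.sum(axis=1, keepdims=True)

def multinomial_proba(X, coef, intercept):
    # Multinomial: softmax applied directly to the decision values
    return softmax_rows(decision_values(X, coef, intercept))

# Hypothetical fitted parameters for 3 classes and 2 features (not from the post)
coef = np.array([[-2.0, -1.5], [1.0, -0.5], [0.8, 1.7]])
intercept = np.array([0.4, -1.0, 0.2])
X = [[0, 0], [0, 1], [1, 1]]

p_ovr = ovr_proba(X, coef, intercept)
# Same normalization written as softmax of log-sigmoid, as in the answer code
p_ovr_via_softmax = softmax_rows(np.log(sigmoid(decision_values(X, coef, intercept))))
p_multi = multinomial_proba(X, coef, intercept)

print(p_ovr)
print(p_multi)
```

With a fitted ovr model that has three or more classes, ovr_proba(X, clf.coef_, clf.intercept_) should agree with clf.predict_proba(X).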

设置时间 控制面板

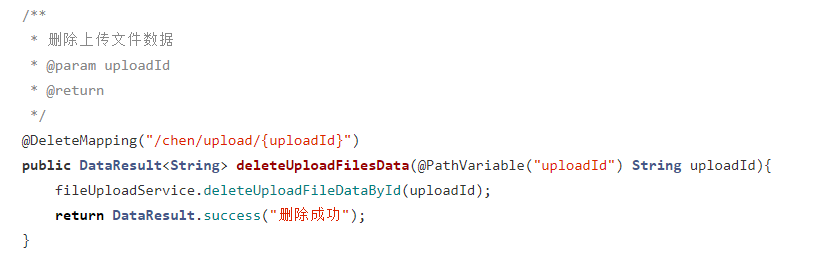

设置时间 控制面板 错误1:Request method ‘DELETE‘ not supported 错误还原:...

错误1:Request method ‘DELETE‘ not supported 错误还原:...