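I have an RDD like this: byUserHour: org.apache.spark.rdd.RDD[(String, String, Int)]. I would like to build a sparse matrix from the data so that I can compute statistics such as the median and mean. The RDD contains the row_id, column_id and value. I have two arrays containing the row_id and column_id strings for lookup.

Here is my attempt: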

import breeze.linalg._

val builder = new CSCMatrix.Builder[Int](rows=BCnUsers.value.toInt,cols=broadcastTimes.value.size)

byUserHour.foreach{x =>

val row = userids.indexOf(x._1)

val col = broadcastTimes.value.indexOf(x._2)

builder.add(row,col,x._3)}

builder.result()

Here is my error:

14/06/10 16:39:34 INFO DAGScheduler: Failed to run foreach at <console>:38

org.apache.spark.SparkException: Job aborted due to stage failure: Task not serializable: java.io.NotSerializableException: breeze.linalg.CSCMatrix$Builder$mcI$sp

at org.apache.spark.scheduler.DAGScheduler.org$apache$spark$scheduler$DAGScheduler$$failJobAndIndependentStages(DAGScheduler.scala:1033)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$abortStage$1.apply(DAGScheduler.scala:1017)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$abortStage$1.apply(DAGScheduler.scala:1015)

at scala.collection.mutable.ResizableArray$class.foreach(ResizableArray.scala:59)

at scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:47)

at org.apache.spark.scheduler.DAGScheduler.abortStage(DAGScheduler.scala:1015)

at org.apache.spark.scheduler.DAGScheduler.org$apache$spark$scheduler$DAGScheduler$$submitMissingTasks(DAGScheduler.scala:770)

at org.apache.spark.scheduler.DAGScheduler.org$apache$spark$scheduler$DAGScheduler$$submitStage(DAGScheduler.scala:713)

at org.apache.spark.scheduler.DAGScheduler.handleJobSubmitted(DAGScheduler.scala:697)

at org.apache.spark.scheduler.DAGSchedulerEventProcessActor$$anonfun$receive$2.applyOrElse(DAGScheduler.scala:1176)

at akka.actor.ActorCell.receiveMessage(ActorCell.scala:498)

at akka.actor.ActorCell.invoke(ActorCell.scala:456)

at akka.dispatch.Mailbox.processMailbox(Mailbox.scala:237)

at akka.dispatch.Mailbox.run(Mailbox.scala:219)

at akka.dispatch.ForkJoinExecutorConfigurator$AkkaForkJoinTask.exec(AbstractDispatcher.scala:386)

at scala.concurrent.forkjoin.ForkJoinTask.doExec(ForkJoinTask.java:260)

at scala.concurrent.forkjoin.ForkJoinPool$WorkQueue.runTask(ForkJoinPool.java:1339)

at scala.concurrent.forkjoin.ForkJoinPool.runWorker(ForkJoinPool.java:1979)

at scala.concurrent.forkjoin.ForkJoinWorkerThread.run(ForkJoinWorkerThread.java:107)

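My dataset is quite large, so I would like to do this in a distributed fashion if possible. Any help would be appreciated.

Progress update:

CSCMatrix is not meant to work in Spark. However, there is RowMatrix, which extends DistributedMatrix. RowMatrix does have a method, computeColumnSummaryStatistics(), that should compute some of the statistics I am looking for. I know MLlib is growing every day, so I will watch for updates, but in the meantime I will try to make an RDD[Vector] to feed the RowMatrix. Note that RowMatrix is experimental and represents a row-oriented distributed matrix with no meaningful row indices.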
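The "Task not serializable" error above can be reproduced without Spark. Spark serializes the closure passed to foreach (with Java serialization by default) before shipping it to the executors, and that closure captures the builder. The sketch below is only an illustration: `PlainBuilder` is a hypothetical stand-in for a mutable, non-serializable builder, not the Breeze class, and `javaSerializable` is a helper invented for this example.

```scala
import java.io.{ByteArrayOutputStream, NotSerializableException, ObjectOutputStream}
import scala.collection.mutable.ArrayBuffer

object SerializationCheck {
  // Hypothetical stand-in for a driver-side matrix builder:
  // mutable, and deliberately NOT marked Serializable.
  class PlainBuilder {
    val entries = ArrayBuffer.empty[(Int, Int, Int)]
    def add(row: Int, col: Int, value: Int): Unit = entries += ((row, col, value))
  }

  // Returns true when Java serialization accepts the object,
  // false when it throws NotSerializableException.
  def javaSerializable(obj: AnyRef): Boolean =
    try {
      val out = new ObjectOutputStream(new ByteArrayOutputStream())
      try out.writeObject(obj) finally out.close()
      true
    } catch {
      case _: NotSerializableException => false
    }
}
```

Even if the builder were serializable, each task would mutate its own deserialized copy, so the builder on the driver would stay empty. That is why the data has to stay in RDD form (or be collected to the driver) instead of being pushed into a local builder from inside foreach.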

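Solution

Starting with a slightly different mapping, byUserHour is now an RDD[(String, (String, Int))]. Since RowMatrix does not preserve the order of the rows, I groupByKey on the row_id. Perhaps in the future I will figure out how to do this with a sparse matrix.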
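The sparse variant left open above could start from something like the following sketch, written against plain Scala collections so that it runs without Spark. It groups the (row_id, column_id, value) triples by row and keeps only the non-zero entries as index and value arrays. `SparseRows` and `toSparseRows` are names made up for this example, and it assumes each (row_id, column_id) pair occurs at most once.

```scala
object SparseRows {
  // Turns (rowId, columnId, value) triples into one sparse row per rowId.
  // Each row is a pair (sorted column indices, values at those indices).
  def toSparseRows(
      triples: Seq[(String, String, Int)],
      columns: Array[String]
  ): Map[String, (Array[Int], Array[Double])] = {
    // Build the lookup once; calling indexOf per record scans the whole array each time.
    val colIndex: Map[String, Int] = columns.zipWithIndex.toMap
    triples
      .groupBy(_._1)
      .map { case (rowId, entries) =>
        // Keep only known columns, convert to (index, value), and sort by index.
        val sorted = entries
          .collect { case (_, col, v) if colIndex.contains(col) => (colIndex(col), v.toDouble) }
          .sortBy(_._1)
        (rowId, (sorted.map(_._1).toArray, sorted.map(_._2).toArray))
      }
  }
}
```

Index and value arrays of this shape are what MLlib's `Vectors.sparse(size, indices, values)` takes, so the same per-row logic could in principle run inside a mapValues after the groupByKey; I have not tested that combination here.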

val byUser = byUserHour.groupByKey // RDD[(String,Iterable[(String,Int)])]

val times = countHour.map(x => x._1.split("\\+")(1)).distinct.collect.sortWith(_ < _) // Array[String]

val broadcastTimes = sc.broadcast(times) // Broadcast[Array[String]]

val userMaps = byUser.mapValues {

x => x.map{

case(time,cnt) => time -> cnt

}.toMap

} // RDD[(String,scala.collection.immutable.Map[String,Int])]

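// u.toDouble assumes every user id is a numeric string; the id becomes column 0, followed by one count per time slot.

val rows = userMaps.map {

case(u,ut) => (u.toDouble +: broadcastTimes.value.map(ut.getOrElse(_,0).toDouble))} // RDD[Array[Double]]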

import org.apache.spark.mllib.linalg.{Vector,Vectors}

val rowVec = rows.map(x => Vectors.dense(x)) // RDD[org.apache.spark.mllib.linalg.Vector]

import org.apache.spark.mllib.linalg.distributed._

val countMatrix = new RowMatrix(rowVec)

val stats = countMatrix.computeColumnSummaryStatistics()

val meanvec = stats.mean
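For checking the result on a small sample, the per-column statistics can also be computed locally. The sketch below uses plain Scala collections and assumes the sample fits in memory and that all rows have the same length. `ColumnStats` and its methods are names made up for this example. It also includes a median, which, as far as I know, the summary returned by computeColumnSummaryStatistics() does not provide.

```scala
object ColumnStats {
  // Mean of every column; all rows are assumed to have the same length.
  def columnMeans(rows: Seq[Array[Double]]): Array[Double] = {
    require(rows.nonEmpty, "need at least one row")
    // Sum the rows element-wise, then divide each column sum by the row count.
    val sums = rows.reduce((a, b) => a.zip(b).map { case (x, y) => x + y })
    sums.map(_ / rows.size)
  }

  // Median of every column, found by sorting each column locally.
  def columnMedians(rows: Seq[Array[Double]]): Array[Double] = {
    require(rows.nonEmpty, "need at least one row")
    val n = rows.size
    rows.head.indices.map { j =>
      val col = rows.map(_(j)).sorted
      // Odd count: middle element. Even count: average of the two middle elements.
      if (n % 2 == 1) col(n / 2) else (col(n / 2 - 1) + col(n / 2)) / 2.0
    }.toArray
  }
}
```

Keep in mind that in the rows built above, column 0 holds the user id, so its mean and median carry no meaning and should be ignored or dropped.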

共收录Twitter的14款开源软件,第1页Twitter的Emoji表情 Tw...

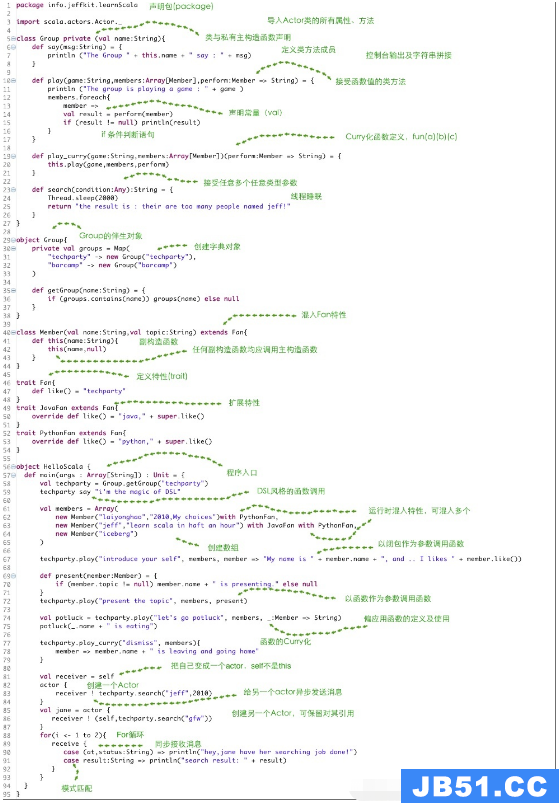

共收录Twitter的14款开源软件,第1页Twitter的Emoji表情 Tw... 本篇内容主要讲解“Scala怎么使用”,感兴趣的朋友不妨来看看...

本篇内容主要讲解“Scala怎么使用”,感兴趣的朋友不妨来看看...