Setting up a Hadoop pseudo-distributed (single-node) cluster

1. Install JDK 1.7 or later

2. Install Hadoop 2.8.5

3. Edit /etc/profile and add:

JAVA_HOME=/opt/module/jdk1.8.0_221

HADOOP_HOME=/opt/module/hadoop-2.8.5

PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/sbin:$HADOOP_HOME/bin

export JAVA_HOME HADOOP_HOME PATH

# Apply the changes to the current shell

source /etc/profile
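
Before moving on, it can help to confirm that both variables really point at existing directories. The following is a minimal sketch, not part of the original steps; the function name `check_env` is made up for illustration.

```shell
# Report whether each variable from step 3 points at an existing directory.
# Returns non-zero if either one is unset or wrong.
check_env() {
    status=0
    for name in JAVA_HOME HADOOP_HOME; do
        # Indirect lookup: read the variable whose name is stored in $name.
        eval "value=\${$name:-}"
        if [ -d "$value" ]; then
            echo "$name=$value ok"
        else
            echo "$name is unset or not a directory" >&2
            status=1
        fi
    done
    return $status
}
```

After `source /etc/profile`, running `check_env && java -version && hadoop version` should print both versions if the PATH entries are also correct.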

4. Configure the hostname

vi /etc/hosts

192.168.228.128 bigdata

192.168.228.129 bigdata02

192.168.228.130 bigdata03

vi /etc/sysconfig/network

HOSTNAME=bigdata

vi /etc/hostname

bigdata
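
A quick way to check the mapping is to look a name up in the hosts file. This is a small sketch under the assumption that the file uses the plain `IP name [alias...]` layout shown above; `lookup_host` is a hypothetical helper name.

```shell
# Print the IP that a hosts-format file maps to the given hostname.
# Comment lines are skipped; the first match wins.
lookup_host() {
    hosts_file=$1
    wanted=$2
    awk -v name="$wanted" '
        /^[[:space:]]*#/ { next }
        { for (i = 2; i <= NF; i++) if ($i == name) { print $1; exit } }
    ' "$hosts_file"
}
```

For example, `lookup_host /etc/hosts bigdata02` should print the address entered for that node. `getent hosts bigdata02` asks the system resolver the same question.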

5. Disable the firewall

# Stop the firewall:

systemctl stop firewalld.service

# Keep the firewall from starting at boot:

systemctl disable firewalld.service

# Check the firewall status:

systemctl status firewalld.service

# Permanently disable SELinux:

vi /etc/selinux/config   # change SELINUX=enforcing to SELINUX=disabled

# Or:

sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

# Temporarily switch SELinux to permissive mode (until the next reboot):

setenforce 0
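
The SELinux edit can be wrapped so that it only touches the active setting and leaves a backup behind. This is a sketch, assuming GNU or BSD `sed`; the function name `disable_selinux_in` is invented here.

```shell
# Set SELINUX=disabled in the given config file, changing only the
# uncommented SELINUX= line. The original is kept as <file>.bak.
disable_selinux_in() {
    config_file=$1
    sed -i.bak 's/^SELINUX=.*/SELINUX=disabled/' "$config_file"
}
```

Run as root, `disable_selinux_in /etc/selinux/config` makes the change, and `getenforce` shows the current mode. Pass the real file rather than the `/etc/sysconfig/selinux` symlink, because an in-place `sed` replaces a symlink with a regular file.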

6. Configure a static IP

[root@bigdata hadoop]# cat /etc/sysconfig/network-scripts/ifcfg-ens33

TYPE="Ethernet"

PROXY_METHOD="none"

BROWSER_ONLY="no"

# Use a statically assigned IP

BOOTPROTO="static"

DEFROUTE="yes"

IPV4_FAILURE_FATAL="no"

IPV6INIT="yes"

IPV6_AUTOCONF="yes"

IPV6_DEFROUTE="yes"

IPV6_FAILURE_FATAL="no"

IPV6_ADDR_GEN_MODE="stable-privacy"

NAME="ens33"

#UUID="b0c93b25-6ab9-44a6-8f42-825e018cd065"

DEVICE="ens33"

# yes brings the interface up at boot

ONBOOT="yes"

# The following lines were added manually

IPADDR0=192.168.228.128

PREFIX0=24

GATEWAY0=192.168.228.1

DNS1=8.8.8.8

DNS2=8.8.4.4
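
A typo in a key name in this file (for example in the PREFIX0 key) is easy to miss, so reading a few values back before restarting the network can save a lockout. The helper below is a sketch; `ifcfg_get` is a hypothetical name, and it assumes simple `KEY=value` lines without `=` inside the value.

```shell
# Print the value of KEY from an ifcfg-style file, with quotes removed.
# Usage: ifcfg_get <file> <KEY>
ifcfg_get() {
    awk -F= -v k="$2" '$1 == k { v = $2; gsub(/"/, "", v); print v }' "$1"
}
```

For instance, `ifcfg_get /etc/sysconfig/network-scripts/ifcfg-ens33 PREFIX0` should print 24; an empty result means the key is missing or misspelled. On a CentOS 7 style system, `systemctl restart network` followed by `ip addr show ens33` then applies and shows the address.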

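7. Set up passwordless SSH trust (with multiple servers, run this on each one)

ssh-keygen -t rsa   # then press Enter three times to accept the defaults

# For example: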

ssh-copy-id bigdata

#ssh-copy-id bigdata02

#ssh-copy-id bigdata03
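
Whether the trust actually works can be tested without typing anything, since `BatchMode=yes` makes `ssh` fail instead of prompting for a password. A minimal sketch (the function name `check_ssh_trust` is an assumption for illustration):

```shell
# Return 0 only if every listed host accepts key-based login without a prompt.
check_ssh_trust() {
    failed=0
    for host in "$@"; do
        if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true; then
            echo "$host: ok"
        else
            echo "$host: key login failed" >&2
            failed=1
        fi
    done
    return $failed
}
```

On the single-node setup, `check_ssh_trust bigdata` is enough; later, with three servers, pass all three names on each machine.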

8. Create the itstar user and the directories

adduser itstar

passwd itstar

mkdir -p /opt/module/hadoop-2.8.5/data/tmp

mkdir /opt/module/hadoop-2.8.5/logs

Give the itstar user root privileges:

vi /etc/sudoers, find the line root ALL=(ALL) ALL (around line 92), and add a copy of it for itstar below:

itstar ALL=(ALL) ALL

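9. Configure Hadoop. The files are in <Hadoop installation directory>/etc/hadoop; add the following content inside the <configuration> element of each file

Cluster plan: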

--------+-------------------+-------------+-------------
        | bigdata           | bigdata02   | bigdata03
--------+-------------------+-------------+-------------
HDFS    | NameNode          |             |
        | SecondaryNameNode | DataNode    | DataNode
        | DataNode          |             |
--------+-------------------+-------------+-------------
YARN    | ResourceManager   |             |
        | NodeManager       | NodeManager | NodeManager
--------+-------------------+-------------+-------------

1) core-site.xml

<property>

<name>fs.defaultFS</name>

<value>hdfs://bigdata:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/module/hadoop-2.8.5/data/tmp</value>

</property>

Note: create the /opt/module/hadoop-2.8.5/data/tmp directory manually if it does not exist.

Here bigdata is the hostname; it has the same meaning in the files below.
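
To show where the properties sit in the finished file, here is a sketch that writes a complete core-site.xml, with the two properties wrapped in the `<configuration>` root element that Hadoop config files use. The function name `write_core_site` and its argument order are made up for illustration, and it overwrites any existing file.

```shell
# Write a complete core-site.xml into the given directory.
# Arguments: <conf dir> <NameNode hostname> <hadoop.tmp.dir>
write_core_site() {
    conf_dir=$1
    namenode_host=$2
    tmp_dir=$3
    cat > "$conf_dir/core-site.xml" <<EOF
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://${namenode_host}:9000</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>${tmp_dir}</value>
  </property>
</configuration>
EOF
}
```

With the values from this guide the call would be `write_core_site /opt/module/hadoop-2.8.5/etc/hadoop bigdata /opt/module/hadoop-2.8.5/data/tmp`. The other three files follow the same layout with their own properties.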

2) hdfs-site.xml

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>bigdata:50090</value>

</property>

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

3) yarn-site.xml

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>bigdata</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property>

4) mapred-site.xml

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>bigdata:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>bigdata:19888</value>

</property>

5) Append the JAVA_HOME setting to the end of hadoop-env.sh, yarn-env.sh, and mapred-env.sh

export JAVA_HOME=/opt/module/jdk1.8.0_221
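
Appending by hand three times invites duplicates when the step is repeated. The sketch below appends the line only where it is missing; `set_java_home` is a hypothetical helper name, not something shipped with Hadoop.

```shell
# Append the JAVA_HOME export to each Hadoop env script, skipping files
# that already contain exactly that line, so reruns do not duplicate it.
# Arguments: <conf dir> <JDK directory>
set_java_home() {
    conf_dir=$1
    java_home=$2
    line="export JAVA_HOME=$java_home"
    for f in hadoop-env.sh yarn-env.sh mapred-env.sh; do
        grep -qxF "$line" "$conf_dir/$f" 2>/dev/null || echo "$line" >> "$conf_dir/$f"
    done
}
```

Here that would be `set_java_home /opt/module/hadoop-2.8.5/etc/hadoop /opt/module/jdk1.8.0_221`. Because the line lands at the end of each script, it takes precedence over any earlier JAVA_HOME assignment in the same file.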

6) Edit slaves and replace localhost with the corresponding hostname

[root@bigdata hadoop]# cat slaves

bigdata

10. Format the NameNode (this initializes the Hadoop runtime data directory)

hdfs namenode -format

11. Start Hadoop

start-all.sh

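Note: if a mistake in the configuration files above causes problems, repeat both steps 10 and 11 (empty the manually created directories before formatting again).

Check the running processes with jps (there should be 5, not counting Jps itself):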

[root@bigdata hadoop]# jps

29504 ResourceManager

29348 SecondaryNameNode

29609 NodeManager

29210 DataNode

29115 NameNode

29964 Jps
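
Counting processes by eye gets tedious after a few restarts. The following sketch reads `jps` output and names whichever of the five daemons is absent; the function name `missing_daemons` is invented for this example.

```shell
# Read jps output on stdin and print every expected daemon that is absent.
# Exit status is 0 when all five are present.
missing_daemons() {
    jps_output=$(cat)
    missing=0
    for daemon in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
        # Compare the second column exactly, so NameNode does not
        # match SecondaryNameNode.
        if ! printf '%s\n' "$jps_output" | awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            echo "$daemon"
            missing=1
        fi
    done
    return $missing
}
```

Typical use is `jps | missing_daemons`. When a name is printed, the matching log file under /opt/module/hadoop-2.8.5/logs is the first place to look.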

To shut down, run:

stop-all.sh

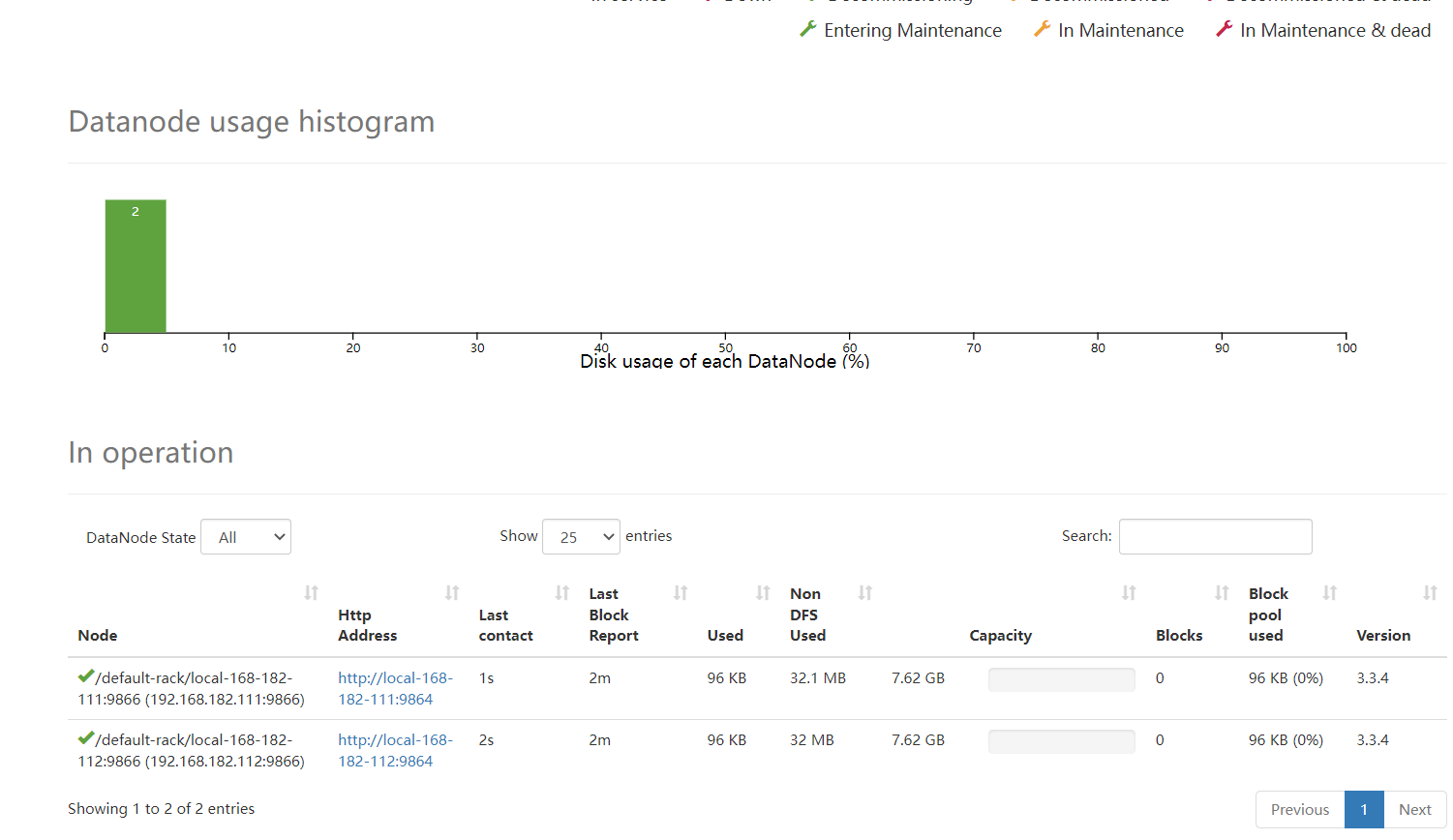

12. Verify the result

Open http://IP:50070 in a browser; in this setup the IP is 192.168.228.128.

# Leave safe mode if the NameNode is still in it

hdfs dfsadmin -safemode leave

hadoop fs -put slaves /

This uploads the slaves file to the / directory.

To view the uploaded file, go to Utilities > Browse the file system in the web UI.

**Converting the pseudo-distributed setup (one server) into a fully distributed cluster (at least 3 servers)**

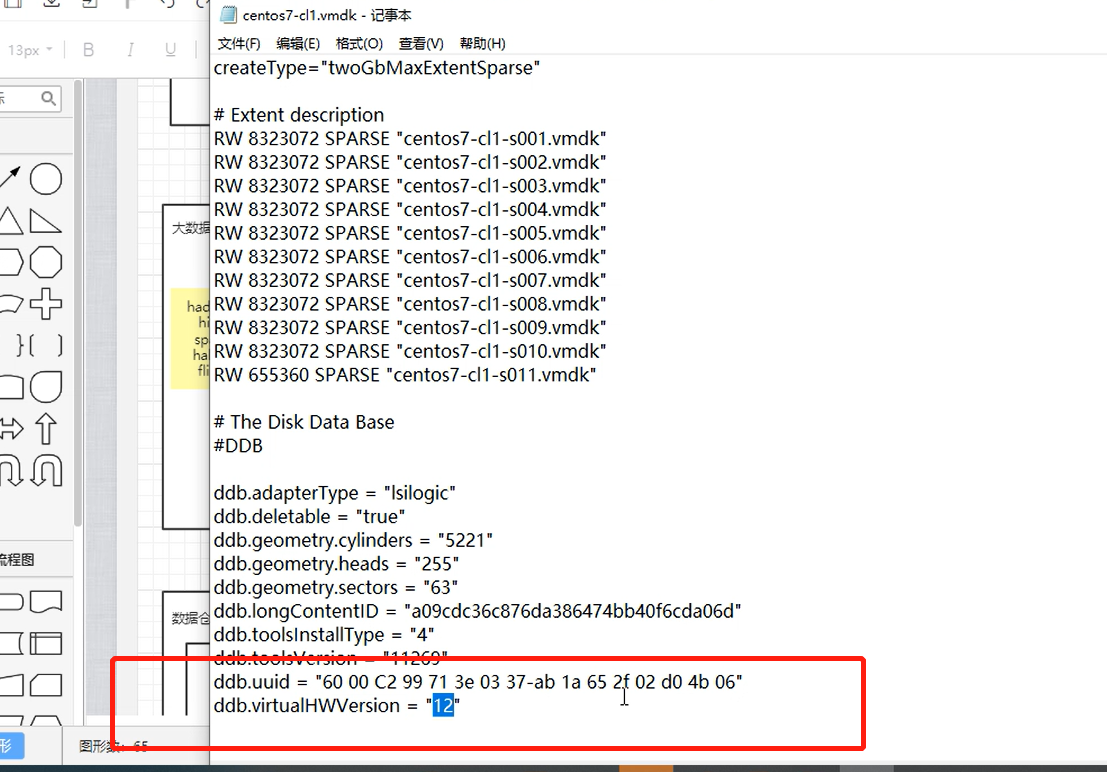

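First clone bigdata to create bigdata02 and bigdata03, then give each clone its own hostname and static IP as in steps 4 and 6.

1. Make sure SSH trust is configured among all three servers

2. Change the contents of slaves to: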

bigdata

bigdata02

bigdata03

3. Clear the old data and logs (run from the Hadoop installation directory)

rm -rf data/*

rm -rf logs/*
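
Since the clones carry a copy of the old data directory, the cleanup is needed on all three machines. The sketch below prints the command for each host by default and only runs it over SSH when `DRY_RUN=0` is set; `clean_nodes`, `DRY_RUN`, and `HADOOP_DIR` are names assumed for this example.

```shell
# Print (DRY_RUN=1, the default) or run the cleanup on each listed host.
# HADOOP_DIR defaults to the install path used in this guide.
clean_nodes() {
    hadoop_dir=${HADOOP_DIR:-/opt/module/hadoop-2.8.5}
    for host in "$@"; do
        cmd="rm -rf $hadoop_dir/data/* $hadoop_dir/logs/*"
        if [ "${DRY_RUN:-1}" = 1 ]; then
            echo "ssh $host $cmd"
        else
            # The glob is expanded by the remote shell, not locally.
            ssh "$host" "$cmd"
        fi
    done
}
```

Running `clean_nodes bigdata bigdata02 bigdata03` first shows what would be deleted; `DRY_RUN=0 clean_nodes bigdata bigdata02 bigdata03` performs it. Defaulting to a dry run is a deliberate choice here because the command is destructive.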

4. Format the NameNode

hdfs namenode -format

5. Start the cluster; run this only on node 1 (bigdata)

start-all.sh

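Note: if the NameNode and the ResourceManager are not on the same server, run the start commands separately: start-dfs.sh on the NameNode's server and start-yarn.sh on the ResourceManager's server.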
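
The rule in the note can be written down as a tiny helper that only prints which script to run on which host. It is a sketch and does not start anything itself; `start_plan` is a hypothetical name.

```shell
# Print which start script to run on which host.
# Arguments: <NameNode host> <ResourceManager host>
start_plan() {
    nn_host=$1
    rm_host=$2
    if [ "$nn_host" = "$rm_host" ]; then
        echo "$nn_host: start-all.sh"
    else
        echo "$nn_host: start-dfs.sh"
        echo "$rm_host: start-yarn.sh"
    fi
}
```

For the cluster plan in this guide, `start_plan bigdata bigdata` prints a single start-all.sh line, which matches step 5. If the ResourceManager were moved to bigdata02, `start_plan bigdata bigdata02` would list the two scripts on their respective hosts.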