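Problem description

I am running into a problem with opensearch. I use the aiohttp library to make concurrent requests.

Context: the application is supposed to connect to a Dockerized application on a specific port in order to retrieve the opensearch URL of the hosted application. When my application tries to connect to the host, it returns a 507 Insufficient Storage error.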

2020-08-12 09:40:11,550 - OpenSearchUrlTokenizer - DEBUG - Retrieving opensearch descriptor at url http://localhost:10000/api/mediacenter/v1.0/opensearch_descriptor/

aiohttp.client_exceptions.ClientConnectorError: Cannot connect to host localhost:10000 ssl:None [Cannot assign requested address]

2020-08-12 09:40:11,555 - werkzeug - INFO - 172.18.0.1 - - [12/Aug/2020 09:40:11] "GET /opensearch/search?q=e HTTP/1.1" 507 -

It resolves the correct endpoint, but it returns this error and I cannot access the requested resource.

Things I tried:

Exposing port 10000 on Docker

Limiting concurrent requests
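For reference, one common way to limit concurrent requests in asyncio code is a semaphore around each call. The sketch below is only an illustration under stated assumptions: `bounded_gather`, `fake_fetch` and `demo` are hypothetical names that do not appear in the original post, and `fake_fetch` stands in for a real aiohttp request so the example runs without a network.

```python
import asyncio


async def bounded_gather(coros, limit=5):
    """Run coroutines concurrently, with at most `limit` in flight at once."""
    semaphore = asyncio.Semaphore(limit)

    async def run(coro):
        # Each coroutine waits here until one of the `limit` slots is free.
        async with semaphore:
            return await coro

    return await asyncio.gather(*(run(c) for c in coros))


async def fake_fetch(i, state):
    # Hypothetical stand-in for an HTTP request; it records how many
    # calls are active at the same time so the limit can be observed.
    state["active"] += 1
    state["peak"] = max(state["peak"], state["active"])
    await asyncio.sleep(0.01)
    state["active"] -= 1
    return i


def demo(n=20, limit=5):
    state = {"active": 0, "peak": 0}
    coros = [fake_fetch(i, state) for i in range(n)]
    results = asyncio.run(bounded_gather(coros, limit))
    return results, state["peak"]


if __name__ == "__main__":
    results, peak = demo()
    print(len(results), "requests done, peak concurrency:", peak)
```

With aiohttp itself, the connection pool can also be capped through the `limit` argument of `aiohttp.TCPConnector`, which is passed to `ClientSession` via `connector=`. Whether either approach matches what was actually tried here is not stated in the post.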

Here is the class I use:

    class OpenSearchClient:
        """
        This class contains the utilities required in order to search on an opensearch API
        """

        @inject
        def __init__(self, url_tokenizer: OpenSearchUrlTokenizer):
            self.url_tokenizer = url_tokenizer
            self.session = aiohttp.ClientSession(loop=asyncio.get_event_loop())

        async def get_descriptor_url(self, url):
            """
            Return the url template located in the descriptor. It returns the first URL whatever
            the url type is, without replacing any information
            :param url: a String containing
            :return:
            """
            logger.debug("Retrieving opensearch descriptor at url %s", url)
            async with self.session.get(url) as resp:
                xml = await resp.read()
                logger.debug("Retrieved opensearch descriptor %s", xml)
                root = etree.fromstring(xml)
                nodes = root.xpath('./x:Url', namespaces={
                    'x': 'http://a9.com/-/spec/opensearch/1.1/'
                })
                if nodes:
                    n = nodes[0].attrib
                    template = n['template']
                    io = n['indexOffset'] if 'indexOffset' in n else 0
                    po = n['pageOffset'] if 'pageOffset' in n else 0
                    return (template, io, po)

        async def search(self, url):
            """
            Launch a search to a given url, parse the feed and returns the results. The provided url
            must already have any template parameter replaced
            :param url: A string containing the url to query
            :return: a SearchResult list
            """
            headers = {'Accept': 'application/atom+xml; application/rss+xml'}
            logger.debug("Querying open search engine at url %s", url)
            async with self.session.get(url, headers=headers) as resp:
                d = feedparser.parse(await resp.read())
                results = list(map(lambda x: SearchResult(url=self.url_tokenizer.resolve_url(url, x.link), title=x.title, description=x.summary), d.entries))
                return results
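To make the descriptor step easier to follow in isolation, here is a minimal sketch of the same extraction done with the standard library's `xml.etree.ElementTree` instead of lxml. The sample descriptor, the `example.com` URL and the function name `first_url_template` are assumptions made up for illustration; only the namespace and the attribute names come from the class above.

```python
import xml.etree.ElementTree as ET

# Namespace taken from the class above.
OPENSEARCH_NS = {"x": "http://a9.com/-/spec/opensearch/1.1/"}

# Hypothetical descriptor, invented for this example.
SAMPLE_DESCRIPTOR = b"""<?xml version="1.0" encoding="UTF-8"?>
<OpenSearchDescription xmlns="http://a9.com/-/spec/opensearch/1.1/">
  <ShortName>Example</ShortName>
  <Url type="application/atom+xml"
       template="http://example.com/search?q={searchTerms}&amp;start={startIndex?}"
       indexOffset="1"/>
</OpenSearchDescription>"""


def first_url_template(xml_bytes):
    """Return (template, indexOffset, pageOffset) of the first Url element, or None."""
    root = ET.fromstring(xml_bytes)
    node = root.find("./x:Url", OPENSEARCH_NS)
    if node is None:
        return None
    attrs = node.attrib
    # Same fallback of 0 as in the class above; attribute values stay strings.
    return attrs["template"], attrs.get("indexOffset", 0), attrs.get("pageOffset", 0)


if __name__ == "__main__":
    print(first_url_template(SAMPLE_DESCRIPTOR))
```

Note that values read from the XML are strings while the fallback is the integer 0, as in the class above. The OpenSearch 1.1 specification lists 1 as the default for both `indexOffset` and `pageOffset`, so the fallback may be worth a second look, although that is unrelated to the connection error.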

Solution

No working solution to this problem has been found yet.

如果你已经找到好的解决方法,欢迎将解决方案带上本链接一起发送给小编。

小编邮箱:dio#foxmail.com (将#修改为@)

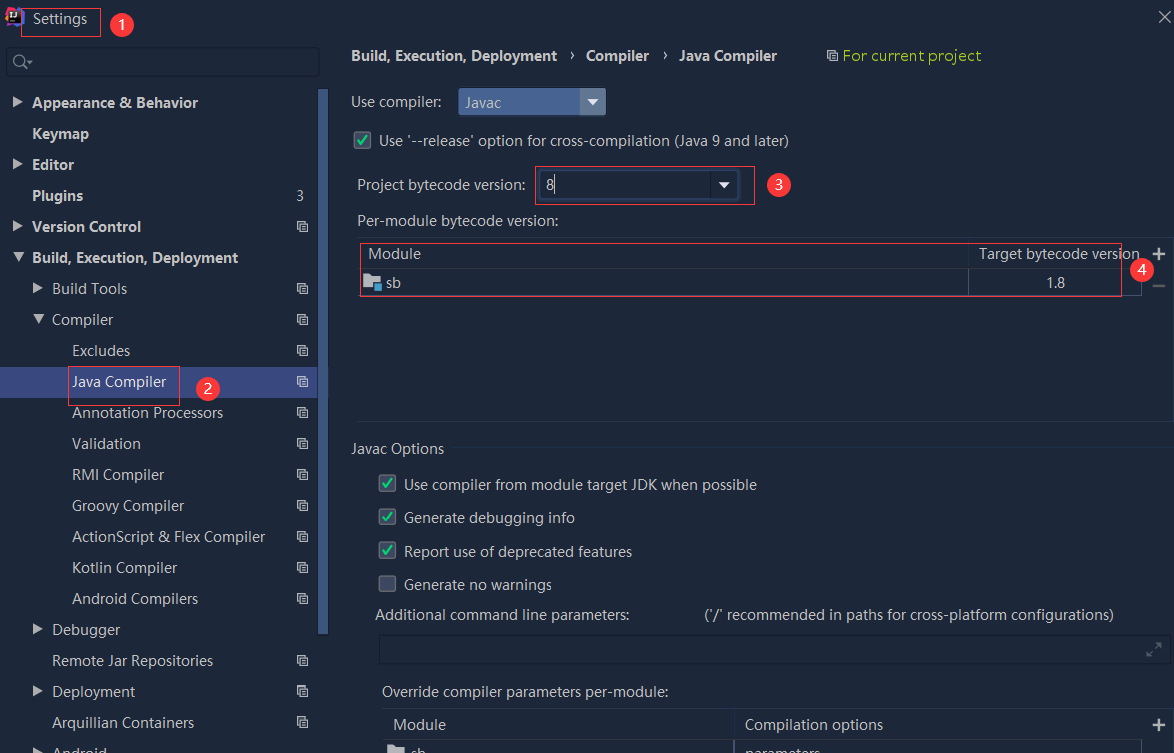

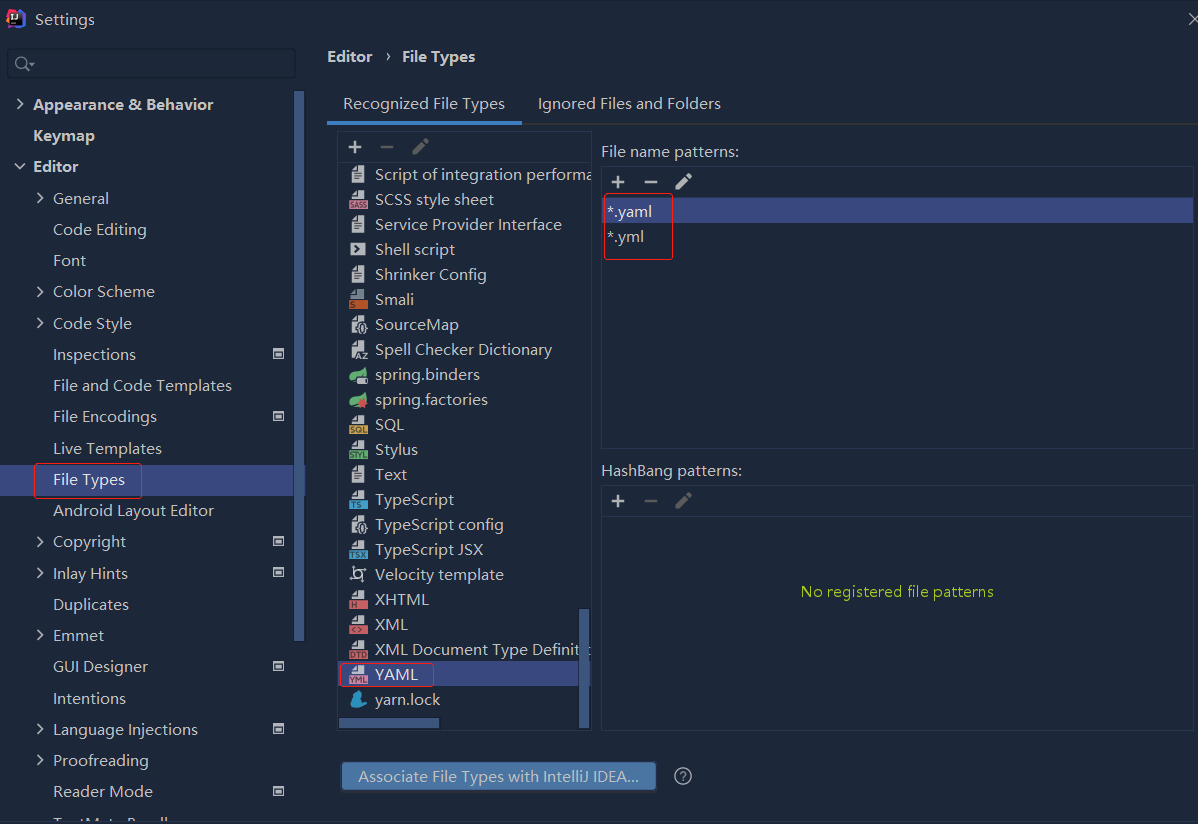

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

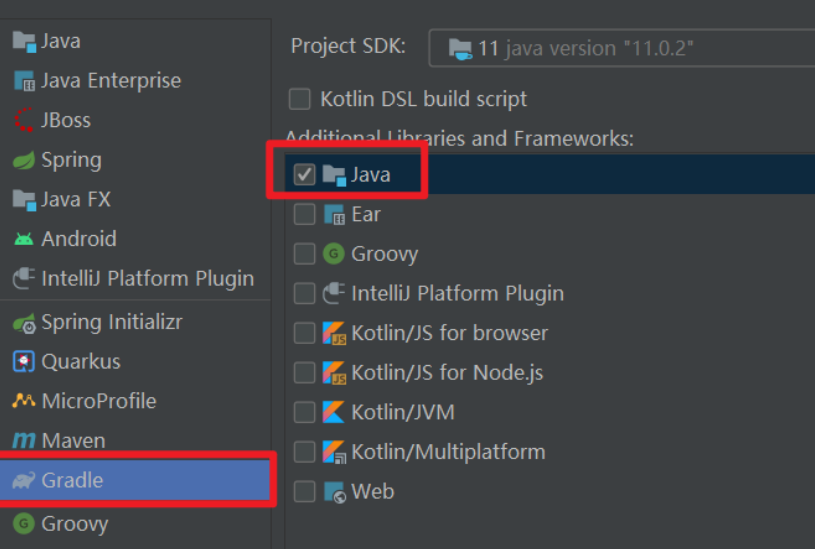

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...