Problem description

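I have been trying to develop a custom Spatial RNN layer in Keras. It is meant to be a Conv2D layer with a custom kernel of (1 * 3) * [1, 0], and it should learn the relationship between each pixel and the pixel to its left. Here is the code: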

    import numpy as np
    import tensorflow as tf
    from tensorflow.keras import backend as K


    class SpatialRNN2D_Left(tf.keras.layers.Layer):

        def __init__(self, rnn_radius, direction='all'):
            super().__init__()
            self.padding = "same"
            self.kernel_switch_left = np.array([[1, 0]])

        def build(self, input_shape):
            """
            Build the class based on the input shape and the direction parameter.
            The required kernels are built as well.

            Arguments:
                input_shape: 4D tensor with shape: `1 + (rows, cols, channels)`.

            Raises:
                Nothing at the moment. But shall be added.
            """
            self.num_channel = int(input_shape[-1])
            kernel_switch_left = self.kernel_switch_left
            # Expand the 2D switch to 4D: (height, width, in_channels, out_channels).
            kernel_switch_left = np.repeat(kernel_switch_left[:, :, np.newaxis], int(self.num_channel), axis=-1)
            kernel_switch_left = np.repeat(kernel_switch_left[:, :, :, np.newaxis], int(self.num_channel), axis=-1)
            kernel_switch_left = K.constant(kernel_switch_left, dtype=tf.float32)
            self.kernel_left = self.add_weight(
                shape=[kernel_switch_left.shape[0], kernel_switch_left.shape[1], self.num_channel, self.num_channel],
                initializer=tf.keras.initializers.Ones(),
                trainable=True) * self.hex_filter_left
            super().build(input_shape)

        @tf.function
        def call(self, input_tensor, **kwargs):
            """
            Calls the tensor for forward pass operation.

            Arguments:
                input_tensor: Since the layer only works with a batch of 1 at the moment,
                    this should be a 4D tensor with shape: `1 + (rows, cols, channels)`.

            Returns:
                4D tensor representative of the forward pass of the Spatial RNN layer with
                shape: `batch_shape=1 + (rows, cols, filters)`.

            Raises:
                Nothing at the moment. But shall be added.
            """
            input_tensor = K.cast(tf.identity(input_tensor), tf.float32)
            res_sum = tf.identity(input_tensor)
            tensor = tf.identity(input_tensor)
            conv = tf.keras.backend.conv2d(x=tensor, kernel=self.kernel_left, strides=(1, 1), padding=self.padding)
            tensor = tf.nn.relu(conv)
            res_sum += tensor
            return res_sum

        def compute_output_shape(self, input_shape):
            """
            Compute output shape.

            Arguments:
                input_shape: 4D tensor with shape: `1 + (rows, cols, channels)`.

            Returns:
                4D tensor with shape: `batch_shape=1 + (rows, cols, channels)`.
            """
            return input_shape[0], input_shape[1], input_shape[2], self.num_channel
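To make the intended behaviour concrete, here is a small NumPy sketch of a left-looking 1x3 filter applied to a single image row. It is only an illustration, not the layer above: the helper name `left_masked_row_filter` is made up, and the mask `[1, 1, 0]` (keep the left neighbour and the centre pixel, drop the right neighbour) is an assumption about what the switch is meant to do, since the post itself shows `[1, 0]`.

```python
import numpy as np


def left_masked_row_filter(row, weights, mask):
    """Apply a 1x3 cross-correlation with zero 'same' padding to one image row.

    Hypothetical helper for illustration only.
    """
    # Zeroing entries of the kernel removes the matching neighbour from the sum.
    kernel = np.asarray(weights, dtype=float) * np.asarray(mask, dtype=float)
    padded = np.pad(np.asarray(row, dtype=float), 1)  # one zero on each side
    return np.array([padded[i:i + 3] @ kernel for i in range(len(row))])


row = [0, 2, 4, 6, 8]
# Assumed mask: index 0 is the left neighbour, 1 the centre, 2 the right neighbour.
out = left_masked_row_filter(row, weights=[1, 1, 1], mask=[1, 1, 0])
print(out)  # each output is (left neighbour + centre): 0, 2, 6, 10, 14
```

With all weights equal to one, every output pixel is the sum of the pixel itself and the pixel to its left, which is the kind of relationship the layer is supposed to learn weights for.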

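The layer works fine for the forward pass (prediction), but when I try to train the model I get "ValueError: No gradients provided for any variable: ['spatial_rn_n2d__left/Variable:0']." Here is the training code: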

    # Two 5x5 images with two channels each, shape (1, 5, 5, 2)
    image1 = np.array(range(0, 50, 2)).reshape([1, 5, 5, 1])
    image1 = np.concatenate((image1, image1 + 50), axis=-1)
    image2 = np.array(range(100, 150, 2)).reshape([1, 5, 5, 1])
    image2 = np.concatenate((image2, image2 + 50), axis=-1)

    # Setting up label arrays & dataset
    label1_ch1 = np.array([[0, 52, 158, 172, 186], [10, 82, 228, 242, 256], [20, 112, 298, 312, 326], [30, 142, 368, 382, 396], [40, 172, 438, 452, 466]]).reshape((1, 5, 5, 1))
    label1_ch2 = np.array([[50, 102, 208, 222, 236], [60, 132, 278, 292, 306], [70, 162, 348, 362, 376], [80, 192, 418, 432, 446], [90, 222, 488, 502, 516]]).reshape((1, 5, 5, 1))
    label1 = np.concatenate((label1_ch1, label1_ch2), axis=-1)
    label2_ch1 = np.array([[100, 352, 858, 872, 886], [110, 382, 928, 942, 956], [120, 412, 998, 1012, 1026], [130, 442, 1068, 1082, 1096], [140, 472, 1138, 1152, 1166]]).reshape((1, 5, 5, 1))
    label2_ch2 = np.array([[150, 402, 908, 922, 936], [160, 432, 978, 992, 1006], [170, 462, 1048, 1062, 1076], [180, 492, 1118, 1132, 1146], [190, 522, 1188, 1202, 1216]]).reshape((1, 5, 5, 1))
    label2 = np.concatenate((label2_ch1, label2_ch2), axis=-1)
    label_dataset = np.concatenate((label1, label2), axis=0)

    tf.keras.backend.clear_session()
    x_in = tf.keras.layers.Input((5, 5, image1.shape[-1]))
    y_out = SpatialRNN2D_Left(rnn_radius=2, direction='left')(x_in)
    model = tf.keras.Model(inputs=x_in, outputs=y_out)
    model.summary()


    def test_train():
        image_dataset = np.concatenate((image1, image2), axis=0)
        model.compile(optimizer='Adam', loss='binary_crossentropy')
        model.fit(x=image_dataset, y=label_dataset, batch_size=1, epochs=10)
        test_img1_output = model.predict(image1)
        # Print each output channel separately
        print(test_img1_output[0, :, :, 0])
        print(test_img1_output[0, :, :, 1])


    if __name__ == '__main__':
        test_forward_pass()
        test_train()

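I would like to know how to fix this error so that I can train the layer in a single-layer model. If I remove * self.hex_filter_left from the build function, it trains fine, but that is not what I want. How can I fix the error? Is there a better way to get the layer I want?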
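One likely explanation for that observation is that a product computed once in build() is an ordinary tensor, so the forward pass in call() never reads the variable itself and the optimizer has nothing to differentiate. The sketch below reproduces that effect outside Keras with `tf.GradientTape`; the names `weight`, `mask`, `frozen_kernel` and `live_kernel` and the mask values are illustrative assumptions, not taken from the post.

```python
import tensorflow as tf

weight = tf.Variable(tf.ones((1, 3, 1, 1)))               # trainable 1x3 kernel
mask = tf.constant([1.0, 1.0, 0.0], shape=(1, 3, 1, 1))   # assumed constant switch
x = tf.ones((1, 1, 5, 1))                                 # one row of five pixels

# Product computed once, before the tape starts recording
# (analogous to multiplying inside build()).
frozen_kernel = weight * mask

with tf.GradientTape() as tape:
    loss = tf.reduce_sum(tf.nn.conv2d(x, frozen_kernel, strides=1, padding="SAME"))
grad_outside = tape.gradient(loss, weight)

with tf.GradientTape() as tape:
    live_kernel = weight * mask  # recomputed while the tape is recording
    loss = tf.reduce_sum(tf.nn.conv2d(x, live_kernel, strides=1, padding="SAME"))
grad_inside = tape.gradient(loss, weight)

print(grad_outside)             # None: the variable was never read under the tape
print(grad_inside is not None)  # True
```

In the second case the gradient exists and is zero at the masked kernel position, which is the behaviour a fixed switch is usually meant to give.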

Below is part of the error log.

    Traceback (most recent call last):
    ValueError: in user code:

        C:\Users\behrooz.bajestani\Anaconda3\envs\tfmd_py3.7_tf2\lib\site-packages\tensorflow\python\keras\engine\training.py:571 train_function  *
            outputs = self.distribute_strategy.run(
        C:\Users\behrooz.bajestani\Anaconda3\envs\tfmd_py3.7_tf2\lib\site-packages\tensorflow\python\distribute\distribute_lib.py:951 run  **
            return self._extended.call_for_each_replica(fn,args=args,kwargs=kwargs)
        C:\Users\behrooz.bajestani\Anaconda3\envs\tfmd_py3.7_tf2\lib\site-packages\tensorflow\python\distribute\distribute_lib.py:2290 call_for_each_replica
            return self._call_for_each_replica(fn,args,kwargs)
        C:\Users\behrooz.bajestani\Anaconda3\envs\tfmd_py3.7_tf2\lib\site-packages\tensorflow\python\distribute\distribute_lib.py:2649 _call_for_each_replica
            return fn(*args,**kwargs)
        C:\Users\behrooz.bajestani\Anaconda3\envs\tfmd_py3.7_tf2\lib\site-packages\tensorflow\python\keras\engine\training.py:541 train_step  **
            self.trainable_variables)
        C:\Users\behrooz.bajestani\Anaconda3\envs\tfmd_py3.7_tf2\lib\site-packages\tensorflow\python\keras\engine\training.py:1804 _minimize
            trainable_variables))
        C:\Users\behrooz.bajestani\Anaconda3\envs\tfmd_py3.7_tf2\lib\site-packages\tensorflow\python\keras\optimizer_v2\optimizer_v2.py:521 _aggregate_gradients
            filtered_grads_and_vars = _filter_grads(grads_and_vars)
        C:\Users\behrooz.bajestani\Anaconda3\envs\tfmd_py3.7_tf2\lib\site-packages\tensorflow\python\keras\optimizer_v2\optimizer_v2.py:1219 _filter_grads
            ([v.name for _,v in grads_and_vars],))

        ValueError: No gradients provided for any variable: ['spatial_rn_n2d__left/Variable:0'].

Thank you for your support and attention.
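For anyone hitting the same error, one direction that may be worth trying is to store the raw variable in build() and apply the constant switch inside call(), so the multiplication is part of every forward pass. The following is a minimal sketch under stated assumptions, not a verified fix for the code in the question: the class name `MaskedLeftConv2D`, the attribute names and the `[1, 1, 0]` mask are all hypothetical, and the mask plays the role that `self.hex_filter_left` appears to play in the post.

```python
import numpy as np
import tensorflow as tf


class MaskedLeftConv2D(tf.keras.layers.Layer):
    """Hypothetical variant: the mask is applied in call(), not in build()."""

    def __init__(self, mask_row=(1.0, 1.0, 0.0), **kwargs):
        super().__init__(**kwargs)
        # Assumed 1x3 switch: keep left neighbour and centre, drop right neighbour.
        self.mask_row = np.asarray(mask_row, dtype=np.float32)

    def build(self, input_shape):
        channels = int(input_shape[-1])
        width = self.mask_row.shape[0]
        # Keep the variable itself as the attribute so Keras tracks and trains it.
        self.kernel = self.add_weight(
            name="kernel",
            shape=(1, width, channels, channels),
            initializer="ones",
            trainable=True,
        )
        # Constant mask, broadcast over the input and output channel axes.
        self.kernel_mask = tf.constant(self.mask_row.reshape(1, width, 1, 1))

    def call(self, inputs):
        x = tf.cast(inputs, tf.float32)
        # Multiplying here keeps the variable on the path from loss to weights.
        masked_kernel = self.kernel * self.kernel_mask
        conv = tf.nn.conv2d(x, masked_kernel, strides=1, padding="SAME")
        return x + tf.nn.relu(conv)


# Quick check that a gradient now reaches the kernel.
layer = MaskedLeftConv2D()
x = tf.ones((1, 5, 5, 2))
with tf.GradientTape() as tape:
    loss = tf.reduce_mean(layer(x))
grads = tape.gradient(loss, layer.trainable_variables)
print([g is not None for g in grads])
```

Because the mask is a constant factor, the masked kernel entries receive a zero gradient and have no effect on the output, while the remaining entries train normally.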

解决方法

暂无找到可以解决该程序问题的有效方法,小编努力寻找整理中!

如果你已经找到好的解决方法,欢迎将解决方案带上本链接一起发送给小编。

小编邮箱:dio#foxmail.com (将#修改为@)

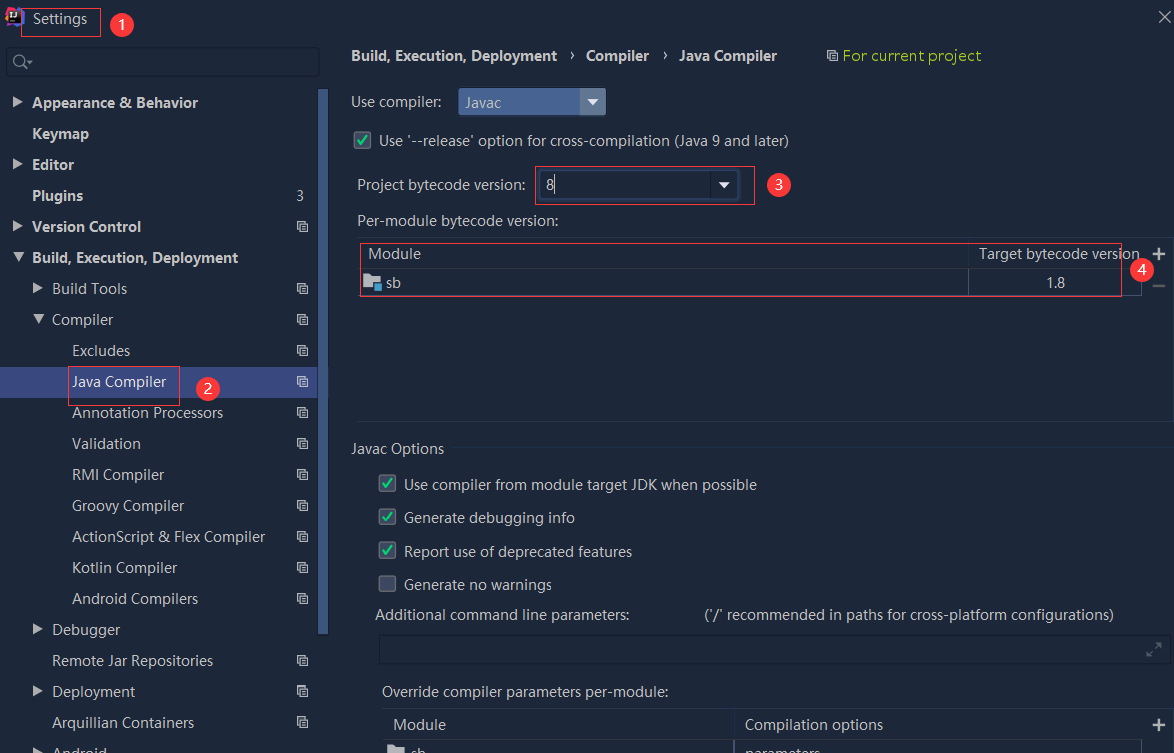

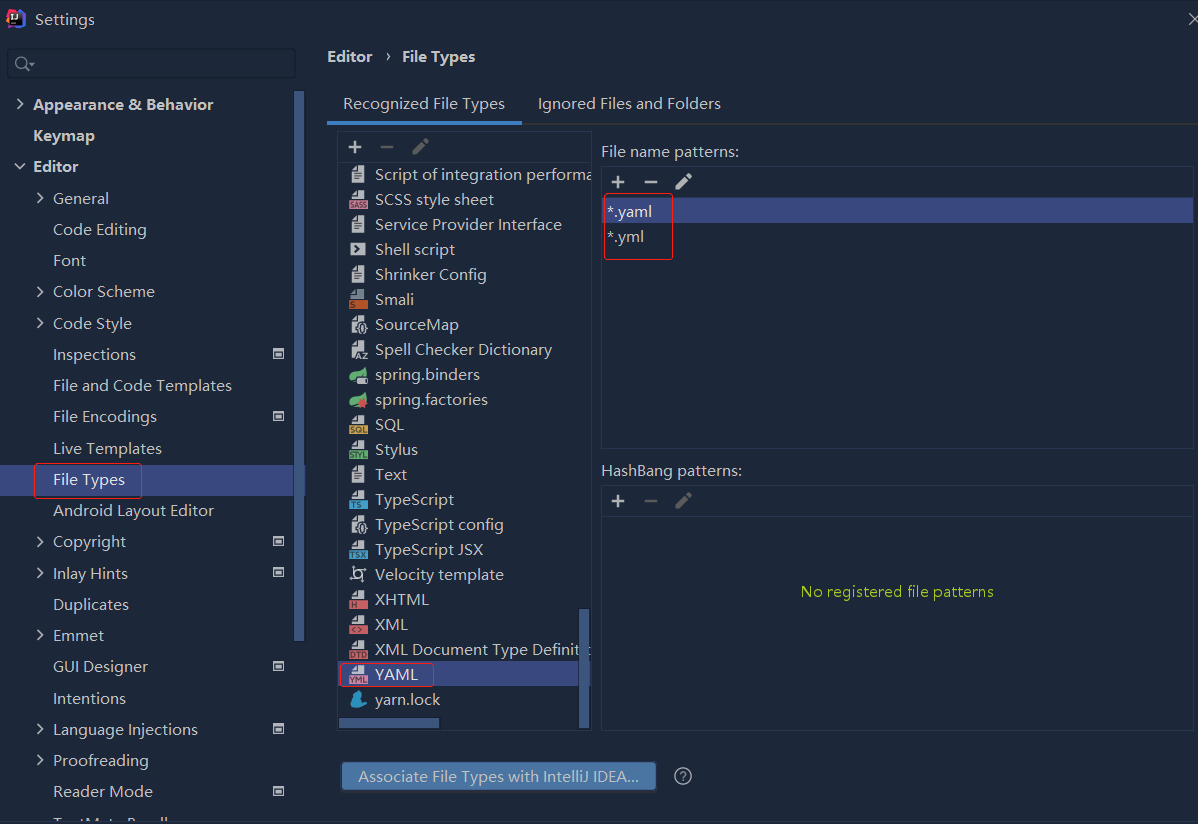

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

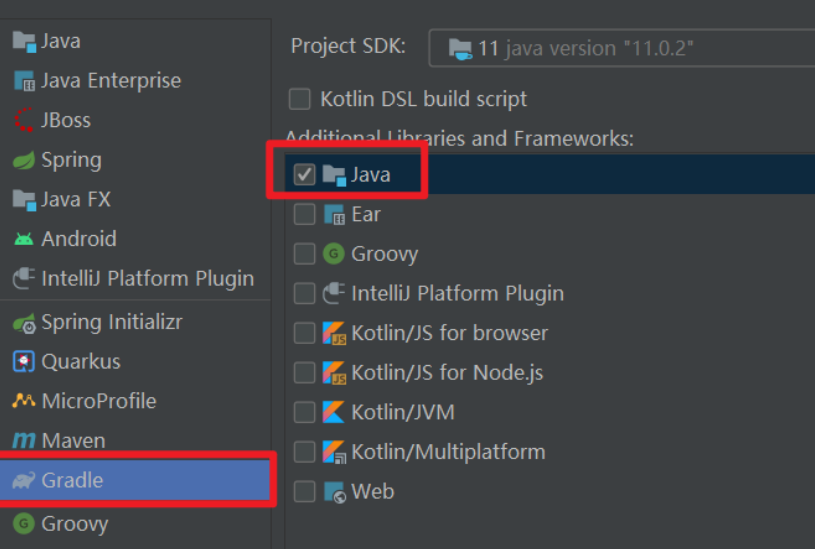

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...