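Problem description

I am using the code below to stitch frames from two videos, and it mostly works. In the stitched result, however, the right-hand image looks somewhat skewed, and I cannot work out why.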

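    import cv2
    import numpy as np

    def detectAndDescribe(self, image):
        # convert to grayscale before detecting features
        gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

        # AKAZE: detect keypoints and compute binary descriptors
        descriptor = cv2.AKAZE_create()
        (kps, features) = descriptor.detectAndCompute(gray, None)

        # convert the KeyPoint objects to an N x 2 float32 array of (x, y)
        kps_np = np.float32([kp.pt for kp in kps])

        # return the coordinates, the descriptors, and the raw keypoints
        return (kps_np, features, kps)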

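    def matchKeypoints(self, kpsA, kpsB, featuresA, featuresB, ratio, reprojThresh):
        # brute-force matcher with Hamming distance for binary AKAZE
        # descriptors (float descriptors such as SIFT need cv2.NORM_L2)
        matcher = cv2.BFMatcher(cv2.NORM_HAMMING)

        # two nearest neighbours in featuresB for each descriptor in featuresA
        rawMatches = matcher.knnMatch(featuresA, featuresB, 2)
        matches = []

        for m in rawMatches:
            # ratio test: keep a match only if it is clearly better than the runner-up
            if len(m) == 2 and m[0].distance < m[1].distance * ratio:
                matches.append((m[0].trainIdx, m[0].queryIdx))

        if len(matches) > 4:
            # queryIdx indexes image A, trainIdx indexes image B
            ptsA = np.float32([kpsA[i] for (_, i) in matches])
            ptsB = np.float32([kpsB[i] for (i, _) in matches])

            # homography that maps points in image A onto image B
            (H, status) = cv2.findHomography(ptsA, ptsB, cv2.RANSAC, reprojThresh)
            return (matches, H, status)

        return None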

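    # Stitching code (body of the stitching method; images, ratio and
    # reprojThresh are assumed to be supplied by the caller)
    (imageB, imageA) = images
    (kps_np_A, featuresA, kpsA) = self.detectAndDescribe(imageA)
    (kps_np_B, featuresB, kpsB) = self.detectAndDescribe(imageB)

    M = self.matchKeypoints(kps_np_A, kps_np_B, featuresA, featuresB, ratio, reprojThresh)
    if M is None:
        return None

    self.cachedH = M[1]

    # warp image A into image B's coordinate frame on a canvas wide enough for both
    result = cv2.warpPerspective(imageA, self.cachedH,
                                 (imageA.shape[1] + imageB.shape[1], imageA.shape[0]))

    # paste image B unchanged into the left part of the canvas
    result[0:imageB.shape[0], 0:imageB.shape[1]] = imageB
    return result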

What causes this odd distortion, and how can it be fixed? I am using AKAZE here, but the same problem occurs with SIFT.

Solution

No working solution to this problem has been found yet.
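As a way to investigate rather than a confirmed fix, it can help to see where the homography sends the four corners of the right-hand image. The sketch below does this with NumPy only. The helper name `project_corners` and the example matrix are hypothetical and not part of the original code; in the stitching code above, `H` would be `self.cachedH`, and `width` and `height` would be `imageA.shape[1]` and `imageA.shape[0]`.

```python
import numpy as np

def project_corners(H, width, height):
    """Map the four corners of a width x height image through homography H.

    Returns a 4 x 2 array ordered top-left, top-right, bottom-right, bottom-left.
    """
    corners = np.array(
        [[0, 0], [width, 0], [width, height], [0, height]], dtype=np.float64
    )
    # append a 1 to each point to get homogeneous coordinates
    homogeneous = np.hstack([corners, np.ones((4, 1))])
    # apply H to every corner (row vectors, hence the transpose)
    projected = homogeneous @ np.asarray(H, dtype=np.float64).T
    # divide by the third coordinate to return to pixel coordinates
    return projected[:, :2] / projected[:, 2:3]

# Made-up homography for illustration: a pure shift of 100 px to the right
H_example = np.array([[1.0, 0.0, 100.0],
                      [0.0, 1.0, 0.0],
                      [0.0, 0.0, 1.0]])
print(project_corners(H_example, 640, 480))
```

If the projected right-hand corners sit at very different heights, or fall outside the canvas of size `(imageA.shape[1] + imageB.shape[1], imageA.shape[0])` passed to `cv2.warpPerspective`, then the estimated homography itself is stretching the image and part of it is being cropped. This only locates the distortion; it does not by itself say how to remove it.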

如果你已经找到好的解决方法,欢迎将解决方案带上本链接一起发送给小编。

小编邮箱:dio#foxmail.com (将#修改为@)

设置时间 控制面板

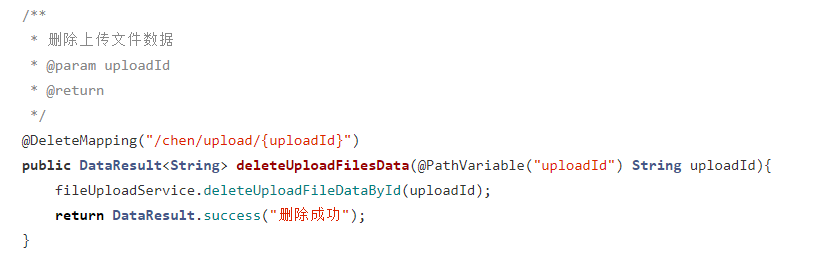

设置时间 控制面板 错误1:Request method ‘DELETE‘ not supported 错误还原:...

错误1:Request method ‘DELETE‘ not supported 错误还原:...