Problem description

I want to scrape the "Services/Products" section from this page: https://www.yellowpages.com/deland-fl/mip/ryan-wells-pumps-20533306?lid=1001782175490

The text is inside a dd element, which always follows the dt element containing "Services/Products":

import requests
from lxml import html

url = "https://www.yellowpages.com/deland-fl/mip/ryan-wells-pumps-20533306?lid=1001782175490"
headers = {'User-Agent': 'Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:76.0) Gecko/20100101 Firefox/76.0'}

session = requests.Session()
r = session.get(url, timeout=30, headers=headers)
t = html.fromstring(r.content)

# First text node of a <dd> that follows the "Services/Products" <dt>, or '' if there is none
matches = t.xpath('//dd[preceding-sibling::dt[contains(.,"Services/Products")]]/text()[1]')
products = matches[0] if matches else ''

Is it possible to get the same text with BeautifulSoup (and, if possible, CSS selectors) instead of lxml and XPath?

Solution

Try BeautifulSoup together with Requests; it is much easier. Here is some code:

# BeautifulSoup is an HTML parser; you can look up specific elements in a BeautifulSoup object
from bs4 import BeautifulSoup
from requests import get

url = "https://www.yellowpages.com/deland-fl/mip/ryan-wells-pumps-20533306?lid=1001782175490"
obj = BeautifulSoup(get(url).content, "html.parser")

# Get the section that contains the services
business_info = obj.find("section", {"id": "business-info"})

# Get all <dd> elements, so you can pick the one you need from the list
all_dd = business_info.find_all("dd")

# Pick the <dd> that holds the text you need (index 2 on this page; the position may differ on other listings)
services_and_products = all_dd[2]

# Get its text
text = services_and_products.text

# All done
print(text)
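Picking `all_dd[2]` by position only works while the page lists the same fields in the same order. A minimal sketch of a label-based lookup is shown here instead. The inline HTML is a made-up stand-in for the real page (its structure is assumed from the `section#business-info`, `dt` and `dd` names used in this thread, not verified), and `definition_map` is a hypothetical helper name.

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup; the real page's structure is assumed, not verified.
SAMPLE_HTML = """
<section id="business-info">
  <dl>
    <dt>General Info</dt><dd>Family owned since 1980.</dd>
    <dt>Hours</dt><dd>Mon - Fri 8:00 am - 5:00 pm</dd>
    <dt>Services/Products</dt><dd>Pumps|Well Pumps|Water Tanks</dd>
  </dl>
</section>
"""


def definition_map(section):
    """Map each <dt> label to the text of the next <dd> sibling after it."""
    result = {}
    for dt in section.find_all("dt"):
        dd = dt.find_next_sibling("dd")
        if dd is not None:
            result[dt.get_text(strip=True)] = dd.get_text(" ", strip=True)
    return result


soup = BeautifulSoup(SAMPLE_HTML, "html.parser")
info = definition_map(soup.find("section", {"id": "business-info"}))

# Look the field up by its label rather than by its position in the list.
products = info.get("Services/Products", "")
print(products)
```

With this approach a missing field yields an empty string rather than an `IndexError` or the wrong field's text.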

Try the following on your page:

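# Assumes soup = BeautifulSoup(response.content, "html.parser") for the page above
inf = soup.select_one('section#business-info dl')
# find_next_sibling("dd") skips any whitespace text node that next_sibling could return
target = inf.find("dt", string="Services/Products").find_next_sibling("dd")
for t in target.stripped_strings:
    print(t)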

Output:

Pumps|Well Pumps|Residential Pumps|Water Pumps|Residential Pumps|Well Pumps|Residential Pumps|Commercial Pumps|Well Pumps|Pumps & Water Tanks|Residential & Commercial|Residential & Commercial|Water Tanks|Pump Maintenance|Pump Maintenance|Free Estimates|Service & Repair|Emergency Service Avail|Residential & Commercial|Service & Repair|Residential & Commercial|Pumps|Bonded|Insured|Water Tanks|Deep Wells|4 Wells|Pumps & Water Tanks 4'' Wells|2' - 12' Diameter Wells
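Since the question also asks about CSS selectors, here is a rough sketch that does the whole lookup with a single selector and then splits the `|`-separated output into a de-duplicated list. It assumes a Beautiful Soup install whose bundled Soup Sieve supports the non-standard `:-soup-contains()` pseudo-class (older releases spelled it `:contains()`), and it runs against made-up sample markup rather than the live page.

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup; the real page's structure is assumed, not verified.
SAMPLE_HTML = """
<section id="business-info">
  <dl>
    <dt>Hours</dt><dd>Mon - Fri 8:00 am - 5:00 pm</dd>
    <dt>Services/Products</dt><dd>Pumps|Well Pumps|Residential Pumps|Water Pumps|Residential Pumps|Well Pumps</dd>
  </dl>
</section>
"""

soup = BeautifulSoup(SAMPLE_HTML, "html.parser")

# "dt + dd" selects the <dd> element immediately following the matching <dt>.
selector = 'section#business-info dt:-soup-contains("Services/Products") + dd'
dd = soup.select_one(selector)
raw = dd.get_text(strip=True) if dd is not None else ""

# Split on "|" and drop repeats while keeping first-seen order.
items = list(dict.fromkeys(part.strip() for part in raw.split("|") if part.strip()))
print(items)
```

`dict.fromkeys` is used for de-duplication because it keeps the first-seen order, which a plain `set` would not.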

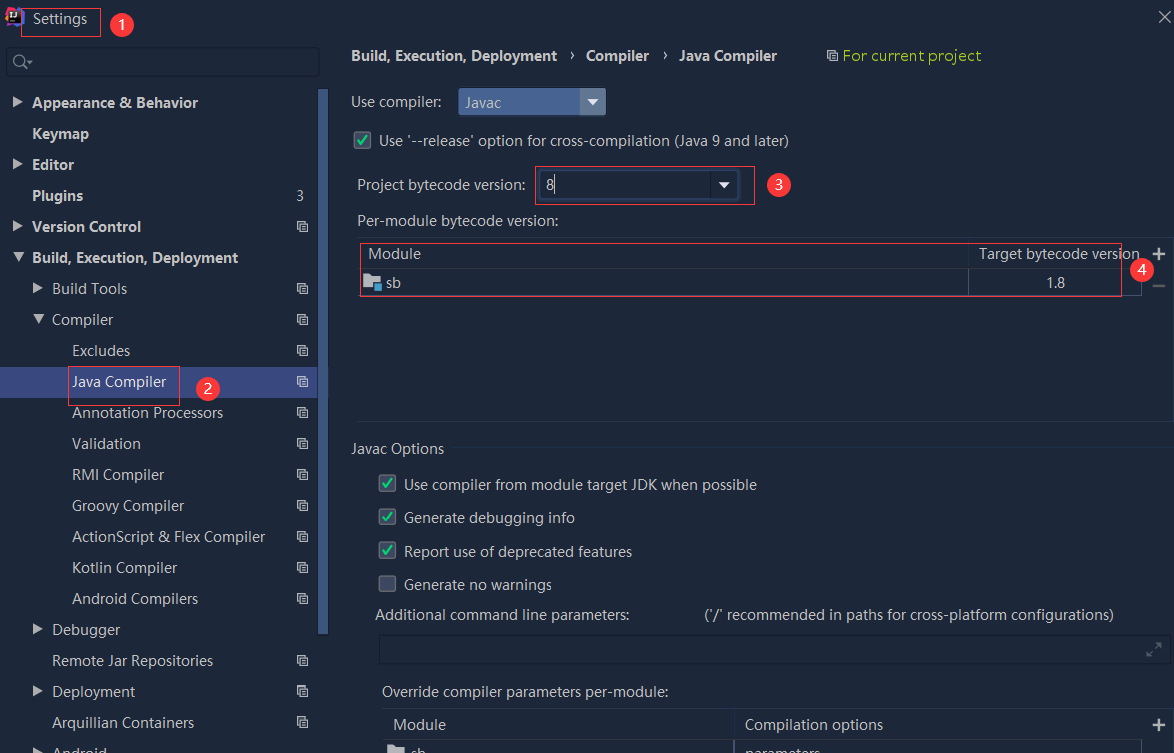

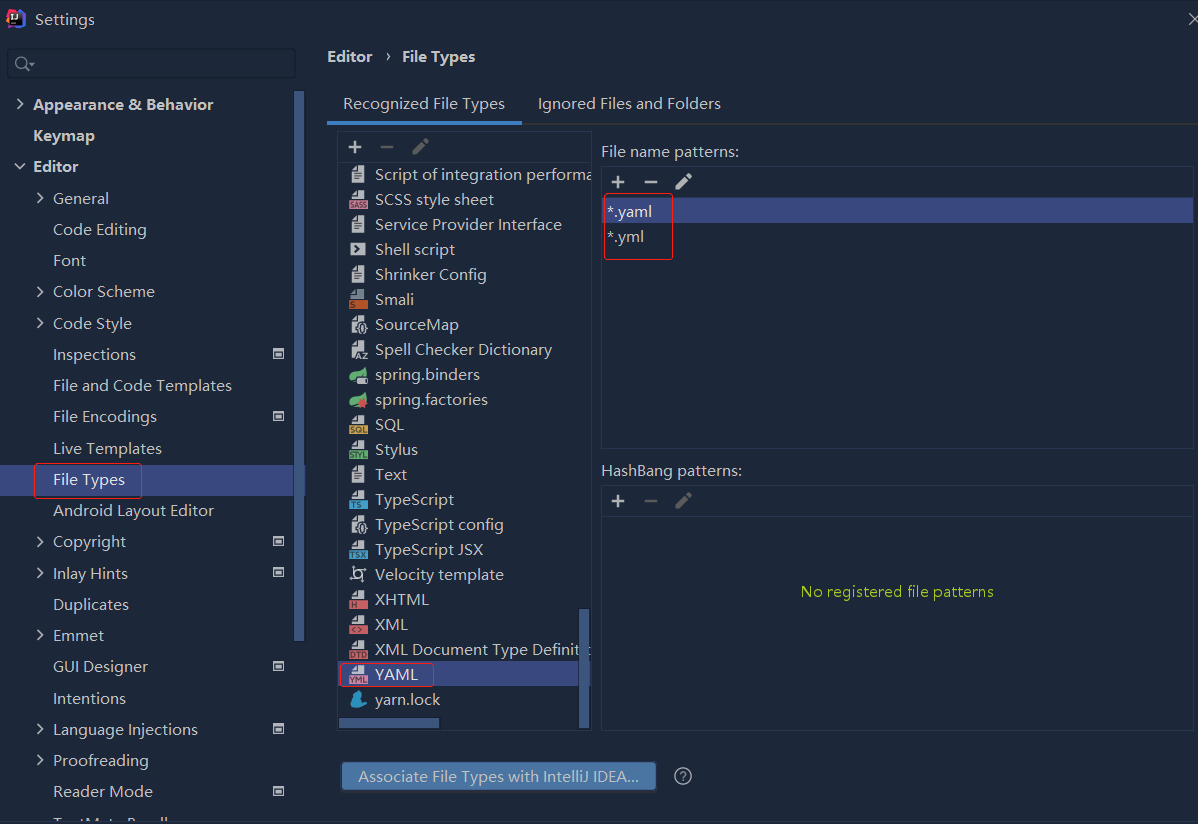

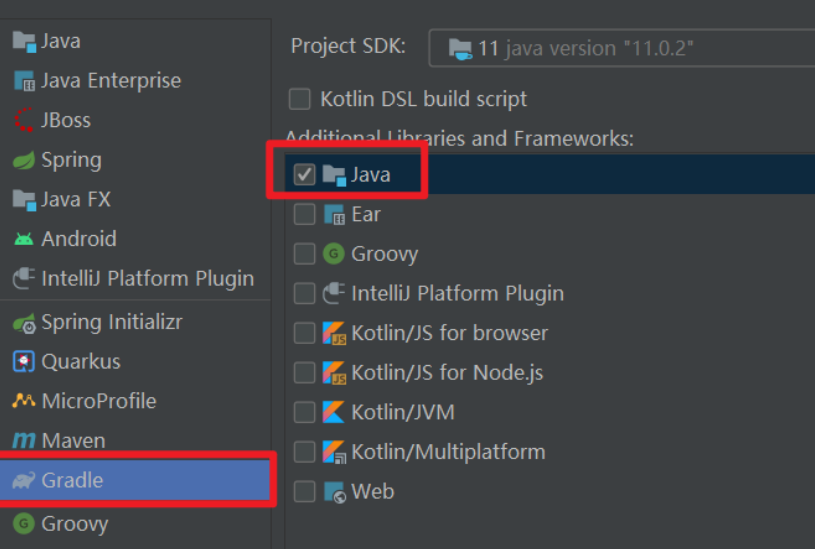
