Problem description

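Using Beautiful Soup and pandas, I am trying to append all the links on a website to a list with the code below. I can scrape all the relevant information from the table across all pages, and the code mostly works. The small problem is that only the links from the last page appear, which is not the output I expect. In the end, I want a list containing all 40 links from the 2 pages (next to the required information). There are 618 pages in total, but I am trying to scrape 2 pages first. Do you have any suggestions on how to adjust the code so that every link is appended to the table? Many thanks in advance.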

    import pandas as pd
    import requests
    from bs4 import BeautifulSoup

    hdr = {'User-Agent': 'Chrome/84.0.4147.135'}
    dfs = []

    for page_number in range(2):
        http = "http://example.com/&Page={}".format(page_number + 1)
        print('Downloading page %s...' % http)
        url = requests.get(http, headers=hdr)
        soup = BeautifulSoup(url.text, 'html.parser')
        table = soup.find('table')
        df_list = pd.read_html(url.text)
        df = pd.concat(df_list)
        dfs.append(df)

    links = []
    for tr in table.findAll("tr"):
        trs = tr.findAll("td")
        for each in trs:
            try:
                link = each.find('a')['href']
                links.append(link)
            except:
                pass

    df['Link'] = links
    final_df = pd.concat(dfs)
    final_df.to_csv('myfile.csv', index=False, encoding='utf-8-sig')

Solution

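The problem is in your logic. The Link column is only added to the last df because that code sits outside the page loop: by the time it runs, `table` and `df` refer to the last page only. Collect the links inside the page loop, add them to df, and then append df to your dfs list: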

    import pandas as pd
    import requests
    from bs4 import BeautifulSoup

    hdr = {'User-Agent': 'Chrome/84.0.4147.135'}
    dfs = []

    for page_number in range(2):
        http = "http://example.com/&Page={}".format(page_number + 1)
        print('Downloading page %s...' % http)
        url = requests.get(http, headers=hdr)
        soup = BeautifulSoup(url.text, 'html.parser')
        table = soup.find('table')
        df_list = pd.read_html(url.text)
        df = pd.concat(df_list)

        # Collect the links for this page while its table is still in scope.
        links = []
        for tr in table.find_all("tr"):
            trs = tr.find_all("td")
            for each in trs:
                try:
                    link = each.find('a')['href']
                    links.append(link)
                except (TypeError, KeyError):
                    # No <a> in this cell (TypeError) or no href on it (KeyError).
                    pass

        # Attach the links to this page's frame before storing it.
        # This requires exactly one link per row of df.
        df['Link'] = links
        dfs.append(df)

    final_df = pd.concat(dfs)
    final_df.to_csv('myfile.csv', index=False, encoding='utf-8-sig')
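One thing to watch with `df['Link'] = links` is that pandas raises a `ValueError` when the list length differs from the number of rows, which can happen if a row has no link or more than one. The sketch below is one possible way to guard against that, not part of the original answer: it returns exactly one entry per data row (the first `href` found, or `None`). The helper name `extract_row_links` and the sample HTML are made up for illustration, and it assumes that header rows use only `<th>` cells and that `pd.read_html` treats that row as the column header.

```python
from bs4 import BeautifulSoup


def extract_row_links(html):
    """Return one entry per data row: the first href in the row, or None.

    Hypothetical helper for illustration; assumes the first <table> in the
    document is the one of interest.
    """
    soup = BeautifulSoup(html, "html.parser")
    table = soup.find("table")
    if table is None:
        return []

    row_links = []
    for tr in table.find_all("tr"):
        # Rows without any <td> (for example a header row made of <th>
        # cells) are skipped so the result lines up with the data rows.
        if not tr.find_all("td"):
            continue
        # href=True only matches <a> tags that actually carry an href.
        anchor = tr.find("a", href=True)
        row_links.append(anchor["href"] if anchor else None)
    return row_links


# Made-up sample table: the second data row has no link.
sample_html = """
<table>
  <tr><th>Name</th><th>Price</th></tr>
  <tr><td><a href="/item/1">First</a></td><td>10</td></tr>
  <tr><td>Second</td><td>20</td></tr>
  <tr><td><a href="/item/3">Third</a></td><td>30</td></tr>
</table>
"""

print(extract_row_links(sample_html))  # ['/item/1', None, '/item/3']
```

Inside the page loop, `df['Link'] = extract_row_links(url.text)` could then replace the nested `for` loops, provided the row count really matches what `pd.read_html` returns for that page.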

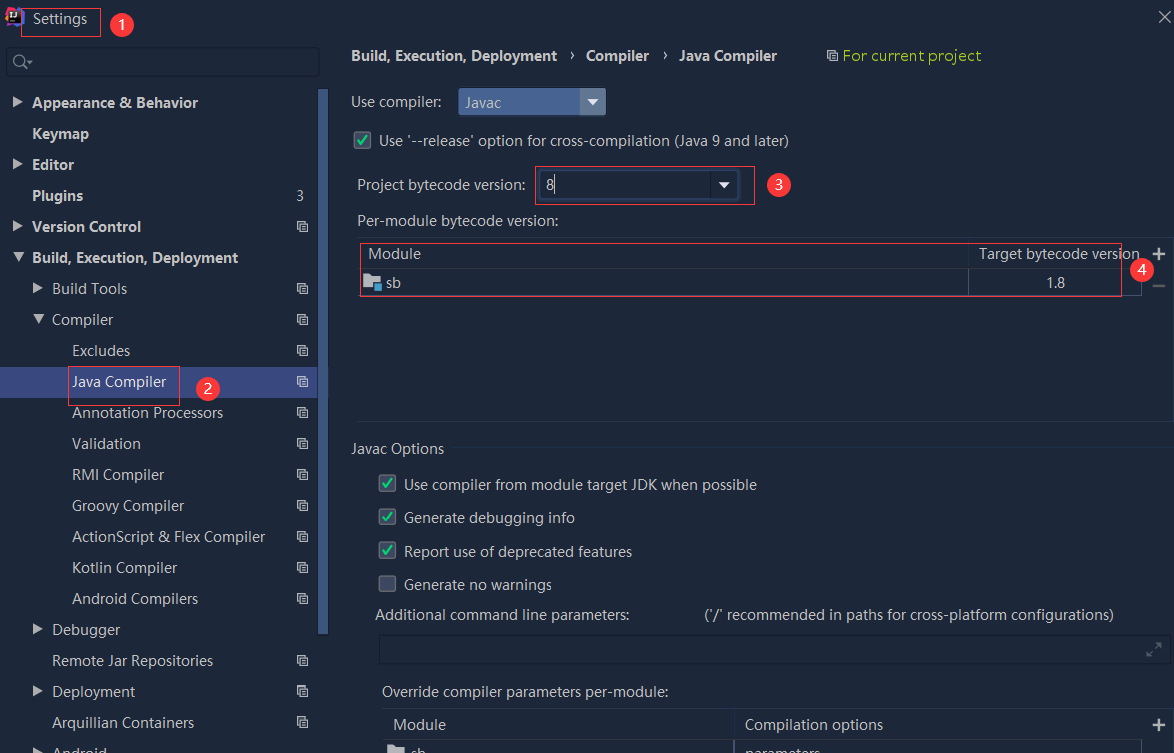

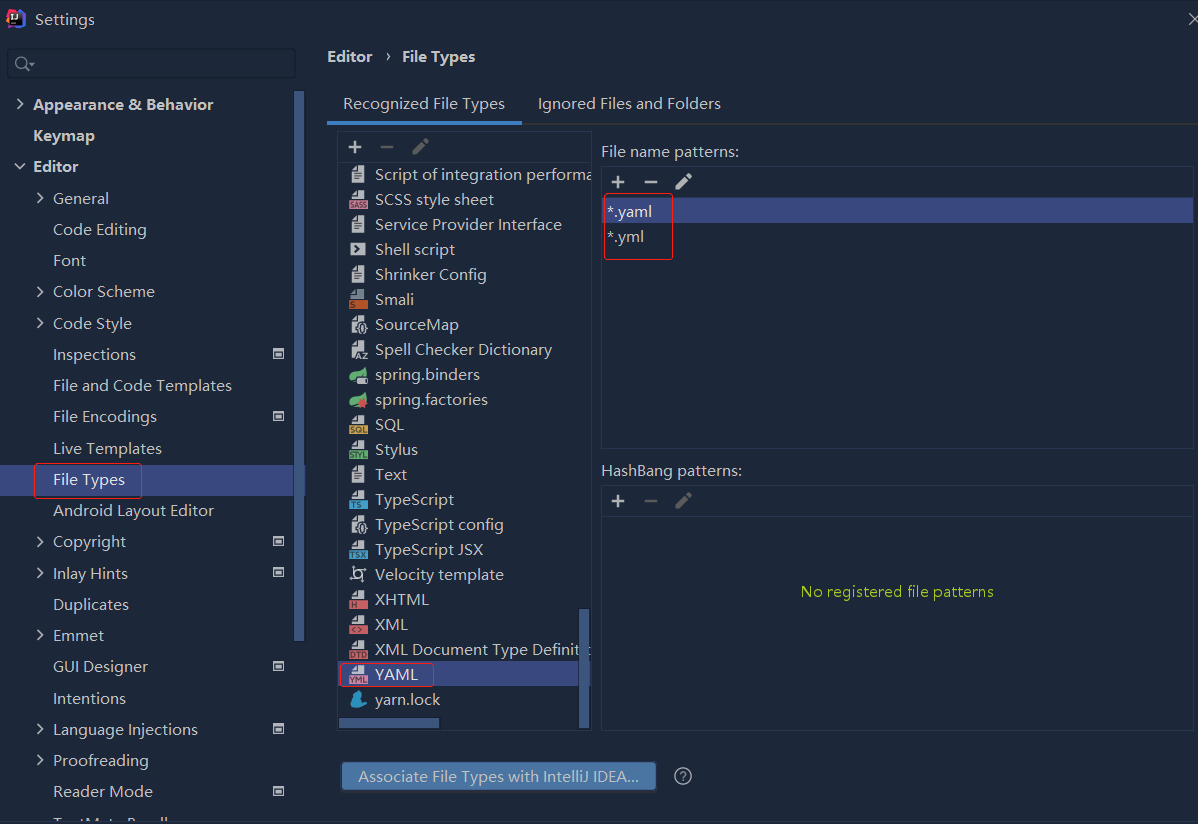

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

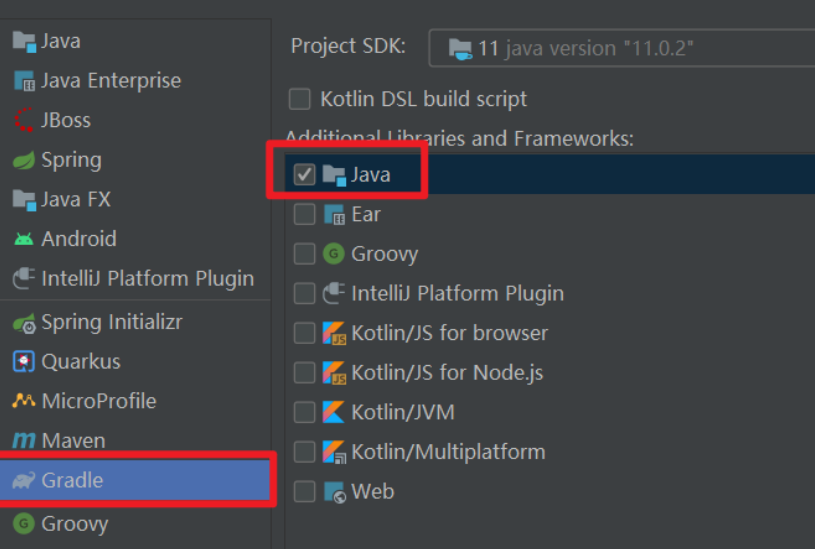

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...