Problem description

I have a question about asynchronous requests:

How do I save response.json() to a file on the fly?

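I want to make the requests and save each response to a .json file without keeping it in memory.

Currently the script only prints the JSON responses; I could save them once they have all been scraped: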

    import asyncio

    import aiohttp


    async def fetch(sem, session, url):
        # The semaphore caps the number of requests in flight.
        async with sem:
            async with session.get(url) as response:
                return await response.json()  # here


    async def fetch_all(urls):
        sem = asyncio.Semaphore(4)
        async with aiohttp.ClientSession() as session:
            # Every decoded response is held in memory until all have finished.
            results = await asyncio.gather(
                *[fetch(sem, session, url) for url in urls]
            )
            return results


    if __name__ == '__main__':
        urls = (
            "https://public.api.openprocurement.org/api/2.5/tenders/6a0585fcfb05471796bb2b6a1d379f9b",
            "https://public.api.openprocurement.org/api/2.5/tenders/d1c74ec8bb9143d5b49e7ef32202f51c",
            "https://public.api.openprocurement.org/api/2.5/tenders/a3ec49c5b3e847fca2a1c215a2b69f8d",
            "https://public.api.openprocurement.org/api/2.5/tenders/52d8a15c55dd4f2ca9232f40c89bfa82",
            "https://public.api.openprocurement.org/api/2.5/tenders/b3af1cc6554440acbfe1d29103fe0c6a",
            "https://public.api.openprocurement.org/api/2.5/tenders/1d1c6560baac4a968f2c82c004a35c90",
        )
        data = asyncio.run(fetch_all(urls))
        print(data)

But this does not feel right to me, because it will certainly fail as soon as it runs out of memory.

Any suggestions?

Edit

I have limited my post to a single question.

Solution

"How do I save response.json() to a file on the fly?"

First of all, do not use response.json(); use the streaming API instead:

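    async def fetch(sem, session, url):
        async with sem, session.get(url) as response:
            # Placeholder name; each URL needs its own file in practice.
            with open("some_file_name.json", "wb") as out:
                # Write the body chunk by chunk instead of loading it whole.
                async for chunk in response.content.iter_chunked(4096):
                    out.write(chunk)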
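The snippet above writes every response to the same placeholder file name. Below is a minimal sketch of how it might be combined with the fetch_all from the question so that each URL is streamed to its own file. It assumes the last path segment of each URL is usable as a file name; filename_for, fetch_to_file, out_dir and limit are hypothetical names that do not appear in the original posts.

```python
import asyncio
from pathlib import Path
from urllib.parse import urlsplit


def filename_for(url):
    """Hypothetical helper: name the file after the URL's last path segment."""
    last_segment = urlsplit(url).path.rstrip("/").split("/")[-1]
    return f"{last_segment or 'index'}.json"


async def fetch_to_file(sem, session, url, out_dir):
    """Stream one response body to its own file, 4096 bytes at a time."""
    target = Path(out_dir) / filename_for(url)
    async with sem, session.get(url) as response:
        response.raise_for_status()  # do not save error pages as data
        with open(target, "wb") as out:
            async for chunk in response.content.iter_chunked(4096):
                out.write(chunk)
    return target


async def fetch_all(urls, out_dir, limit=4):
    """Download all URLs with at most `limit` requests in flight."""
    import aiohttp  # third-party dependency, as in the question

    Path(out_dir).mkdir(parents=True, exist_ok=True)
    sem = asyncio.Semaphore(limit)
    async with aiohttp.ClientSession() as session:
        return await asyncio.gather(
            *(fetch_to_file(sem, session, url, out_dir) for url in urls)
        )
```

It could be run with asyncio.run(fetch_all(urls, "responses")), where "responses" is an arbitrary output directory. Only the paths of the written files are gathered, so memory use per request stays around one chunk rather than the full body. Note that out.write is a blocking call; for small chunks on a local disk this is usually acceptable, but it is a simplification.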

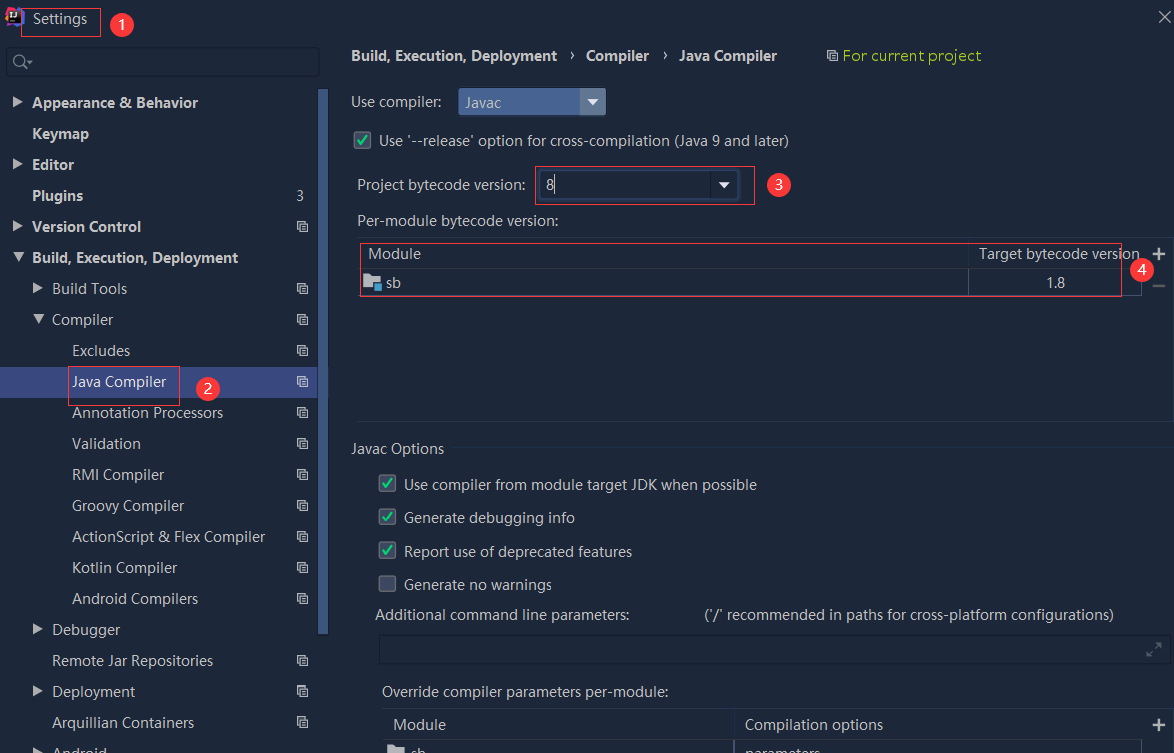

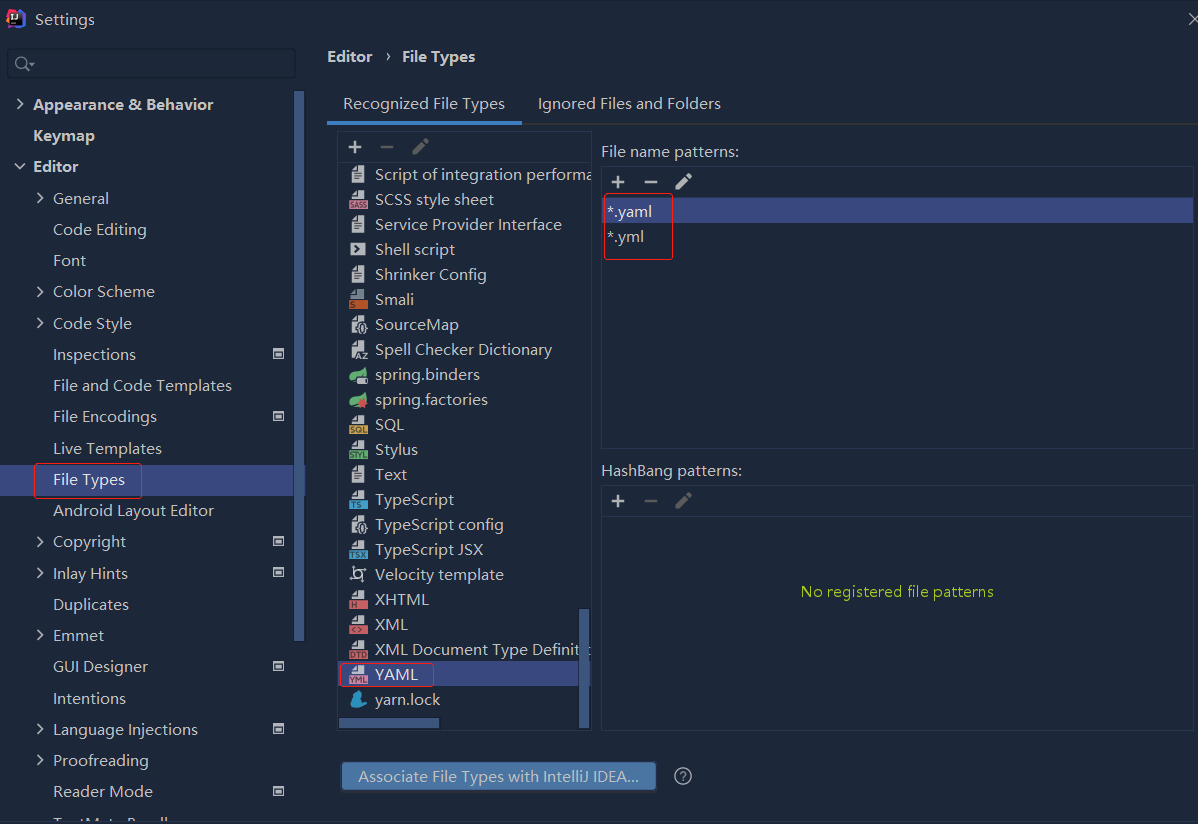

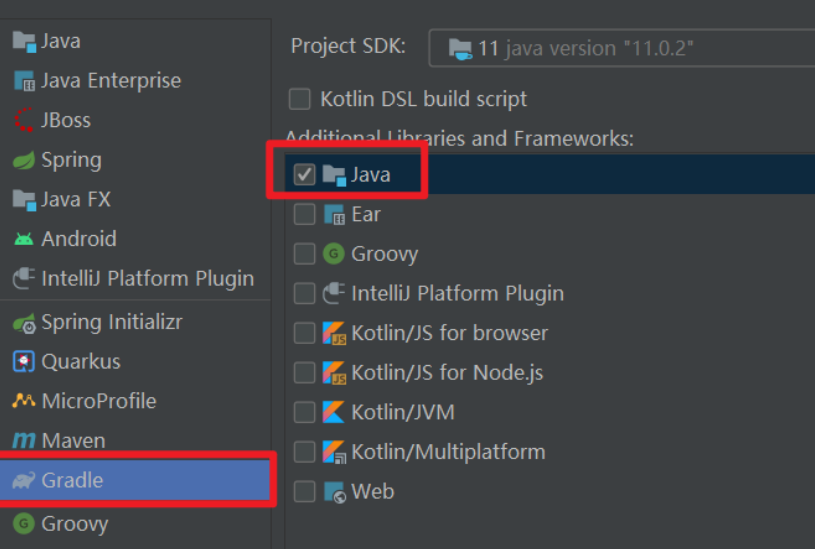
