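Problem description

I am trying to create a spider with Selenium that searches for merchants on https://www.trustpilot.com and then retrieves the rating/score from the search results. Because there are many merchants to look up, I created a list that Selenium loops through, storing each page_source in a list. The idea is that Scrapy should then parse this list of page_source values and return a .json file with the merchants' ratings. After running the spider, I see that 0 pages were crawled and the .json file is empty. I can't figure out why nothing is being parsed. Here is my code: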

# -*- coding: utf-8 -*-
import scrapy
from scrapy import Selector
from selenium.webdriver.common.keys import Keys
from selenium import webdriver
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.common.by import By
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.support.ui import WebDriverWait
from shutil import which

queries = ['yeewtuden.com','1a.lv','grishkoshop.com']

class SeleniumTestSpider(scrapy.Spider):
    name = 'selenium_test'
    allowed_domains = ['www.trustpilot.com']
    start_urls = ["www.trustpilot.com"]
    page_responses = []

    def __init__(self):
        super().__init__()
        chrome_options = Options()
        chrome_options.add_argument("--headless")
        chrome_path = which("chromedriver")
        driver = webdriver.Chrome(executable_path=chrome_path,options=chrome_options)
        driver.implicitly_wait(10)
        driver.get("https://www.trustpilot.com")
        # search_field = driver.find_element_by_xpath("//input[@class='searchInputField___3e9zp']")
        for query in queries:
            search_field = WebDriverWait(driver,7).until(EC.presence_of_element_located((
                By.CLASS_NAME,'searchInputField___3e9zp')))
            search_field = driver.find_element_by_xpath("//input[@class='searchInputField___3e9zp']")
            search_field.send_keys(query)
            search_field.send_keys(Keys.ENTER)
            self.page_responses.append(driver.page_source)
            driver.back()
        driver.close()

    def parse(self,response):
        for resp in self.page_responses:
            resp = Selector(text=resp)
            score = resp.xpath("//p[@class='header_trustscore']/text()").get()
            yield {
                'rating': score
            }

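Solution

You can use the code below to return the ratings.

Create an object of the class, then create a generator from it that is used to get the ratings.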

testSpider = SeleniumTestSpider()
parseGenerator = testSpider.parse(testSpider.page_responses)
for i in parseGenerator:
    print(i, end=" ")
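Calling parse() by hand works because the method never uses its response argument: it only walks self.page_responses, so the call returns a generator over the stored pages. The sketch below illustrates that pattern with the standard library only; it is not the spider itself. StoredPagesParser, SAMPLE_PAGES and SCORE_RE are hypothetical names, the sample HTML is made up, and a simplified regular expression stands in for the XPath query so that the example runs without Scrapy or Selenium.

```python
import re

# Hypothetical stand-ins for the HTML that Selenium would have stored in
# page_responses; the real pages are far larger and their markup may differ.
SAMPLE_PAGES = [
    "<html><body><p class='header_trustscore'>4.6</p></body></html>",
    "<html><body><p class='header_trustscore'>3.9</p></body></html>",
    "<html><body><p>no score on this page</p></body></html>",
]

# Simplified pattern for <p class="header_trustscore">...</p>. A regex replaces
# the XPath query here only so the sketch needs no third-party packages.
SCORE_RE = re.compile(r"<p[^>]*class=['\"]header_trustscore['\"][^>]*>([^<]*)</p>")


class StoredPagesParser:
    """Minimal stand-in for the spider: holds page sources and parses them lazily."""

    def __init__(self, pages):
        self.page_responses = list(pages)

    def parse(self, response=None):
        # `response` is ignored, as in the spider's parse(), which is why the
        # method can be called by hand with any argument.
        for html in self.page_responses:
            match = SCORE_RE.search(html)
            # Yield None when the element is absent, mirroring Selector.get().
            yield {"rating": match.group(1).strip() if match else None}


parser = StoredPagesParser(SAMPLE_PAGES)
ratings = list(parser.parse())
print(ratings)
```

Nothing is parsed until the generator is iterated, so the loop (or list() here) is what actually produces the rating dictionaries. A page without the target element yields a rating of None instead of raising an error.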

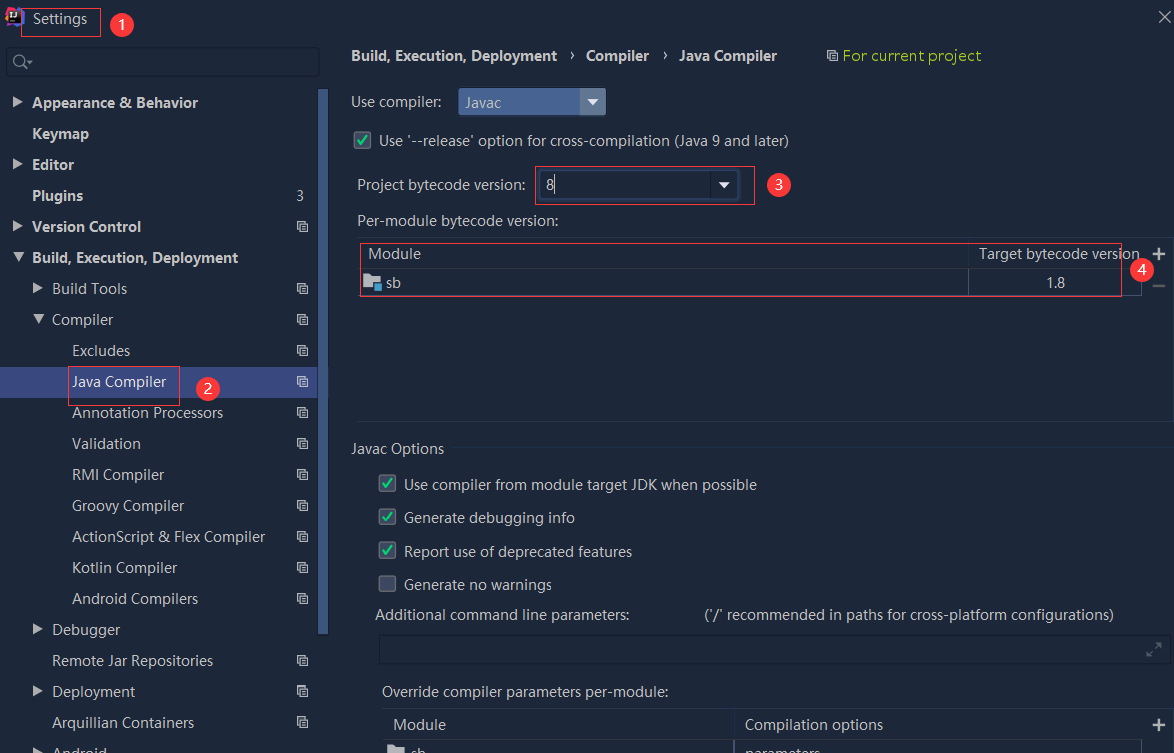

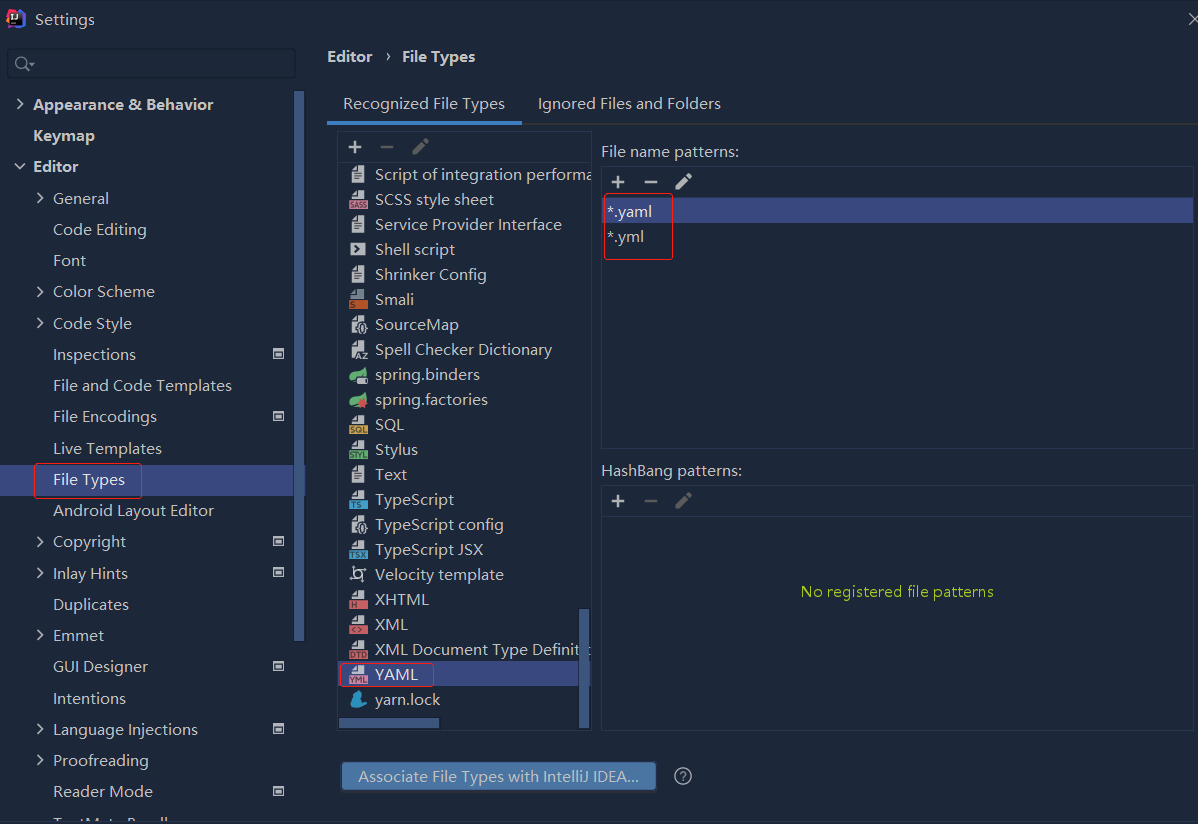

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

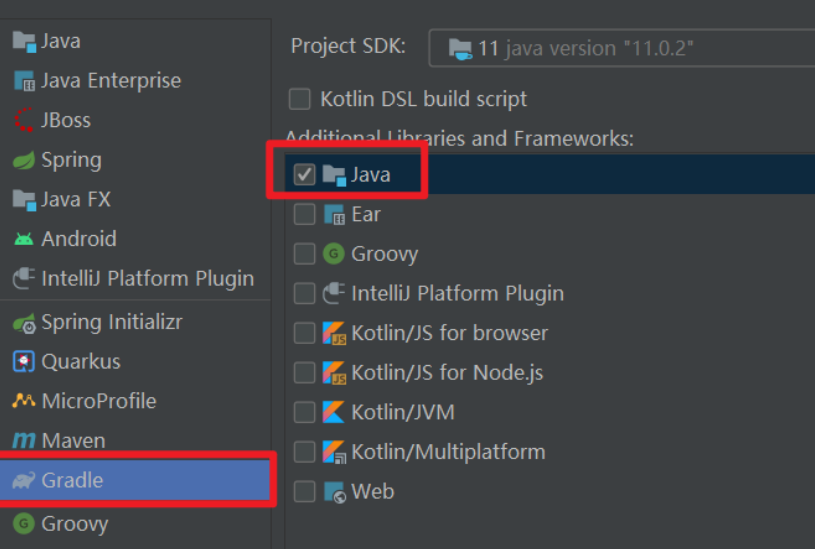

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...