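Problem description

Hello, below is the PyTorch model I am trying to run, but it keeps raising an error. I have also posted the error trace. The model runs fine unless I add the convolution layers. I am still new to deep learning and PyTorch, so I apologize if this is a silly question. I am using Conv1d: why does Conv1d expect a 3-dimensional input, and why is it receiving a 2-dimensional one? That seems strange to me as well.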

    class Net(nn.Module):
        def __init__(self):
            super().__init__()
            self.fc1 = nn.Linear(CROP_SIZE*CROP_SIZE*3,512)
            self.conv1d1 = nn.Conv1d(in_channels=512,out_channels=64,kernel_size=1,stride=2)
            self.fc2 = nn.Linear(64,128)
            self.conv1d2 = nn.Conv1d(in_channels=128,out_channels=64,kernel_size=1,stride=2)
            self.fc3 = nn.Linear(64,256)
            self.conv1d3 = nn.Conv1d(in_channels=256,out_channels=64,kernel_size=1,stride=2)
            self.fc4 = nn.Linear(64,256)
            self.fc4 = nn.Linear(256,128)
            self.fc5 = nn.Linear(128,64)
            self.fc6 = nn.Linear(64,32)
            self.fc7 = nn.Linear(32,64)
            self.fc8 = nn.Linear(64,frame['landmark_id'].nunique())

        def forward(self,x):
            x = F.relu(self.conv1d1(self.fc1(x)))
            x = F.relu(self.conv1d2(self.fc2(x)))
            x = F.relu(self.conv1d3(self.fc3(x)))
            x = F.relu(self.fc4(x))
            x = F.relu(self.fc5(x))
            x = F.relu(self.fc6(x))
            x = F.relu(self.fc7(x))
            x = self.fc8(x)
            return F.log_softmax(x,dim=1)

    net = Net()

    import torch.optim as optim

    loss_function = nn.CrossEntropyLoss()
    net.to(torch.device('cuda:0'))

    for epoch in range(3): # 3 full passes over the data
        optimizer = optim.Adam(net.parameters(),lr=0.001)
        for data in tqdm(train_loader): # `data` is a batch of data
            X = data['image'].to(device) # X is the batch of features
            y = data['landmarks'].to(device) # y is the batch of targets.
            optimizer.zero_grad() # sets gradients to 0 before loss calc. You will do this likely every step.
            output = net(X.view(-1,CROP_SIZE*CROP_SIZE*3)) # pass in the reshaped batch
            # print(np.argmax(output))
            # print(y)
            loss = F.nll_loss(output,y) # calc and grab the loss value
            loss.backward() # apply this loss backwards thru the network's parameters
            optimizer.step() # attempt to optimize weights to account for loss/gradients
        print(loss) # print loss. We hope loss (a measure of wrong-ness) declines!

Error trace

    ---------------------------------------------------------------------------
    RuntimeError                              Traceback (most recent call last)
    <ipython-input-42-f5ed7999ce57> in <module>
          5         y = data['landmarks'].to(device) # y is the batch of targets.
          6         optimizer.zero_grad() # sets gradients to 0 before loss calc. You will do this likely every step.
    ----> 7         output = net(X.view(-1,CROP_SIZE*CROP_SIZE*3)) # pass in the reshaped batch
          8         # print(np.argmax(output))
          9         # print(y)

    /opt/conda/lib/python3.7/site-packages/torch/nn/modules/module.py in __call__(self,*input,**kwargs)
        548             result = self._slow_forward(*input,**kwargs)
        549         else:
    --> 550             result = self.forward(*input,**kwargs)
        551         for hook in self._forward_hooks.values():
        552             hook_result = hook(self,input,result)

    <ipython-input-37-6d3e34d425a0> in forward(self,x)
         16
         17     def forward(self,x):
    ---> 18         x = F.relu(self.conv1d1(self.fc1(x)))
         19         x = F.relu(self.conv1d2(self.fc2(x)))
         20         x = F.relu(self.conv1d3(self.fc3(x)))

    /opt/conda/lib/python3.7/site-packages/torch/nn/modules/module.py in __call__(self,*input,**kwargs)

    /opt/conda/lib/python3.7/site-packages/torch/nn/modules/conv.py in forward(self,input)
        210                             _single(0),self.dilation,self.groups)
        211         return F.conv1d(input,self.weight,self.bias,self.stride,
    --> 212                         self.padding,self.dilation,self.groups)
        213
        214

    RuntimeError: Expected 3-dimensional input for 3-dimensional weight [64,512,1], but got 2-dimensional input of size [4,512] instead

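Solution

You should learn how convolution works (see, for example, this answer) and some neural network basics (this tutorial from PyTorch).

Basically, Conv1d expects input of shape [batch, channels, features], where features can be, for example, a number of timesteps and may vary in length (see the example).

nn.Linear expects input of shape [batch, features], because it is fully connected: every input feature is connected to every output feature.

For torch.nn.Linear, you can verify these shapes yourself: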

    import torch

    layer = torch.nn.Linear(20, 10)
    data = torch.randn(64, 20)  # [batch, in_features]
    layer(data).shape  # [64, 10], i.e. [batch, out_features]

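For Conv1d:

    layer = torch.nn.Conv1d(in_channels=20, out_channels=10, kernel_size=3, padding=1)
    data = torch.randn(64, 20, 15)  # [batch, channels, timesteps]
    layer(data).shape  # [64, 10, 15], i.e. [batch, out_channels, timesteps]

    # The number of timesteps may vary; the channel count must match in_channels.
    layer(torch.randn(32, 20, 25)).shape  # [32, 10, 25]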
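The timestep dimension in the Conv1d example stays at 15 and 25 because `kernel_size=3` with `padding=1` and the default stride of 1 preserves the length. As a rough sketch, the helper below (the name `conv1d_out_len` is made up for illustration) evaluates the output-length formula given in the PyTorch Conv1d documentation, so the shapes can be checked by hand:

```python
def conv1d_out_len(l_in, kernel_size, stride=1, padding=0, dilation=1):
    """Output length of a 1-D convolution, following the formula in the
    torch.nn.Conv1d documentation (integer floor division)."""
    return (l_in + 2 * padding - dilation * (kernel_size - 1) - 1) // stride + 1


# kernel_size=3 with padding=1 keeps the length unchanged
print(conv1d_out_len(15, kernel_size=3, padding=1))  # 15
print(conv1d_out_len(25, kernel_size=3, padding=1))  # 25

# kernel_size=1 with stride=2 on a length-1 input, as in conv1d1 from the question
print(conv1d_out_len(1, kernel_size=1, stride=2))  # 1
```

The last call suggests that a `kernel_size=1, stride=2` layer applied to a length-1 input still returns length 1, so the stride has no visible effect in that setting.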

By the way, when working with images you should use torch.nn.Conv2d instead.
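A minimal sketch of what that could look like, assuming 3-channel images. The values `CROP_SIZE = 32`, the batch size of 4, and the 16 output channels are placeholders chosen for illustration, not taken from the question:

```python
import torch
import torch.nn as nn

CROP_SIZE = 32  # placeholder; the question does not state the real value

# Conv2d expects [batch, channels, height, width], so the image batch
# is passed as-is instead of being flattened with .view(-1, ...)
batch = torch.randn(4, 3, CROP_SIZE, CROP_SIZE)

conv = nn.Conv2d(in_channels=3, out_channels=16, kernel_size=3, padding=1)
features = conv(batch)
print(features.shape)  # torch.Size([4, 16, 32, 32])

# Flatten everything except the batch dimension before an nn.Linear layer
flat = features.flatten(start_dim=1)
print(flat.shape)  # torch.Size([4, 16384])
```

Flattening only after the convolutional layers lets them see the spatial layout of the image, which a leading nn.Linear layer would discard.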

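Most PyTorch functions operate on batched data, i.e. they accept input of size (batch_size, shape). @Szymon Maszke has already posted an answer covering this.

So in your case, you can use the unsqueeze and squeeze functions to add and remove the extra dimension.

Here is sample code: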

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    class Net(nn.Module):
        def __init__(self):
            super().__init__()
            self.fc1 = nn.Linear(100, 512)
            self.conv1d1 = nn.Conv1d(in_channels=512, out_channels=64, kernel_size=1, stride=2)
            self.fc2 = nn.Linear(64, 128)

        def forward(self, x):
            x = self.fc1(x)              # [batch, 512]
            x = x.unsqueeze(dim=2)       # [batch, 512, 1]: add a length dimension for Conv1d
            x = F.relu(self.conv1d1(x))  # [batch, 64, 1]
            x = x.squeeze(dim=2)         # [batch, 64]: remove only the length dimension
            x = self.fc2(x)              # [batch, 128]
            return x

    net = Net()
    bsize = 4
    inp = torch.randn((bsize, 100))
    out = net(inp)
    print(out.shape)  # torch.Size([4, 128])
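One detail worth checking in a forward pass like the one above: calling `squeeze()` with no argument removes every size-1 dimension, so a batch containing a single sample would lose its batch dimension as well. A small sketch of the difference (the tensor sizes are chosen for illustration only):

```python
import torch

# Shape after the Conv1d step for a batch of one sample: [batch=1, channels=64, length=1]
x = torch.randn(1, 64, 1)

# No argument: every size-1 dimension is dropped, including the batch dimension
print(x.squeeze().shape)       # torch.Size([64])

# Explicit dim: only the trailing length dimension is dropped
print(x.squeeze(dim=2).shape)  # torch.Size([1, 64])
```

Passing the dimension explicitly keeps the output shape at [batch, channels] regardless of the batch size.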

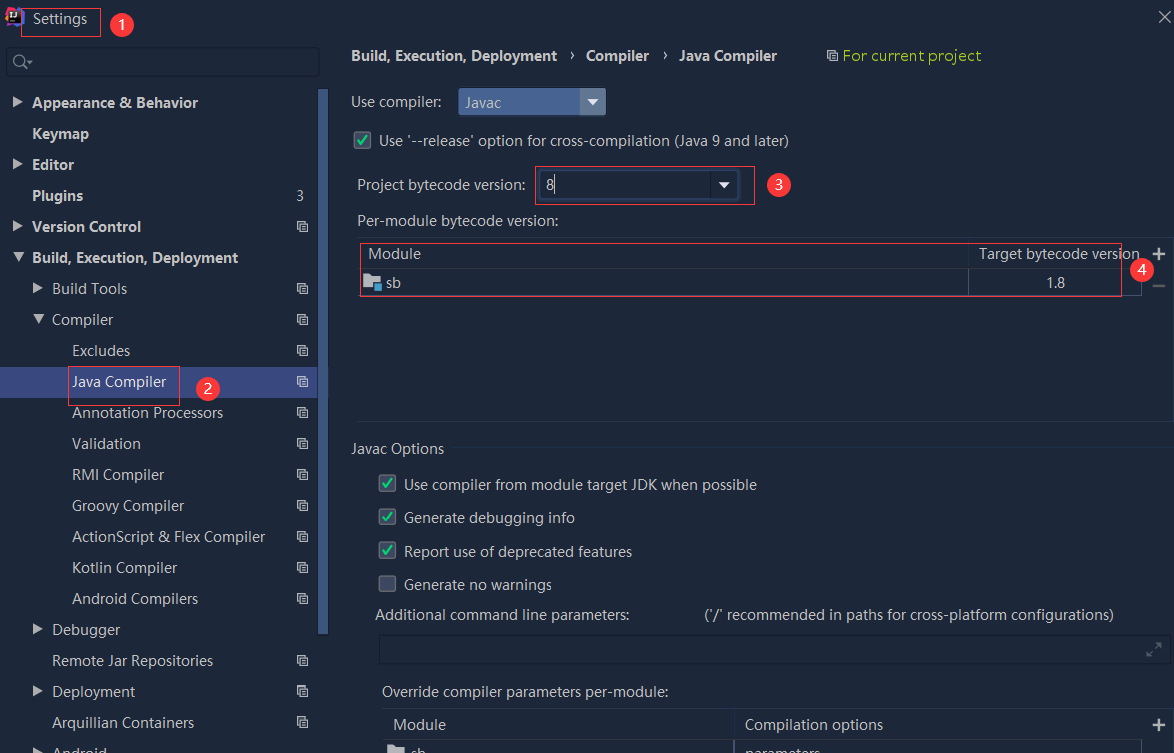

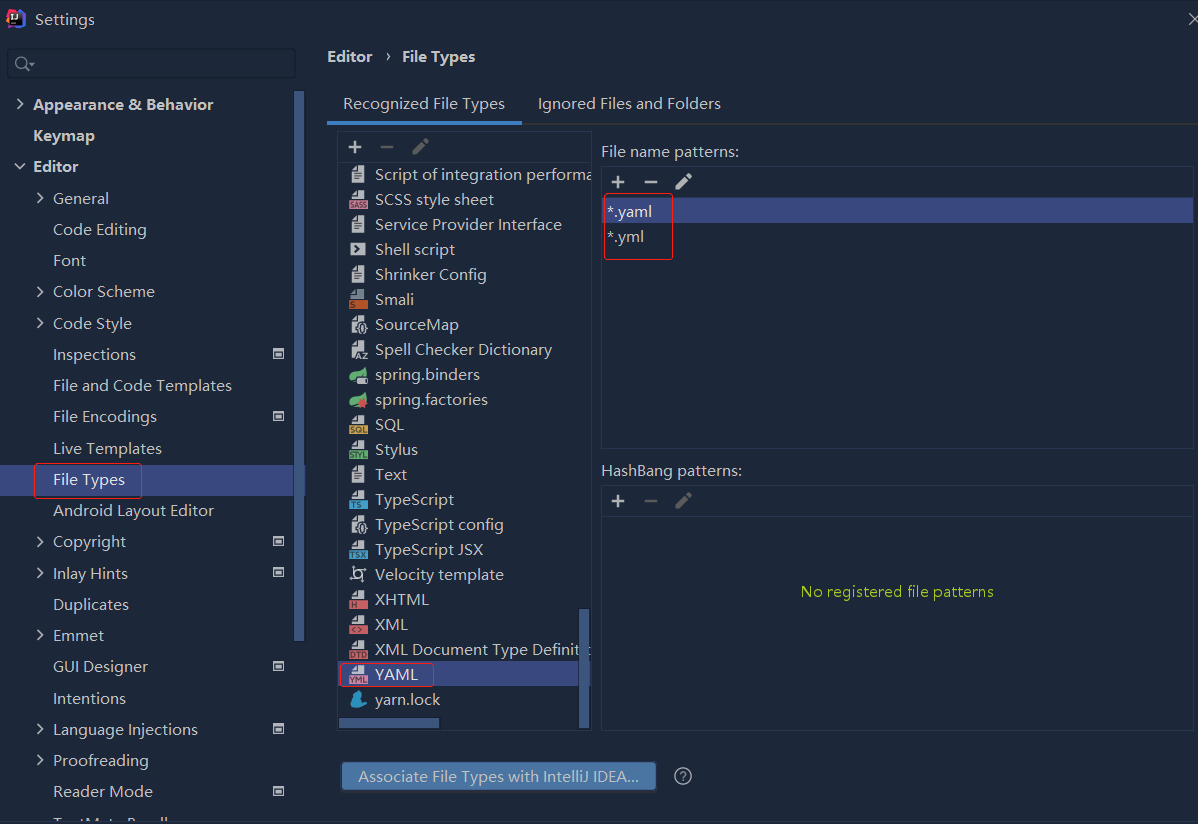

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

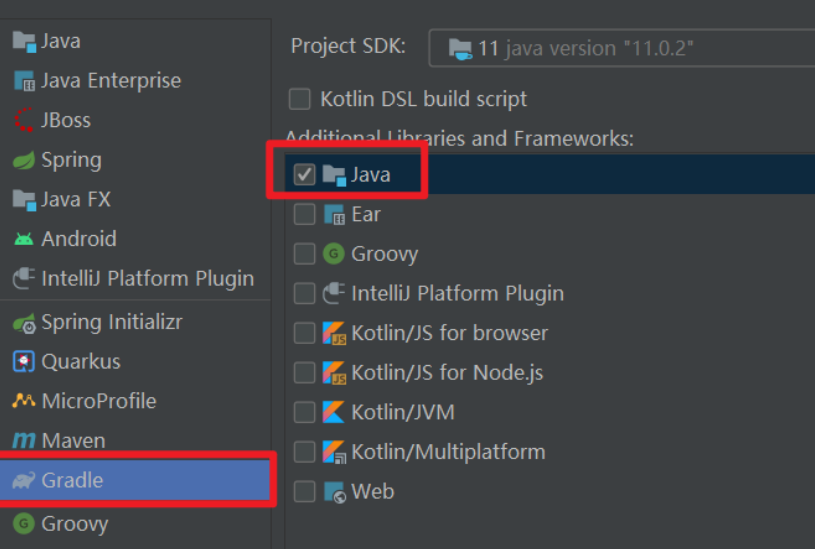

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...