Problem description

I am trying to build a graph of Twitter users, and I wrote the following code to do it:

import operator
import sys
import time
from urllib.error import URLError
from http.client import BadStatusLine
import json
import twitter
from functools import partial
from sys import maxsize as maxint
import itertools
import networkx
import matplotlib.pyplot as plt

G = networkx.Graph()

# Code and function taken from the twitter cookbook
def oauth_login():
    CONSUMER_KEY = 'xxxx'
    CONSUMER_SECRET = 'xxZD6r'
    OAUTH_TOKEN = 'xxNRYl'
    OAUTH_TOKEN_SECRET = 'xxHYJl'
    auth = twitter.oauth.OAuth(OAUTH_TOKEN, OAUTH_TOKEN_SECRET, CONSUMER_KEY, CONSUMER_SECRET)
    twitter_api = twitter.Twitter(auth=auth)
    return twitter_api

# Code and function taken from the twitter cookbook
def make_twitter_request(twitter_api_func, max_errors=10, *args, **kw):

    # A nested helper function that handles common HTTPErrors. Returns an updated
    # value for wait_period if the problem is a 500 level error. Blocks until the
    # rate limit is reset if it's a rate limiting issue (429 error). Returns None
    # for 401 and 404 errors, which require special handling by the caller.
    def handle_twitter_http_error(e, wait_period=2, sleep_when_rate_limited=True):
        if wait_period > 3600:  # Seconds
            print('Too many retries. Quitting.', file=sys.stderr)
            raise e
        if e.e.code == 401:
            print('Encountered 401 Error (Not Authorized)', file=sys.stderr)
            return None
        elif e.e.code == 404:
            print('Encountered 404 Error (Not Found)', file=sys.stderr)
            return None
        elif e.e.code == 429:
            print('Encountered 429 Error (Rate Limit Exceeded)', file=sys.stderr)
            if sleep_when_rate_limited:
                print("Retrying in 15 minutes...ZzZ...", file=sys.stderr)
                sys.stderr.flush()
                time.sleep(60 * 15 + 5)
                print('...ZzZ...Awake now and trying again.', file=sys.stderr)
                return 2
            else:
                raise e  # Caller must handle the rate limiting issue
        elif e.e.code in (500, 502, 503, 504):
            print('Encountered {0} Error. Retrying in {1} seconds'.format(e.e.code, wait_period), file=sys.stderr)
            time.sleep(wait_period)
            wait_period *= 1.5
            return wait_period
        else:
            raise e

    wait_period = 2
    error_count = 0
    while True:
        try:
            return twitter_api_func(*args, **kw)
        except twitter.api.TwitterHTTPError as e:
            error_count = 0
            wait_period = handle_twitter_http_error(e, wait_period)
            if wait_period is None:
                return
        except URLError as e:
            error_count += 1
            time.sleep(wait_period)
            wait_period *= 1.5
            print("URLError encountered. Continuing.", file=sys.stderr)
            if error_count > max_errors:
                print("Too many consecutive errors...bailing out.", file=sys.stderr)
                raise
        except BadStatusLine as e:
            error_count += 1
            time.sleep(wait_period)
            wait_period *= 1.5
            print("BadStatusLine encountered. Continuing.", file=sys.stderr)
            # Give up only after too many consecutive errors, as in the URLError branch
            if error_count > max_errors:
                print("Too many consecutive errors...bailing out.", file=sys.stderr)
                raise

# Code and function taken from the twitter cookbook
def get_friends_followers_ids(twitter_api, screen_name=None, user_id=None,
                              friends_limit=maxint, followers_limit=maxint):
    # Must have either screen_name or user_id (logical xor)
    assert (screen_name is not None) != (user_id is not None), "Must have screen_name or user_id, but not both"

    # See https://developer.twitter.com/en/docs/twitter-api/v1/accounts-and-users/follow-search-get-users/api-reference/get-friends-ids
    # for details on API parameters
    get_friends_ids = partial(make_twitter_request, twitter_api.friends.ids, count=5000)
    get_followers_ids = partial(make_twitter_request, twitter_api.followers.ids, count=5000)

    friends_ids, followers_ids = [], []
    for twitter_api_func, limit, ids, label in [
        [get_friends_ids, friends_limit, friends_ids, "friends"],
        [get_followers_ids, followers_limit, followers_ids, "followers"]
    ]:
        if limit == 0:
            continue
        cursor = -1
        while cursor != 0:
            # Use make_twitter_request via the partially bound callable...
            if screen_name:
                response = twitter_api_func(screen_name=screen_name, cursor=cursor)
            else:  # user_id
                response = twitter_api_func(user_id=user_id, cursor=cursor)
            if response is not None:
                ids += response['ids']
                cursor = response['next_cursor']
            print('Fetched {0} total {1} ids for {2}'.format(len(ids), label, (user_id or screen_name)), file=sys.stderr)
            # XXX: You may want to store data during each iteration to provide
            # an additional layer of protection from exceptional circumstances
            if len(ids) >= limit or response is None:
                break

    # Do something useful with the IDs, like store them to disk...
    return friends_ids[:friends_limit], followers_ids[:followers_limit]

# Code and function taken from the twitter cookbook
def get_user_profile(twitter_api, screen_names=None, user_ids=None):
    # Must have either screen_names or user_ids (logical xor)
    assert (screen_names is not None) != (user_ids is not None)

    items_to_info = {}
    items = screen_names or user_ids
    while len(items) > 0:
        # users/lookup accepts up to 100 comma-separated items per request
        items_str = ','.join([str(item) for item in items[:100]])
        items = items[100:]
        if screen_names:
            response = make_twitter_request(twitter_api.users.lookup, screen_name=items_str)
        else:  # user_ids
            response = make_twitter_request(twitter_api.users.lookup, user_id=items_str)
        # make_twitter_request returns None on 401/404 errors; skip that batch
        if response is None:
            continue
        for user_info in response:
            if screen_names:
                items_to_info[user_info['screen_name']] = user_info
            else:  # user_ids
                items_to_info[user_info['id']] = user_info
    return items_to_info

# Find reciprocal friends (accounts that follow the user and are followed back)
# and return the five with the most followers
def reciprocal_friends(twitter_api, screen_name=None, user_id=None):
    friends_list_ids, followers_list_ids = get_friends_followers_ids(
        twitter_api, screen_name=screen_name, user_id=user_id,
        friends_limit=5000, followers_limit=5000)
    friends_reciprocal = list(set(friends_list_ids) & set(followers_list_ids))
    user_profiles = {}
    for each in friends_reciprocal:
        user_profiles[each] = get_user_profile(twitter_api, user_ids=[each])[each]
    # Sort the IDs themselves by follower count, so that two friends with the
    # same count cannot overwrite each other in a count-keyed dict
    friends_reciprocal.sort(key=lambda fr: user_profiles[fr]['followers_count'], reverse=True)
    return friends_reciprocal[:5]

# Keep expanding reciprocal friends until the graph has at least 100 nodes
def crawler(twitter_api, screen_name=None, user_id=None):
    root = screen_name or user_id
    rec_friends = reciprocal_friends(twitter_api, screen_name=screen_name, user_id=user_id)
    G.add_edges_from((root, x) for x in rec_friends)
    if len(rec_friends) == 0:
        print("No reciprocal friends")
        return rec_friends
    # Copy the list so that nodes and the queue are independent objects
    nodes = list(rec_friends)
    nxt_qu = list(rec_friends)
    # Stop when the graph is large enough or nothing is left to expand
    while G.number_of_nodes() < 101 and nxt_qu:
        print("Queue Items : ", nxt_qu)
        (queue, nxt_qu) = (nxt_qu, [])
        for q in queue:
            if G.number_of_nodes() >= 101:
                break
            print("ID Entered:", q)
            res = reciprocal_friends(twitter_api, user_id=q)
            G.add_edges_from((q, z) for z in res)
            nxt_qu += res
            nodes += res
    print(nodes)

    # To Plot the graph
    networkx.draw(G)
    plt.savefig("graphresult.png")
    plt.show()

    # Printing the Output
    print("No. of Edges: ", G.number_of_edges())
    print("No. of Nodes: ", G.number_of_nodes())
    print("Diameter : ", networkx.diameter(G))
    print("Average Distance: ", networkx.average_shortest_path_length(G))

    # To write the output into a file
    with open("output.txt", "w") as f:
        f.write("No. of Nodes: " + str(G.number_of_nodes()))
        f.write("\nNo. of Edges: " + str(G.number_of_edges()))
        f.write("\nDiameter: " + str(networkx.diameter(G)))
        f.write("\nAverage Distance: " + str(networkx.average_shortest_path_length(G)))

twitter_api = oauth_login()
crawler(twitter_api, screen_name="POTUS")

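But I keep running into this error, which makes my program very slow:

ID Entered: 60784269
Fetched 5000 total friends ids for 60784269
Fetched 5000 total followers ids for 60784269
Encountered 429 Error (Rate Limit Exceeded)
Retrying in 15 minutes...ZzZ...

Is there a way to get around this and make the code run faster? I have read a few documents, but I still don't have a clear picture. Any help is appreciated.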

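Solution

There is no way to get around the rate limits with the public API.

There is now an API v2, however, which also lets you fetch users and does not count against the same rate limits.

Note that this workaround would only be temporary, since Twitter will at some point remove access to API v1.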

You can ask Twitter for access to the premium/enterprise tier of the API, but you will have to pay for it.
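Within the free limits, the one thing the code can still control is how many requests it spends. The crawler in the question keeps no record of IDs it has already expanded, so an account that appears in several friend lists is fetched again each time. The sketch below shows a breadth-first crawl that expands every ID at most once. It makes no Twitter calls: `crawl`, `fetch_neighbors`, `fake_graph` and `fake_fetch` are hypothetical names used only for illustration, and in the real program `fetch_neighbors` would be something like the question's `reciprocal_friends`.

```python
from collections import deque

def crawl(root, fetch_neighbors, max_nodes=100):
    """Breadth-first crawl that calls fetch_neighbors at most once per ID."""
    seen = {root}          # every ID that is already a node in the graph
    edges = []
    queue = deque([root])  # IDs waiting to be expanded
    calls = 0
    while queue and len(seen) < max_nodes:
        node = queue.popleft()
        calls += 1         # one rate-limited lookup per expanded ID
        for neighbor in fetch_neighbors(node):
            edges.append((node, neighbor))
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return edges, seen, calls

# Stand-in for the API: a small in-memory friendship graph (made-up IDs)
fake_graph = {1: [2, 3], 2: [1, 3], 3: [1, 2, 4], 4: [3]}
fetched = []

def fake_fetch(user_id):
    fetched.append(user_id)
    return fake_graph[user_id]

edges, seen, calls = crawl(1, fake_fetch)
print(calls, sorted(seen))  # 4 [1, 2, 3, 4]
```

Each of the four IDs is looked up exactly once, even though IDs 1, 2 and 3 all appear in each other's lists. This does not lift the limit, it only avoids wasting it.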

See Twitter's rate limit documentation for the details.
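To see why the crawl cannot be fast, it helps to work out the budget. The sketch below assumes the standard v1.1 limit of 15 requests per 15-minute window for `friends/ids` and, separately, for `followers/ids`; check the documentation for the limits that apply to your access level. In the question's code, each expanded ID costs one request to each endpoint, because `count=5000` together with a limit of 5000 stops after the first page. `min_crawl_minutes` is a hypothetical helper, not part of any library.

```python
import math

WINDOW_MINUTES = 15
REQUESTS_PER_WINDOW = 15  # assumed v1.1 limit per endpoint; verify for your account

def min_crawl_minutes(ids_to_expand, requests_per_id=1):
    """Lower bound on the waiting time that one endpoint's limit imposes."""
    total_requests = ids_to_expand * requests_per_id
    windows = math.ceil(total_requests / REQUESTS_PER_WINDOW)
    # The first window can be used immediately; every further one costs a full wait
    return max(windows - 1, 0) * WINDOW_MINUTES

# Reaching 100 nodes at 5 new nodes per ID means expanding at least 20 IDs
print(min_crawl_minutes(20))   # 15
print(min_crawl_minutes(100))  # 90
```

Under these assumptions, even a perfectly efficient crawl has to sleep through at least one 15-minute window, which matches the pause shown in the question's log.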

设置时间 控制面板

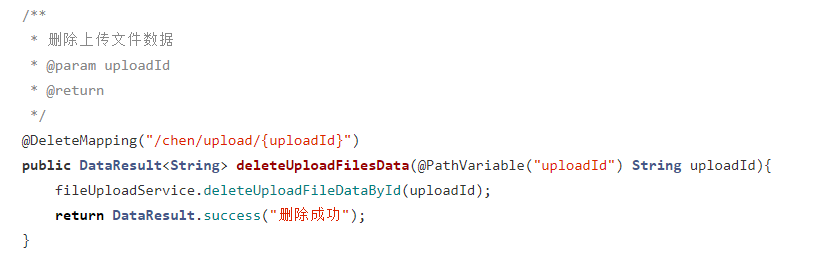

设置时间 控制面板 错误1:Request method ‘DELETE‘ not supported 错误还原:...

错误1:Request method ‘DELETE‘ not supported 错误还原:...