Problem description

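We are developing a document microservice that needs to use Azure as storage for file content. Azure Block Blob seemed like a reasonable choice. The document service's heap is limited to 512 MB (`-Xmx512m`).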
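To confirm that the `-Xmx512m` cap is actually in effect, and to compare heap use between the attempts below, the JVM's own `Runtime` counters can be read from inside the service. This is a minimal JDK-only sketch; the class and method names are hypothetical. `maxMemory()` may report somewhat less than the `-Xmx` value depending on the garbage collector, and the "used" figure is only a snapshot that changes with every collection.

```java
// Hypothetical helper: reports the heap ceiling (-Xmx) and current heap use in MiB.
class HeapCheck {
    private static final long MIB = 1024L * 1024L;

    // Upper bound the heap may grow to (roughly the -Xmx setting).
    static long maxHeapMiB() {
        return Runtime.getRuntime().maxMemory() / MIB;
    }

    // Heap currently occupied: committed heap minus its free part.
    static long usedHeapMiB() {
        Runtime rt = Runtime.getRuntime();
        return (rt.totalMemory() - rt.freeMemory()) / MIB;
    }

    public static void main(String[] args) {
        System.out.println("max heap (MiB): " + maxHeapMiB());
        System.out.println("used heap (MiB): " + usedHeapMiB());
    }
}
```

Logging `usedHeapMiB()` from a progress callback during an upload gives a rough picture of whether memory grows with file size or stays flat.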

I have not managed to upload a file as a stream under this limited heap using `azure-storage-blob:12.10.0-beta.1` (also tested on 12.9.0).

I tried the following approaches:

- Using `BlockBlobClient`, copied and pasted from the documentation:

```java
BlockBlobClient blockBlobClient = blobContainerClient.getBlobClient("file").getBlockBlobClient();
File file = new File("file");
try (InputStream dataStream = new FileInputStream(file)) {
    blockBlobClient.upload(dataStream, file.length(), true /* overwrite file */);
}
```

Result: `java.io.IOException: mark/reset not supported`. The SDK tries to use mark/reset even though `FileInputStream` reports that it does not support it.

- Adding a `BufferedInputStream` to work around the mark/reset problem (per advice):

```java
BlockBlobClient blockBlobClient = blobContainerClient.getBlobClient("file").getBlockBlobClient();
File file = new File("file");
try (InputStream dataStream = new BufferedInputStream(new FileInputStream(file))) {
    blockBlobClient.upload(dataStream, true /* overwrite file */);
}
```

Result: `java.lang.OutOfMemoryError: Java heap space`. I assume the SDK tries to load all 1.17 GB of the file's content into memory.

- Replacing `BlockBlobClient` with `BlobClient` and removing the heap size limit (`-Xmx512m`):

```java
BlobClient blobClient = blobContainerClient.getBlobClient("file");
File file = new File("file");
try (InputStream dataStream = new FileInputStream(file)) {
    blobClient.upload(dataStream, true /* overwrite file */);
}
```

Result: 1.5 GB of heap was used. The entire file content is loaded into memory, plus some buffering on the Reactor side.

- Switching to streaming via `BlobOutputStream`:

```java
long blockSize = DataSize.ofMegabytes(4L).toBytes();
BlockBlobClient blockBlobClient = blobContainerClient.getBlobClient("file").getBlockBlobClient();
// create / erase blob
blockBlobClient.commitBlockList(List.of(), true);
BlockBlobOutputStreamOptions options = new BlockBlobOutputStreamOptions().setParallelTransferOptions(
        new ParallelTransferOptions().setBlockSizeLong(blockSize).setMaxConcurrency(1).setMaxSingleUploadSizeLong(blockSize));
try (InputStream is = new FileInputStream("file")) {
    try (OutputStream os = blockBlobClient.getBlobOutputStream(options)) {
        IOUtils.copy(is, os); // uses 8KB buffer
    }
}
```

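Result: the file is corrupted during upload. The Azure web portal shows 1.09 GB instead of the expected 1.17 GB, and manually downloading the file from the portal confirms that its content was corrupted during upload. Memory footprint dropped significantly, but the file corruption is a big problem.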
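The size gap on its own does not prove corruption: 1.17 GB in decimal units (10^9 bytes) is about 1.09 GiB in binary units (2^30 bytes), so the two numbers can describe the same byte count depending on which unit the portal displays (not confirmed here). Since the downloaded copy was also found to be corrupted, a more reliable check is to compare checksums of the original file and the downloaded copy. This minimal JDK-only sketch reads through a fixed 8 KiB buffer, so it runs fine under the 512 MB heap cap; the class and method names are hypothetical.

```java
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.Locale;

// Hypothetical helper: size units and a streaming MD5 for comparing files.
class UploadCheck {
    // Decimal gigabytes: 1 GB = 10^9 bytes.
    static double toGB(long bytes) {
        return bytes / 1e9;
    }

    // Binary gibibytes: 1 GiB = 2^30 bytes.
    static double toGiB(long bytes) {
        return bytes / (double) (1L << 30);
    }

    // MD5 of a stream, read through a fixed 8 KiB buffer so memory use
    // stays constant regardless of the stream's length.
    static String md5Hex(InputStream in) {
        try {
            MessageDigest md = MessageDigest.getInstance("MD5");
            byte[] buf = new byte[8192];
            int n;
            while ((n = in.read(buf)) != -1) {
                md.update(buf, 0, n);
            }
            StringBuilder hex = new StringBuilder();
            for (byte b : md.digest()) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("MD5 is required on every Java platform", e);
        }
    }

    public static void main(String[] args) throws IOException {
        long size = 1_170_000_000L; // roughly the file size from the question
        System.out.printf(Locale.ROOT, "%.2f GB = %.2f GiB%n", toGB(size), toGiB(size));
        if (args.length == 2) { // usage: UploadCheck <original file> <downloaded copy>
            try (InputStream a = Files.newInputStream(Paths.get(args[0]));
                 InputStream b = Files.newInputStream(Paths.get(args[1]))) {
                System.out.println(md5Hex(a).equals(md5Hex(b)) ? "identical" : "different");
            }
        }
    }
}
```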

Problem: I can't come up with a working upload/download solution that has a small memory footprint.

Any help would be greatly appreciated!
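The results of the first two attempts are consistent with how the JDK streams behave, which can be checked without the Azure SDK. `FileInputStream.markSupported()` returns `false`, and `BufferedInputStream` can honour `mark(readLimit)` only by keeping every byte read since the mark in its internal buffer, which it grows up to `readLimit`. If the SDK marks the stream with a read limit as large as the content (an assumption, not verified against the SDK source), that buffer grows to the full file size, which would explain the `OutOfMemoryError` under `-Xmx512m`. This is a minimal JDK-only sketch; the class and method names are hypothetical.

```java
import java.io.BufferedInputStream;
import java.io.ByteArrayInputStream;
import java.io.File;
import java.io.FileInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;

class MarkResetDemo {
    // Subclass that exposes the protected internal buffer so its growth can be observed.
    static final class InspectableBufferedInputStream extends BufferedInputStream {
        InspectableBufferedInputStream(InputStream in) {
            super(in);
        }

        int bufferLength() {
            return buf.length;
        }
    }

    // FileInputStream does not support mark/reset (the cause of the first error).
    static boolean fileStreamSupportsMark() {
        try {
            File tmp = File.createTempFile("mark-demo", ".bin");
            tmp.deleteOnExit();
            try (InputStream in = new FileInputStream(tmp)) {
                return in.markSupported();
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Marks the start, drains payloadSize bytes, then resets.
    // Returns the internal buffer size if reset worked, or -1 if the mark was
    // invalidated because more than readLimit bytes were read past it.
    static int bufferSizeAfterReset(int payloadSize, int readLimit) {
        try (InspectableBufferedInputStream in =
                 new InspectableBufferedInputStream(new ByteArrayInputStream(new byte[payloadSize]))) {
            in.mark(readLimit);
            byte[] chunk = new byte[8192];
            while (in.read(chunk) != -1) {
                // drain the stream, as an uploader would
            }
            try {
                in.reset();
            } catch (IOException invalidMark) {
                return -1;
            }
            return in.bufferLength();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println("FileInputStream supports mark: " + fileStreamSupportsMark());
        // Large read limit: the buffer must grow to hold the whole 1 MiB payload.
        System.out.println("buffer after reset: " + bufferSizeAfterReset(1 << 20, 2 << 20));
        // Small read limit: the buffer stays small, but reset is no longer possible.
        System.out.println("small read limit: " + bufferSizeAfterReset(1 << 20, 1024));
    }
}
```

In other words, wrapping the stream trades the `mark/reset not supported` error for buffering proportional to the file, so it cannot fix the memory problem by itself.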

Solution

Please try the code below to upload/download large files. I tested it with a .zip file of about 1.1 GB.

Uploading a file:

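```java
import com.azure.storage.blob.BlobClient;
import com.azure.storage.blob.BlobServiceClient;
import com.azure.storage.blob.BlobServiceClientBuilder;
import com.azure.storage.blob.ProgressReceiver;
import com.azure.storage.blob.models.AccessTier;
import com.azure.storage.blob.models.BlobHttpHeaders;
import com.azure.storage.blob.models.BlobRequestConditions;
import com.azure.storage.blob.models.ParallelTransferOptions;
import java.time.Duration;

public static void uploadFilesByChunk() {
    String connString = "<conn str>";
    String containerName = "<container name>";
    String blobName = "UploadOne.zip";
    String filePath = "D:/temp/" + blobName;

    BlobServiceClient client = new BlobServiceClientBuilder().connectionString(connString).buildClient();
    BlobClient blobClient = client.getBlobContainerClient(containerName).getBlobClient(blobName);

    long blockSize = 2 * 1024 * 1024; // 2MB
    // Upload in 2MB blocks, at most 2 requests in flight, logging progress
    ParallelTransferOptions parallelTransferOptions = new ParallelTransferOptions()
            .setBlockSizeLong(blockSize).setMaxConcurrency(2)
            .setProgressReceiver(new ProgressReceiver() {
                @Override
                public void reportProgress(long bytesTransferred) {
                    System.out.println("uploaded:" + bytesTransferred);
                }
            });

    // "application/octet-stream" is the standard MIME type for arbitrary binary data
    BlobHttpHeaders headers = new BlobHttpHeaders().setContentLanguage("en-US").setContentType("application/octet-stream");

    // No metadata, HOT tier, no access conditions (an existing blob is overwritten), 30-minute timeout
    blobClient.uploadFromFile(filePath, parallelTransferOptions, headers, null, AccessTier.HOT,
            new BlobRequestConditions(), Duration.ofMinutes(30));
}
```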
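The settings in the upload code can be sanity-checked with simple arithmetic. A block blob can hold at most 50,000 committed blocks, so the block size caps the largest file that can be uploaded this way. With blocks sent in parallel, the data held in memory at any moment should be on the order of `blockSize × maxConcurrency` rather than the whole file. That memory figure is a rough estimate under this assumption, not a guarantee of what the SDK buffers internally. The class and method names below are hypothetical.

```java
// Hypothetical helper: block count and rough buffer estimate for a chunked upload.
class BlockMath {
    // Maximum number of committed blocks in one Azure block blob.
    static final long MAX_BLOCKS = 50_000L;

    // Number of blocks needed, using ceiling division.
    static long blockCount(long fileSize, long blockSize) {
        return (fileSize + blockSize - 1) / blockSize;
    }

    // True if the file fits within the block limit at this block size.
    static boolean withinBlockLimit(long fileSize, long blockSize) {
        return blockCount(fileSize, blockSize) <= MAX_BLOCKS;
    }

    // Rough estimate: one block buffered per concurrent request.
    static long approxBufferBytes(long blockSize, int maxConcurrency) {
        return blockSize * maxConcurrency;
    }

    public static void main(String[] args) {
        long fileSize = 1_170_000_000L;    // ~1.17 GB, as in the question
        long blockSize = 2L * 1024 * 1024; // 2MB blocks, as in the answer
        int concurrency = 2;               // setMaxConcurrency(2), as in the answer
        System.out.println("blocks: " + blockCount(fileSize, blockSize) + " (limit " + MAX_BLOCKS + ")");
        System.out.println("fits: " + withinBlockLimit(fileSize, blockSize));
        System.out.println("approx. buffered bytes: " + approxBufferBytes(blockSize, concurrency));
    }
}
```

For the 1.17 GB file, 2MB blocks give 558 blocks, far below the limit, while about 4 MB of block data is in flight at a time, which fits comfortably in a 512 MB heap.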

Downloading a file:

```java
import com.azure.storage.blob.BlobClient;
import com.azure.storage.blob.BlobServiceClient;
import com.azure.storage.blob.BlobServiceClientBuilder;
import com.azure.storage.blob.options.BlobDownloadToFileOptions;
import java.time.Duration;

public static void downLoadFilesByChunk() {
    String connString = "<conn str>";
    String containerName = "<container name>";
    String blobName = "UploadOne.zip";
    String filePath = "D:/temp/" + "DownloadOne.zip";

    BlobServiceClient client = new BlobServiceClientBuilder().connectionString(connString).buildClient();
    BlobClient blobClient = client.getBlobContainerClient(containerName).getBlobClient(blobName);

    long blockSize = 2 * 1024 * 1024; // 2MB
    // Download in 2MB chunks, at most 2 requests in flight, logging progress
    com.azure.storage.common.ParallelTransferOptions parallelTransferOptions = new com.azure.storage.common.ParallelTransferOptions()
            .setBlockSizeLong(blockSize).setMaxConcurrency(2)
            .setProgressReceiver(new com.azure.storage.common.ProgressReceiver() {
                @Override
                public void reportProgress(long bytesTransferred) {
                    System.out.println("downloaded:" + bytesTransferred);
                }
            });

    BlobDownloadToFileOptions options = new BlobDownloadToFileOptions(filePath)
            .setParallelTransferOptions(parallelTransferOptions);

    // 30-minute timeout, no extra Context
    blobClient.downloadToFileWithResponse(options, Duration.ofMinutes(30), null);
}
```
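A chunked download can keep memory bounded if each chunk is fetched with an HTTP ranged GET and written at its own offset in the target file. That `downloadToFileWithResponse` works this way is an assumption here, not checked against the SDK source. The JDK-only sketch below only computes the inclusive `Range` header values for a given blob size and chunk size; it does not call the Azure SDK, and the class name is hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: plans the byte ranges for a chunked download.
class RangePlan {
    // Splits [0, blobSize) into inclusive byte ranges of at most chunkSize bytes,
    // formatted as HTTP Range header values ("bytes=first-last").
    static List<String> rangeHeaders(long blobSize, long chunkSize) {
        if (chunkSize <= 0) {
            throw new IllegalArgumentException("chunkSize must be positive");
        }
        List<String> ranges = new ArrayList<>();
        for (long start = 0; start < blobSize; start += chunkSize) {
            long end = Math.min(start + chunkSize, blobSize) - 1; // last byte is inclusive
            ranges.add("bytes=" + start + "-" + end);
        }
        return ranges;
    }

    public static void main(String[] args) {
        System.out.println(rangeHeaders(5, 2)); // prints [bytes=0-1, bytes=2-3, bytes=4-4]
        // With the answer's 2MB chunks, a ~1.17 GB blob needs this many ranged requests:
        System.out.println(rangeHeaders(1_170_000_000L, 2L * 1024 * 1024).size());
    }
}
```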

设置时间 控制面板

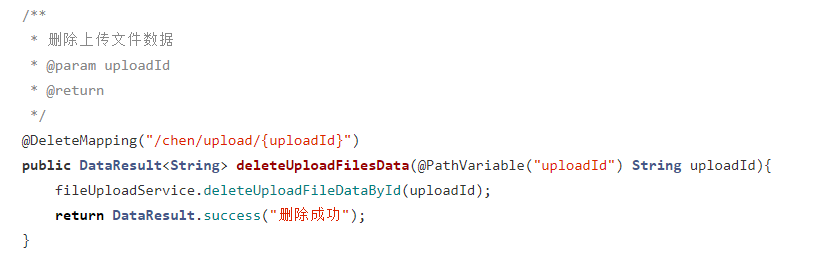

设置时间 控制面板 错误1:Request method ‘DELETE‘ not supported 错误还原:...

错误1:Request method ‘DELETE‘ not supported 错误还原:...