Problem description

The pool default.rgw.buckets.data has 501 GiB stored, but USED shows 3.5 TiB.

    root@ceph-01:~# ceph df
    --- RAW STORAGE ---
    CLASS  SIZE     AVAIL    USED     RAW USED  %RAW USED
    hdd    196 TiB  193 TiB  3.5 TiB  3.6 TiB   1.85
    TOTAL  196 TiB  193 TiB  3.5 TiB  3.6 TiB   1.85

    --- POOLS ---
    POOL                       ID  PGS  STORED   OBJECTS  USED     %USED  MAX AVAIL
    device_health_metrics       1    1  19 KiB        12  56 KiB       0     61 TiB
    .rgw.root                   2   32  2.6 KiB        6  1.1 MiB      0     61 TiB
    default.rgw.log             3   32  168 KiB      210  13 MiB       0     61 TiB
    default.rgw.control         4   32  0 B            8  0 B          0     61 TiB
    default.rgw.meta            5    8  4.8 KiB       11  1.9 MiB      0     61 TiB
    default.rgw.buckets.index   6    8  1.6 GiB      211  4.7 GiB      0     61 TiB
    default.rgw.buckets.data   10  128  501 GiB    5.36M  3.5 TiB   1.90    110 TiB

The default.rgw.buckets.data pool uses erasure coding:

    root@ceph-01:~# ceph osd erasure-code-profile get EC_RGW_HOST
    crush-device-class=hdd
    crush-failure-domain=host
    crush-root=default
    jerasure-per-chunk-alignment=false
    k=6
    m=4
    plugin=jerasure
    technique=reed_sol_van
    w=8

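It would be a great help if someone could explain why the pool uses roughly 7 times the stored size. Versioning is disabled. The Ceph version is 15.2.13 (Octopus, stable).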
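To put the question in numbers, here is a small sketch comparing what erasure coding alone should cost with what ceph df reports. It uses only the figures shown above; the variable names are illustrative.

```python
# Values taken from the ceph df output and the EC profile above.
GIB = 1024 ** 3
TIB = 1024 ** 4

stored = 501 * GIB   # STORED for default.rgw.buckets.data
used = 3.5 * TIB     # USED for default.rgw.buckets.data
k, m = 6, 4          # from the EC_RGW_HOST profile

nominal_overhead = (k + m) / k      # cost of erasure coding alone
observed_overhead = used / stored   # what the pool actually reports

print(f"nominal:  {nominal_overhead:.2f}x")
print(f"observed: {observed_overhead:.2f}x")
```

With k=6 and m=4 the nominal overhead is about 1.67x, so the observed figure of about 7.15x is more than four times what the profile alone would explain.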

Solution

This is related to bluestore_min_alloc_size_hdd=64K (the default in Octopus). I will upgrade to Pacific and change to bluestore_min_alloc_size_hdd=4K, which should help.

Josh Baergen replied on the mailing list:

Hey Arkadiy,

If the OSDs are on HDDs and were created with the default bluestore_min_alloc_size_hdd, which is still 64KiB in Octopus, then in effect data will be allocated from the pool in 640KiB chunks (64KiB * (k+m)). 5.36M objects taking up 501GiB is an average object size of 98KiB which results in a ratio of 6.53:1 allocated:stored, which is pretty close to the 7:1 observed.

If my assumption about your configuration is correct, then the only way to fix this is to adjust bluestore_min_alloc_size_hdd and recreate all your OSDs, which will take a while...

Josh
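The arithmetic in the reply above can be reproduced with a short sketch. This is a simplified model, not Ceph's actual allocator: it assumes every object has the mean size, that each object is split into k data chunks plus m coding chunks of equal size, and that each chunk is rounded up to a whole number of allocation units. The function name allocated_per_object is hypothetical.

```python
import math

KIB = 1024
GIB = 1024 ** 3

def allocated_per_object(object_size, k, m, min_alloc_size):
    """Bytes allocated on disk for one object in a k+m erasure-coded pool.

    Simplified model: the object is split into k data chunks, m coding
    chunks of the same size are added, and each chunk is rounded up to a
    whole number of BlueStore allocation units.
    """
    chunk_size = object_size / k
    units_per_chunk = max(1, math.ceil(chunk_size / min_alloc_size))
    return (k + m) * units_per_chunk * min_alloc_size

# Figures from the ceph df output and EC profile in the question.
avg_object = 501 * GIB / 5.36e6
k, m = 6, 4

ratio_64k = allocated_per_object(avg_object, k, m, 64 * KIB) / avg_object
ratio_4k = allocated_per_object(avg_object, k, m, 4 * KIB) / avg_object

print(f"average object size: {avg_object / KIB:.0f} KiB")
print(f"allocated:stored at 64 KiB: {ratio_64k:.2f}")
print(f"allocated:stored at 4 KiB:  {ratio_4k:.2f}")
```

Under these assumptions the 64 KiB allocation unit gives about 6.53:1, matching the reply, while a 4 KiB unit gives about 2.04:1, much closer to the nominal (k+m)/k of 1.67. Real pools hold objects of varying sizes, so the measured ratio will differ somewhat from this estimate.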

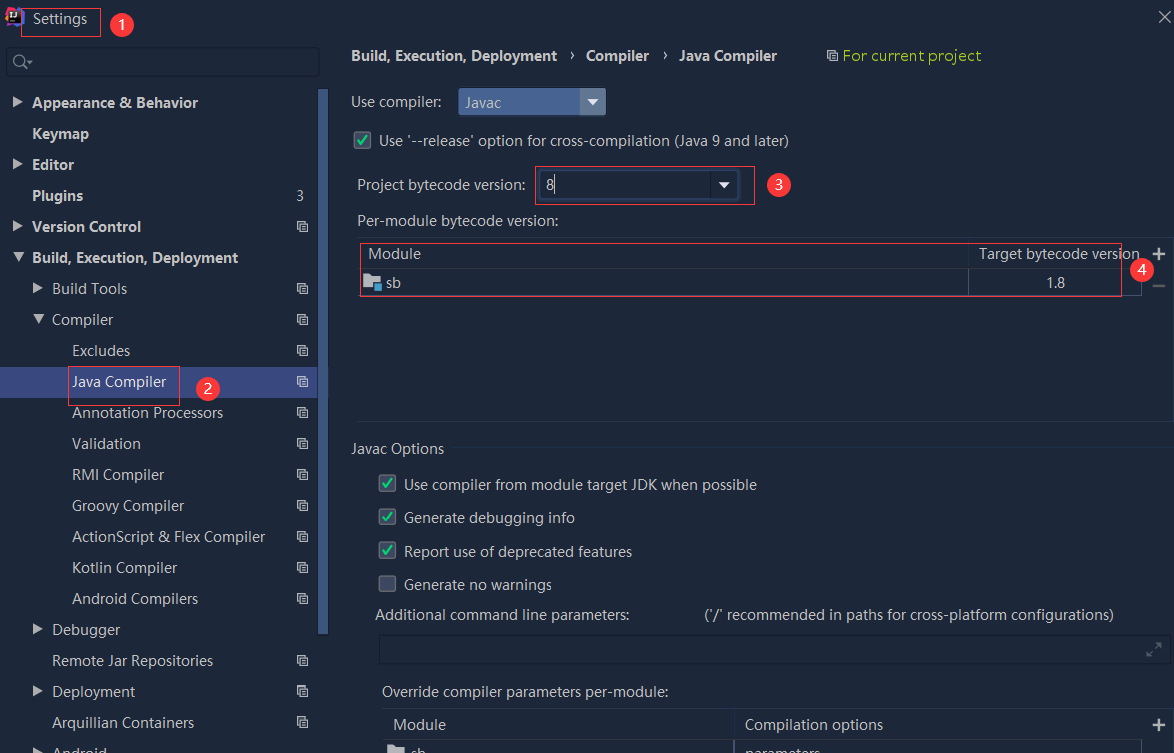

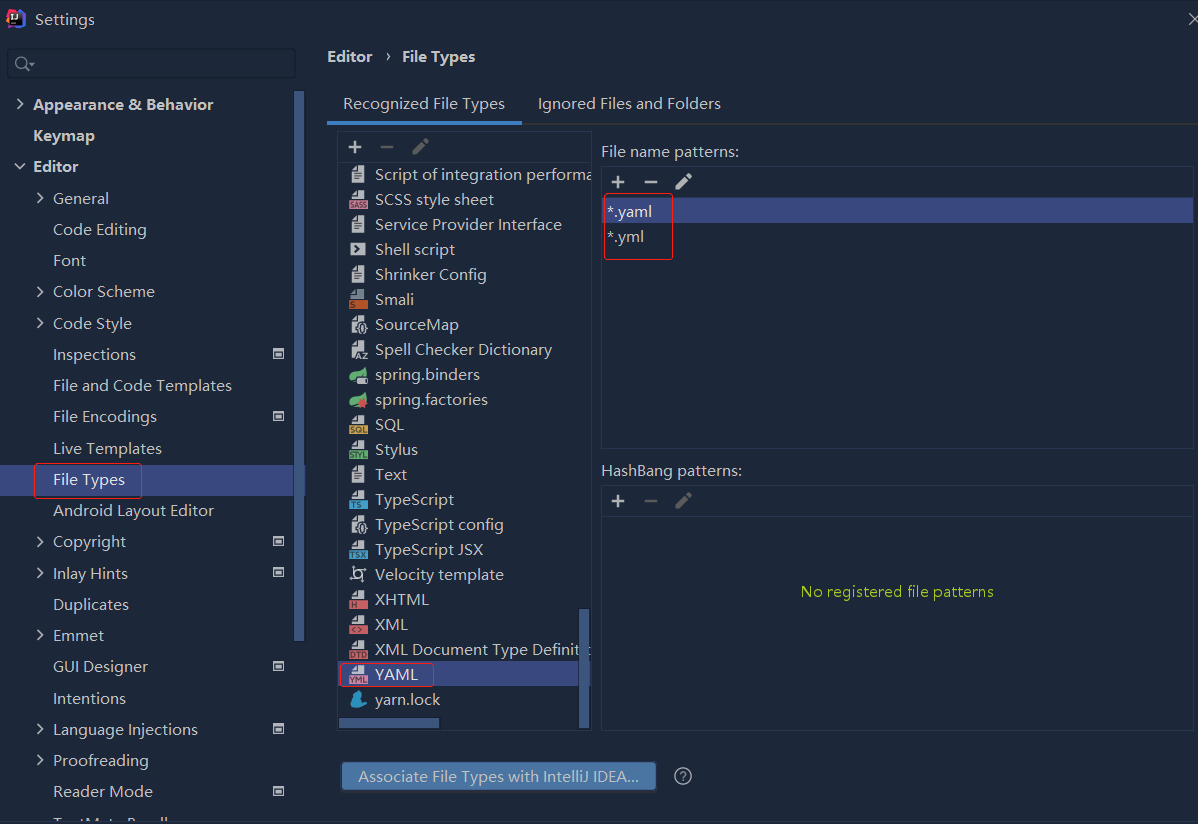

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

依赖报错 idea导入项目后依赖报错,解决方案:https://blog....

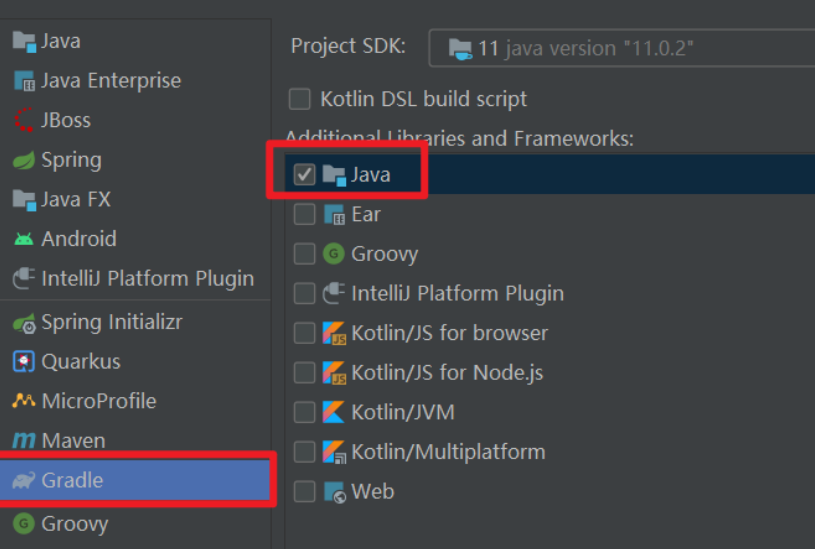

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...

错误1:gradle项目控制台输出为乱码 # 解决方案:https://bl...