
前言

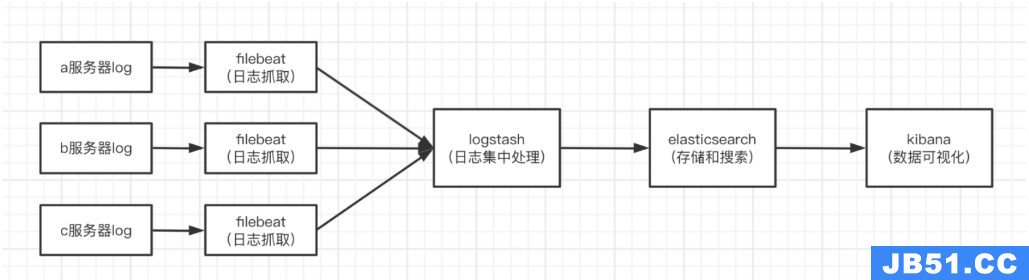

为了实现分布式日志数据统一收集,实现集中式查询和管理 故障排查 安全信息和事件管理

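I. What is ELK?

ELK is an acronym for three open-source projects: Elasticsearch, Logstash, and Kibana.

• Elasticsearch is a search and analytics engine.

• Logstash is a server-side data processing pipeline that ingests data from multiple sources at the same time, transforms it, and then sends it to a store such as Elasticsearch.

• Kibana lets users visualize the data in Elasticsearch with graphs and charts.

In addition, Filebeat is installed on the web (application) servers to collect their logs and forward them to Logstash.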

II. Installation steps

Make sure all component versions match! (This tutorial uses version 7.13.2.)

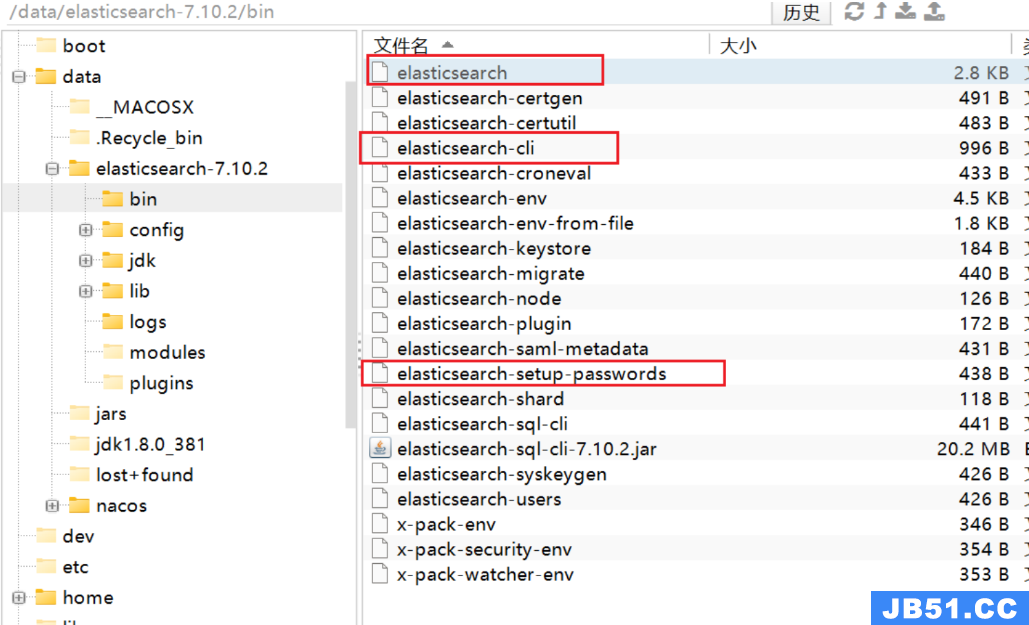

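1. Installing and configuring ES (Elasticsearch)

I originally installed 7.10.2, which is why the screenshots show 7.10.2. Mismatched versions cause version-conflict problems, so everything was later switched to 7.13.2.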

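Create the es group: groupadd es

Create the es user in the es group with useradd es -g es, then set its password with passwd es (useradd -p expects an already-encrypted password, so -p es would not make "es" the login password).

Switch to the es user (Elasticsearch refuses to run as root):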

[root@iZj6c49h0dw85252u6oxu0Z ~]# su es

Download the package, or upload it to the server:

[es@iZj6c49h0dw85252u6oxu0Z data]$ wget https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-7.13.2-linux-x86_64.tar.gz

Extract it:

[es@iZj6c49h0dw85252u6oxu0Z data]$ tar -zxvf elasticsearch-7.13.2-linux-x86_64.tar.gz

Give the es user ownership of the directory (run as root): chown -R es:es /data/elasticsearch-7.13.2

Start ES (-d runs it in the background as a daemon):

[es@iZj6c49h0dw85252u6oxu0Z es]$ cd elasticsearch-7.13.2/

[es@iZj6c49h0dw85252u6oxu0Z elasticsearch-7.13.2]$ bin/elasticsearch -d

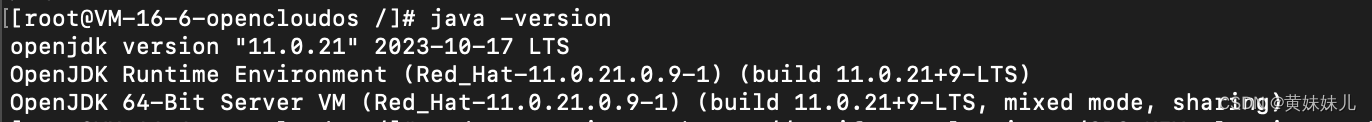

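Edit three configuration files and add the following lines to each, which point them at the JDK bundled with Elasticsearch: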

export JAVA_HOME=/data/elasticsearch-7.13.2/jdk

export PATH=$JAVA_HOME/bin:$PATH

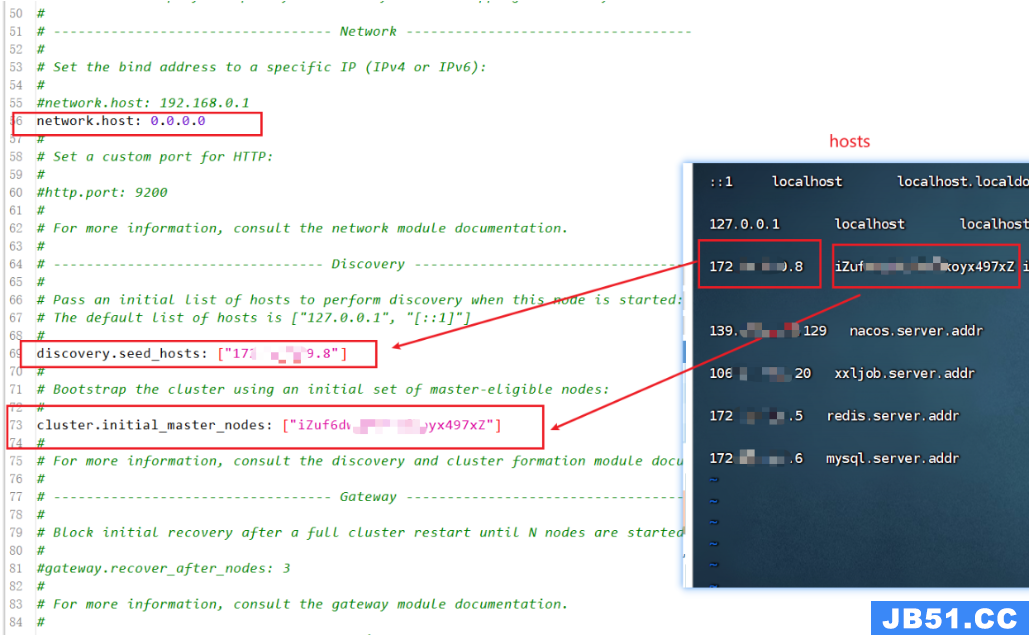

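Adjust the configuration files in /data/elasticsearch-7.13.2/config.

Verify on the server: curl http://<internal-ip>:9200

If it returns a JSON document with the node name, cluster name, and version number, ES has started successfully with your configuration!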
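The same check can be scripted instead of reading the curl output by eye. This is a minimal sketch using only Python's standard library; it assumes ES answers plain HTTP without authentication (as in this tutorial) and reports its version under `version.number` in the root response. The function names are hypothetical.

```python
import json
import urllib.request

EXPECTED_VERSION = "7.13.2"  # every ELK component in this tutorial uses 7.13.2


def fetch_es_root(url: str, timeout: float = 5.0) -> str:
    """Same request as `curl http://<internal-ip>:9200`; raises URLError if ES is unreachable."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8")


def es_version(body: str) -> str:
    """Extract version.number from the JSON document returned by GET /."""
    return json.loads(body)["version"]["number"]


def version_matches(body: str, expected: str = EXPECTED_VERSION) -> bool:
    # A mismatch here is the kind of version conflict warned about at the start of section II.
    return es_version(body) == expected
```

Usage on the server: `version_matches(fetch_es_root("http://<internal-ip>:9200"))`.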

To control how much memory ES uses, edit /data/elasticsearch-7.13.2/config/jvm.options and set the heap size:

-Xms4g

-Xmx4g
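The two values should normally be identical: Elastic recommends setting -Xms and -Xmx to the same size, no more than half of the machine's RAM, and in 7.x it suggests putting such overrides in a file under config/jvm.options.d/ rather than editing jvm.options itself. The sketch below checks the first rule; the helper names are hypothetical and the parser is deliberately simple (it ignores version-prefixed lines such as `8-13:...`).

```python
import re

# Matches lines like "-Xms4g" or "-Xmx4096m"; comment lines never match.
HEAP_FLAG = re.compile(r"^-Xm([sx])(\d+)([kKmMgG]?)$")
UNITS = {"": 1, "k": 1024, "m": 1024**2, "g": 1024**3}


def heap_bytes(jvm_options: str) -> dict:
    """Return {'s': bytes, 'x': bytes} for the -Xms / -Xmx lines in jvm.options text."""
    found = {}
    for line in jvm_options.splitlines():
        match = HEAP_FLAG.match(line.strip())
        if match:
            flag, number, unit = match.groups()
            found[flag] = int(number) * UNITS[unit.lower()]
    return found


def heap_is_consistent(jvm_options: str) -> bool:
    # Both flags must be present and set to the same size.
    heap = heap_bytes(jvm_options)
    return "s" in heap and "x" in heap and heap["s"] == heap["x"]
```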

Problems encountered during installation:

Error 1

[root@izuf672oio5mc4fbyj0s0jz ~]# curl http://47.244.38.173:9200/

curl: (7) Failed connect to 47.244.38.173:9200; Connection refused

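Fix: in elasticsearch.yml, uncomment network.host and set it to network.host: 0.0.0.0, add an inbound rule for the ES port to the Alibaba Cloud security group, and start ES again. Note that binding to a non-loopback address makes ES enforce its bootstrap checks, which is typically what triggers Error 2 below.

[es@izuf672oio5mc4fbyj0s0jz elasticsearch-7.13.2]$ vi config/elasticsearch.yml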
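Before editing any config, it helps to know which kind of failure you have. A hedged sketch: the helper below (hypothetical name `probe`) tries a plain TCP connection. "refused" usually means the host answered but nothing listens on that address and port, for example ES still bound to loopback only; "timeout" usually means packets are being dropped, as a firewall or a missing security-group rule would do.

```python
import socket


def probe(host: str, port: int, timeout: float = 3.0) -> str:
    """Classify a TCP connect attempt as 'open', 'refused', 'timeout', or 'error: ...'."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return "open"
    except ConnectionRefusedError:
        # Host reachable, but no listener on that address/port (e.g. ES bound to 127.0.0.1).
        return "refused"
    except socket.timeout:
        # No answer at all: typically a firewall or cloud security-group rule dropping packets.
        return "timeout"
    except OSError as exc:
        return f"error: {exc}"
```

Usage: `probe("47.244.38.173", 9200)` from the machine where curl failed.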

Error 2

[1] bootstrap checks failed

[1]: max file descriptors [65535] for elasticsearch process is too low, increase to at least [65536]

As root, edit /etc/security/limits.conf:

su root

vim /etc/security/limits.conf

1. Add the following:

root soft nofile 65535

root hard nofile 65535

* soft nofile 65536

* hard nofile 65536

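2. Edit /etc/sysctl.conf

Append vm.max_map_count=655360 at the end. This covers the related bootstrap check "max virtual memory areas vm.max_map_count ... is too low", which requires at least 262144.

Then run sysctl -p to apply the sysctl change. The limits.conf change only applies to new login sessions, so log in again as the es user before restarting ES.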
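To confirm both fixes took effect, the sketch below reads the values the ES process would see. It is a minimal example under stated assumptions: Linux, run as the es user in a fresh login session; the 65536 threshold is taken from the error message above, 262144 is the documented minimum for vm.max_map_count, and the function names are hypothetical.

```python
import resource
from pathlib import Path

MIN_NOFILE = 65536      # from the error message quoted above
MIN_MAP_COUNT = 262144  # minimum Elasticsearch requires for vm.max_map_count


def bootstrap_problems(nofile_soft: int, max_map_count: int) -> list:
    """Return a description of each bootstrap check these two values would fail."""
    problems = []
    if nofile_soft != resource.RLIM_INFINITY and nofile_soft < MIN_NOFILE:
        problems.append(f"max file descriptors [{nofile_soft}] is too low, need >= {MIN_NOFILE}")
    if max_map_count < MIN_MAP_COUNT:
        problems.append(f"vm.max_map_count [{max_map_count}] is too low, need >= {MIN_MAP_COUNT}")
    return problems


def current_values() -> tuple:
    """Read the soft nofile limit of this process and the kernel's vm.max_map_count."""
    soft, _hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    max_map_count = int(Path("/proc/sys/vm/max_map_count").read_text().strip())
    return soft, max_map_count
```

Usage: `print(bootstrap_problems(*current_values()))` should print `[]` once both changes are active.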

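2. Installing and configuring Logstash

Upload and extract the package:

tar -zxvf logstash-7.13.2-linux-x86_64.tar.gz

After extracting, go to the config directory and copy logstash-sample.conf to logstash.conf so it is easy to reference at startup:

cp /data/logstash-7.13.2/config/logstash-sample.conf /data/logstash-7.13.2/config/logstash.conf

Put the following content into config/logstash.conf: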

```conf
# Sample Logstash configuration for creating a simple
# Beats -> Logstash -> Elasticsearch pipeline.

input {
  beats {
    port => 5044
  }
}

# log.file.path=/data/jars/logs/charge-server/charge-server-2023-08-29.5.log
# index => "%{[@metadata][beat]}-%{+YYYY.MM.dd}"
# index => "%{[fields][log_type]}-%{+YYYY.MM.dd}"

output {
  elasticsearch {
    hosts => ["http://localhost:9200"]
    # One index per service per day, e.g. base-server-2023.08.29
    index => "%{[fields][log_type]}-%{+YYYY.MM.dd}"
    #user => "elastic"
    #password => "changeme"
  }
}
```
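The index setting combines the `fields.log_type` value sent by Filebeat with the event date. A small Python sketch of that naming, assuming the documented Logstash behavior that `%{+YYYY.MM.dd}` is rendered from the event's @timestamp in UTC; `logstash_index` and `CST` are hypothetical names used only for illustration.

```python
from datetime import datetime, timedelta, timezone

CST = timezone(timedelta(hours=8))  # example local zone (China Standard Time)


def logstash_index(log_type: str, timestamp: datetime) -> str:
    """Mimic index => "%{[fields][log_type]}-%{+YYYY.MM.dd}" for a single event."""
    # The date part comes from @timestamp, which Logstash keeps in UTC.
    utc = timestamp.astimezone(timezone.utc)
    return f"{log_type}-{utc:%Y.%m.%d}"
```

One consequence worth knowing: on servers running at UTC+8, a log line written at 07:00 local time on 2023-08-29 still lands in `base-server-2023.08.28`, because the daily index only rolls over at 08:00 local time.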

Start it in the background on the server:

nohup bin/logstash -f config/logstash.conf > /dev/null 2>&1 &

Or start it in the foreground to watch the startup log in real time:

bin/logstash -f config/logstash.conf

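3. Installing and configuring Kibana

Upload and extract the package:

tar -zxvf kibana-7.13.2-linux-x86_64.tar.gz

Edit config/kibana.yml.

This setup uses the default port 5601. server.host must not be localhost, otherwise Kibana cannot be reached from outside. The i18n.locale setting near the end of the file can also switch the UI to Chinese.

Like ES, Kibana refuses to start as root by default; either start it as a non-root user or pass --allow-root, as in the startup commands below.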

```yaml
# Kibana is served by a back end server. This setting specifies the port to use.
#server.port: 5601

# Specifies the address to which the Kibana server will bind. IP addresses and host names are both valid values.
# The default is 'localhost', which usually means remote machines will not be able to connect.
# To allow connections from remote users, set this parameter to a non-loopback address.
server.host: "0.0.0.0"

# Specifies locale to be used for all localizable strings, dates and number formats.
# Supported languages are the following: English - en, by default, Chinese - zh-CN.
i18n.locale: "zh-CN"

# Elasticsearch hosts
elasticsearch.hosts: ["http://192.xxx.x.211:9200"]
```
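The localhost pitfall described above can be checked mechanically. This is a minimal sketch with hypothetical function names and a naive line-based reading of kibana.yml (not a full YAML parser); it treats a missing server.host as 'localhost', the default stated in the file's own comments.

```python
import ipaddress


def kibana_server_host(kibana_yml: str) -> str:
    """Return the effective server.host from kibana.yml text ('localhost' if unset)."""
    host = "localhost"  # Kibana's default, per the comment in kibana.yml
    for line in kibana_yml.splitlines():
        stripped = line.strip()
        if stripped.startswith("server.host:"):  # commented-out lines start with '#'
            value = stripped.split(":", 1)[1].split(" #", 1)[0].strip()
            host = value.strip("'\"")
    return host


def reachable_from_other_hosts(host: str) -> bool:
    """False if Kibana would bind to a loopback address only."""
    if host == "localhost":
        return False
    try:
        return not ipaddress.ip_address(host).is_loopback
    except ValueError:
        # A hostname other than localhost: assume it resolves to a non-loopback address.
        return True
```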

cd kibana-7.13.2-linux-x86_64/bin/

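Start it (the nohup form keeps Kibana running after you log out):

./kibana --allow-root &

nohup ./kibana --allow-root &

Related path (config/node.options holds Kibana's Node.js runtime options; log output itself is configured in kibana.yml):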

/data/ELK/kibana-7.13.2-linux-x86_64/config/node.options

4. Installing and configuring Filebeat

Upload and extract the package:

tar -zxvf filebeat-7.13.2-linux-x86_64.tar.gz

Edit filebeat-7.13.2-linux-x86_64/filebeat.yml:

```yaml
filebeat.inputs:
- type: log
  enabled: true
  paths:
    # Path of the service's log files
    - /data/jars/logs/base-server/*.log
    #- c:\programdata\elasticsearch\logs\*
  fields:
    log_type: "base-server"

# For more services, append one input block per service
- type: log
  enabled: true
  paths:
    - /data/jars/logs/finance-server/*.log
    #- c:\programdata\elasticsearch\logs\*
  fields:
    log_type: "finance-server"

- type: filestream
  # Change to true to enable this input configuration.
  enabled: false
  # Paths that should be crawled and fetched. Glob based paths.
  paths:
    - /var/log/*.log
    #- c:\programdata\elasticsearch\logs\*

# ============================== Filebeat modules ==============================

filebeat.config.modules:
  # Glob pattern for configuration loading
  path: ${path.config}/modules.d/*.yml
  # Set to true to enable config reloading
  reload.enabled: false
  # Period on which files under path should be checked for changes
  #reload.period: 10s

# ======================= Elasticsearch template setting =======================

setup.template.settings:
  index.number_of_shards: 1
  #index.codec: best_compression
  #_source.enabled: false

# =================================== Kibana ===================================

# Starting with Beats version 6.0.0, the dashboards are loaded via the Kibana API.
# This requires a Kibana endpoint configuration.
setup.kibana:
  # Kibana Host
  # Scheme and port can be left out and will be set to the default (http and 5601)
  # In case you specify an additional path, the scheme is required: http://localhost:5601/path
  # IPv6 addresses should always be defined as: https://[2001:db8::1]:5601
  #host: "localhost:5601"

  # Kibana Space ID
  # ID of the Kibana Space into which the dashboards should be loaded. By default,
  # the Default Space will be used.
  #space.id:

# ------------------------------ Logstash Output -------------------------------
# Logs are pushed to Logstash
output.logstash:
  # The Logstash hosts
  # IP of the server where Logstash is installed
  hosts: ["127.0.0.1:5044"]

  # Optional SSL. By default is off.
  # List of root certificates for HTTPS server verifications
  #ssl.certificate_authorities: ["/etc/pki/root/ca.pem"]

  # Certificate for SSL client authentication
  #ssl.certificate: "/etc/pki/client/cert.pem"

  # Client Certificate Key
  #ssl.key: "/etc/pki/client/cert.key"

# ================================= Processors =================================

processors:
  - add_host_metadata:
      when.not.contains.tags: forwarded
  - add_cloud_metadata: ~
  - add_docker_metadata: ~
  - add_kubernetes_metadata: ~
```
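The inputs above decide which `fields.log_type` each log file gets, and the Logstash `index` setting then turns that into the index name. A small Python sketch of the first step, assuming only the two enabled `log` inputs count (the `filestream` input is disabled) and assuming a single `*` in a Filebeat path does not cross a `/`, as with Go's filepath.Glob. `INPUTS` and `log_type_for` are hypothetical names.

```python
from pathlib import PurePosixPath
from typing import Optional

# Hypothetical mirror of the enabled inputs in filebeat.yml: (glob, fields.log_type)
INPUTS = [
    ("/data/jars/logs/base-server/*.log", "base-server"),
    ("/data/jars/logs/finance-server/*.log", "finance-server"),
]


def log_type_for(path: str) -> Optional[str]:
    """Return the log_type an enabled input would attach to this file, or None."""
    candidate = PurePosixPath(path)
    for pattern, log_type in INPUTS:
        # With an absolute pattern, match() requires the whole path to match,
        # and '*' does not match across '/' separators.
        if candidate.match(pattern):
            return log_type
    return None
```

A file such as `/data/jars/logs/base-server/archive/app.log` is therefore not picked up; it would need its own path entry.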

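Start it in the background (-e sends Filebeat's own log to stderr, -c selects the config file):

nohup ./filebeat -e -c filebeat.yml > /dev/null 2>&1 &

or in the foreground:

./filebeat -e -c filebeat.yml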

Problem encountered:

Filebeat exited on its own at random times, or as soon as the SSH connection was closed. Fix:

1. Start it in the background:

`nohup ./filebeat -e -c filebeat.yml > /dev/null 2>&1 &`

2. Do not close the terminal window directly; run exit first,

3. and only then close the SSH terminal.
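Why this works: when an SSH session drops, bash receives SIGHUP and resends it to its jobs, and a job that does not ignore it is terminated; nohup makes the command ignore SIGHUP. Typing exit first helps because, by default, bash does not send SIGHUP to background jobs on a normal exit unless the huponexit option is set. A related approach, shown here as a Python sketch rather than the author's method, is to start the process in a new session (what `setsid` does) so it has no controlling terminal to lose. `launch_detached` is a hypothetical helper.

```python
import subprocess
from typing import Sequence


def launch_detached(cmd: Sequence[str], log_path: str = "/dev/null") -> subprocess.Popen:
    """Start cmd in a new session with stdin closed and output appended to log_path."""
    log = open(log_path, "ab")
    try:
        return subprocess.Popen(
            list(cmd),
            stdin=subprocess.DEVNULL,
            stdout=log,
            stderr=subprocess.STDOUT,
            # setsid() in the child: no controlling terminal, so no SIGHUP when the SSH session ends
            start_new_session=True,
        )
    finally:
        log.close()  # the child keeps its own copy of the file descriptor
```

Usage from the Filebeat directory: `launch_detached(["./filebeat", "-e", "-c", "filebeat.yml"])`.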

Finally, open http://<ip>:5601 in a browser to access Kibana. The installation is complete!

Summary

That's all for this article. It only covers installing ELK; ELK's other powerful features are left for further study.
