http://www.centoscn.com/CentosServer/cluster/2016/0515/7239.html

http://www.open-open.com/lib/view/open1451484695073.html

- OpenStack: 恒天云 3.4
- Operating system: CentOS 7.0
- flannel: 0.5.5
- Kubernetes: 1.2.0
- etcd: 2.2.2
- Docker: 1.18.0
- Cluster information:

| Role | Hostname | IP Address |
|---|---|---|
| Master | master | 10.0.222.2 |
| Node | node1 | 10.0.222.3 |
| Node | node2 | 10.0.222.4 |
| Node | node3 | 10.0.222.5 |
| Node | node4 | 10.0.222.6 |

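The master runs four components: kube-apiserver, kube-scheduler, kube-controller-manager, and etcd.

Each node runs two components: kube-proxy and kubelet.

1. kube-apiserver: runs on the master node and serves the Kubernetes API that kubectl and the other components talk to.

2. kube-scheduler: runs on the master node and handles resource scheduling, that is, it decides on which node a pod is created.

3. kube-controller-manager: runs on the master node and bundles the ReplicationManager, EndpointsController, NamespaceController, NodeController, and other controllers.

4. etcd: a distributed key-value store that holds the resource object information shared by the whole cluster.

5. kubelet: runs on each node and maintains the pods running on that particular host.

6. kube-proxy: runs on each node and acts as a service proxy.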

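II. Installation steps

Preparation

Disable the firewall

To avoid conflicts with the iptables rules managed by Docker, stop and disable the firewall on the nodes:

    $ systemctl stop firewalld
    $ systemctl disable firewalld

Install NTP

To keep the clocks of all servers in sync, install NTP on every server:

    $ yum -y install ntp
    $ systemctl start ntpd
    $ systemctl enable ntpd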

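Deploy the master

Install etcd and Kubernetes

    $ yum -y install etcd kubernetes

Configure etcd

Edit the etcd configuration file /etc/etcd/etcd.conf. The nodes connect to etcd at http://10.0.222.2:2379 in the later steps, so etcd has to accept client connections on that address.

Configure the network in etcd

Store the network configuration in etcd; the flannel service on each node pulls this configuration:

    $ etcdctl mk /coreos.com/network/config '{"Network":"172.17.0.0/16"}'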

Configure the Kubernetes API server

    KUBE_API_ADDRESS="--address=0.0.0.0"
    KUBE_API_PORT="--port=8080"
    KUBELET_PORT="--kubelet_port=10250"
    KUBE_ETCD_SERVERS="--etcd_servers=http://10.0.222.2:2379"
    KUBE_SERVICE_ADDRESSES="--portal_net=10.254.0.0/16"
    KUBE_ADMISSION_CONTROL="--admission_control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ResourceQuota"
    KUBE_API_ARGS=""

Note that ServiceAccount, which KUBE_ADMISSION_CONTROL contains by default, has to be removed; otherwise the API server reports an error when it starts.

Start the services

Next, start the following services on the master:

    $ for SERVICES in etcd kube-apiserver kube-controller-manager kube-scheduler; do
        systemctl restart $SERVICES
        systemctl enable $SERVICES
        systemctl status $SERVICES
    done

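Deploy the nodes

Install Kubernetes and Flannel

Configure Flannel

Edit the Flannel configuration file /etc/sysconfig/flanneld:

    FLANNEL_ETCD="http://10.0.222.2:2379"
    FLANNEL_ETCD_KEY="/coreos.com/network"
    FLANNEL_OPTIONS="--iface=ens3"

Note that the iface value in FLANNEL_OPTIONS must be the network interface of your own server. It depends on the server and its configuration, so it will likely differ from mine.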
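If you are unsure which interface name to put after --iface, one way to list the candidates is a few lines of Python. This is only a sketch: the helper name interface_names is made up for this example, and it relies on socket.if_nameindex() from the standard library. Which of the listed interfaces actually carries the 10.0.222.x address still has to be checked by hand, for instance with ip addr.

```python
import socket


def interface_names():
    """Return the names of the network interfaces known to the kernel."""
    # socket.if_nameindex() returns (index, name) pairs for every interface.
    return [name for _index, name in socket.if_nameindex()]


# Print one candidate value for --iface per line.
for name in interface_names():
    print(name)
```

Pick the name that belongs to the network the master is reachable on and use it as the --iface value.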

Start Flannel

Upload the network configuration

Create a file named config.json in the current directory with the following content:

    {
      "Network": "172.17.0.0/16",
      "SubnetLen": 24,
      "Backend": {
        "Type": "vxlan",
        "VNI": 7890
      }
    }
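To get a feel for what Network and SubnetLen mean, the sketch below splits the Network range into subnets of SubnetLen bits, which is the size of the subnet flannel leases to each host. It uses only Python's ipaddress module; the helper subnet_of is a name invented for this illustration, and the pod IP is the one that appears in the kubectl describe output further down.

```python
import ipaddress

network = ipaddress.ip_network("172.17.0.0/16")  # "Network" in config.json
subnet_len = 24                                  # "SubnetLen" in config.json

# Every host running flannel gets one of these subnets for its pods.
host_subnets = list(network.subnets(new_prefix=subnet_len))


def subnet_of(ip):
    """Return the per-host subnet that contains the given pod IP."""
    address = ipaddress.ip_address(ip)
    return next(subnet for subnet in host_subnets if address in subnet)


print(len(host_subnets))         # 256 subnets are available
print(subnet_of("172.17.67.2"))  # 172.17.67.0/24
```

With these values the cluster has room for 256 hosts, each with a /24 of pod addresses.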

Then upload the configuration to the etcd server:

    $ curl -L http://10.0.222.2:2379/v2/keys/coreos.com/network/config -XPUT --data-urlencode value@config.json
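For readers who prefer to script this step, the following sketch builds the same request in Python without sending it. It assumes, as the curl call does, that the etcd v2 keys API takes the new value as a form-encoded value field; build_put_request is a hypothetical helper, not part of etcd or flannel.

```python
import json
import urllib.parse
import urllib.request

ETCD_KEY_URL = "http://10.0.222.2:2379/v2/keys/coreos.com/network/config"

# Same content as config.json.
flannel_config = {
    "Network": "172.17.0.0/16",
    "SubnetLen": 24,
    "Backend": {"Type": "vxlan", "VNI": 7890},
}


def build_put_request(url, config):
    """Build (but do not send) a PUT request that stores config under url."""
    # Equivalent of curl's --data-urlencode value@config.json
    body = urllib.parse.urlencode({"value": json.dumps(config)}).encode("ascii")
    return urllib.request.Request(
        url,
        data=body,
        method="PUT",
        headers={"Content-Type": "application/x-www-form-urlencoded"},
    )


request = build_put_request(ETCD_KEY_URL, flannel_config)
print(request.get_method(), request.full_url)
# To actually send it: urllib.request.urlopen(request)
```

The request is only constructed here, so the snippet can be run safely on a machine that cannot reach the etcd server.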

Modify the Kubernetes configuration

Edit the default Kubernetes configuration file /etc/kubernetes/config.

Modify the kubelet configuration

Edit the kubelet service configuration file /etc/kubernetes/kubelet:

    KUBELET_ADDRESS="--address=0.0.0.0"
    KUBELET_PORT="--port=10250"
    # set this to the node's own hostname
    KUBELET_HOSTNAME="--hostname_override=node1"
    KUBELET_API_SERVER="--api_servers=http://10.0.222.2:8080"
    KUBELET_ARGS=""

On the other nodes, only KUBELET_HOSTNAME has to change, to that node's hostname.
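Since the file is identical on every node apart from that one line, it can be generated from a template. The sketch below is one possible way to do so; the template text is the configuration shown above, the hostnames come from the cluster table, and render_kubelet_config is a name chosen for this example.

```python
KUBELET_TEMPLATE = """\
KUBELET_ADDRESS="--address=0.0.0.0"
KUBELET_PORT="--port=10250"
KUBELET_HOSTNAME="--hostname_override={hostname}"
KUBELET_API_SERVER="--api_servers=http://10.0.222.2:8080"
KUBELET_ARGS=""
"""

NODES = ["node1", "node2", "node3", "node4"]  # hostnames from the cluster table


def render_kubelet_config(hostname):
    """Return the contents of /etc/kubernetes/kubelet for one node."""
    return KUBELET_TEMPLATE.format(hostname=hostname)


for node in NODES:
    print(f"# /etc/kubernetes/kubelet on {node}")
    print(render_kubelet_config(node))
```

Copying the rendered text to each node is left out on purpose; how files are distributed depends on your environment.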

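Start the node services

Create a snapshot of this node and install the other nodes from it (change the hostname and KUBELET_HOSTNAME accordingly).

View the cluster nodes

Once deployment is complete, use kubectl to check the state of the whole cluster, for example with kubectl get nodes.

Following the diagram below, we deploy a simple nginx application that serves static content:

First, we use a replication controller to start an nginx Pod with 2 replicas. Then we put Services in front of it: one Service that is reachable only from inside the cluster, and one that is reachable from machines outside the cluster.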

1. Deploy the nginx pod and replication controller

The replication controller is defined in nginx-rc.yaml:

    # cat nginx-rc.yaml
    apiVersion: v1
    kind: ReplicationController
    metadata:
      name: nginx-controller
    spec:
      replicas: 2
      selector:
        name: nginx
      template:
        metadata:
          labels:
            name: nginx
        spec:
          containers:
            - name: nginx
              image: nginx
              ports:
                - containerPort: 80

This defines an nginx replication controller with 2 replicas that uses the nginx Docker image.
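The replication controller finds its pods through the selector: a pod belongs to it when the pod's labels contain every key/value pair of the selector. The sketch below illustrates that matching rule in a simplified form; selector_matches is an illustrative function, not Kubernetes code, and the redis labels are a made-up counterexample.

```python
def selector_matches(selector, labels):
    """Return True when every key/value pair in selector also appears in labels."""
    return all(labels.get(key) == value for key, value in selector.items())


rc_selector = {"name": "nginx"}       # spec.selector in nginx-rc.yaml
template_labels = {"name": "nginx"}   # spec.template.metadata.labels in nginx-rc.yaml
unrelated_labels = {"name": "redis"}  # hypothetical pod, not part of this setup

print(selector_matches(rc_selector, template_labels))   # True
print(selector_matches(rc_selector, unrelated_labels))  # False
```

The Services defined later use the same name: nginx selector, which is how they end up routing traffic to these pods.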

Run the following command to create the nginx replication controller:

    [root@master test]# kubectl create -f nginx-rc.yaml
    replicationcontrollers/nginx-controller

Remember to pull the required gcr.io image beforehand and retag it; otherwise Pod creation fails. The nginx image also has to be downloaded, so the Pods need some time before they reach the Running state.

    [root@master test]# kubectl get pods
    NAME                     READY     STATUS    RESTARTS   AGE
    nginx                    1/1       Running   0          1d
    nginx-controller-dkl3v   1/1       Running   0          14s
    nginx-controller-hxcq8   1/1       Running   0          14s

We can use the describe command to view details about a pod:

    [root@master test]# kubectl describe pod nginx-controller-dkl3v
    Name:                       nginx-controller-dkl3v
    Namespace:                  default
    Image(s):                   nginx
    Node:                       192.168.32.17/192.168.32.17
    Labels:                     name=nginx
    Status:                     Running
    Reason:
    Message:
    IP:                         172.17.67.2
    Replication Controllers:    nginx-controller (2/2 replicas created)
    Containers:
      nginx:
        Image:          nginx
        State:          Running
          Started:      Wed, 30 Dec 2015 02:03:19 -0500
        Ready:          True
        Restart Count:  0
    Conditions:
      Type      Status
      Ready     True
    Events:
      FirstSeen  LastSeen  Count  From  SubobjectPath  Reason  Message
      Wed, 30 Dec 2015 02:03:14 -0500  Wed, 30 Dec 2015 02:03:14 -0500  1  {scheduler }  scheduled  Successfully assigned nginx-controller-dkl3v to 192.168.32.17
      Wed, 30 Dec 2015 02:03:15 -0500  Wed, 30 Dec 2015 02:03:15 -0500  1  {kubelet 192.168.32.17}  implicitly required container POD  pulled  Pod container image "kubernetes/pause" already present on machine
      Wed, 30 Dec 2015 02:03:16 -0500  Wed, 30 Dec 2015 02:03:16 -0500  1  {kubelet 192.168.32.17}  implicitly required container POD  created  Created with docker id e88dffe46a28
      Wed, 30 Dec 2015 02:03:17 -0500  Wed, 30 Dec 2015 02:03:17 -0500  1  {kubelet 192.168.32.17}  implicitly required container POD  started  Started with docker id e88dffe46a28
      Wed, 30 Dec 2015 02:03:18 -0500  Wed, 30 Dec 2015 02:03:18 -0500  1  {kubelet 192.168.32.17}  spec.containers{nginx}  created  Created with docker id 25fcb6b4ce09
      Wed, 30 Dec 2015 02:03:19 -0500  Wed, 30 Dec 2015 02:03:19 -0500  1  {kubelet 192.168.32.17}  spec.containers{nginx}  started  Started with docker id 25fcb6b4ce09

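2. Deploy an nginx service reachable from inside the cluster

The Service types used here are ClusterIP and NodePort. The default is ClusterIP, and a Service of this type can only be reached from inside the cluster. The configuration file is as follows:

    # cat nginx-service-clusterip.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-service-clusterip
    spec:
      ports:
        - port: 8001
          targetPort: 80
          protocol: TCP
      selector:
        name: nginx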

Run the following command to create the service:

    [root@master test]# kubectl create -f ./nginx-service-clusterip.yaml
    services/nginx-service-clusterip

List the services that now exist:

    [root@master test]# kubectl get service
    NAME                      LABELS                                    SELECTOR     IP(S)            PORT(S)
    kubernetes                component=apiserver,provider=kubernetes   <none>       10.254.0.1       443/TCP
    nginx-service-clusterip   <none>                                    name=nginx   10.254.234.255   8001/TCP

The output shows that the Cluster IP of this Service is 10.254.234.255 and its port is 8001. Next we verify that this PortalNet IP works:
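The Cluster IPs are taken from the range passed to the API server with --portal_net (10.254.0.0/16 in the master configuration above). As a quick sanity check, the sketch below confirms that the addresses from the listing fall inside that range while a pod address does not; in_portal_net is a helper name invented for this example.

```python
import ipaddress

portal_net = ipaddress.ip_network("10.254.0.0/16")  # --portal_net on the API server

# Cluster IPs copied from the kubectl get service output.
cluster_ips = {
    "kubernetes": "10.254.0.1",
    "nginx-service-clusterip": "10.254.234.255",
}


def in_portal_net(ip):
    """Return True when ip lies inside the service address range."""
    return ipaddress.ip_address(ip) in portal_net


for name, ip in cluster_ips.items():
    print(name, ip, in_portal_net(ip))

# A pod IP from the flannel range is not a service address.
print("pod", "172.17.67.2", in_portal_net("172.17.67.2"))
```

Keeping the service range (10.254.0.0/16) and the flannel pod range (172.17.0.0/16) disjoint, as this setup does, avoids ambiguity about which kind of address a packet is destined for.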

Run the following command on 192.168.32.16:

    [root@minion1 ~]# curl -s 10.254.234.255:8001
    <!DOCTYPE html>
    <html>
    <head>
    <title>Welcome to nginx!</title>
    <style>
        body {
            width: 35em;
            margin: 0 auto;
            font-family: Tahoma,Arial,sans-serif;
        }
    </style>
    </head>
    <body>
    <h1>Welcome to nginx!</h1>
    <p>If you see this page, the nginx web server is successfully installed and
    working. Further configuration is required.</p>

    <p>For online documentation and support please refer to
    <a href="http://nginx.org/">nginx.org</a>.<br/>
    Commercial support is available at
    <a href="http://nginx.com/">nginx.com</a>.</p>

    <p><em>Thank you for using nginx.</em></p>
    </body>
    </html>

From the replication controller section above we know that the nginx Pod runs on node 17 (192.168.32.17). We deliberately accessed the service from node 16 (192.168.32.16) to show that the Service Cluster IP is reachable from every node in the cluster.

3. Deploy an externally reachable nginx service

Next we create a Service of type NodePort, which can be reached from outside the cluster. The configuration file is as follows:

    # cat nginx-service-nodeport.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-service-nodeport
    spec:
      ports:
        - port: 8000
          targetPort: 80
          protocol: TCP
      type: NodePort
      selector:
        name: nginx

Run the following commands to create the service and list it:

    [root@master test]# kubectl create -f ./nginx-service-nodeport.yaml
    You have exposed your service on an external port on all nodes in your
    cluster. If you want to expose this service to the external internet, you may
    need to set up firewall rules for the service port(s) (tcp:31000) to serve traffic.

    See http://releases.k8s.io/HEAD/docs/user-guide/services-firewalls.md for more details.
    services/nginx-service-nodeport
    [root@master test]# kubectl get service
    NAME                      LABELS                                    SELECTOR     IP(S)            PORT(S)
    kubernetes                component=apiserver,provider=kubernetes   <none>       10.254.0.1       443/TCP
    nginx-service-clusterip   <none>                                    name=nginx   10.254.234.255   8001/TCP
    nginx-service-nodeport    <none>                                    name=nginx   10.254.210.68    8000/TCP

The hint about firewall rules that is printed when the service is created can be ignored; it does not affect the following steps.

Inspect the created service:

    [root@master test]# kubectl describe service nginx-service-nodeport
    Name:                   nginx-service-nodeport
    Namespace:              default
    Labels:                 <none>
    Selector:               name=nginx
    Type:                   NodePort
    IP:                     10.254.210.68
    Port:                   <unnamed>       8000/TCP
    NodePort:               <unnamed>       31000/TCP
    Endpoints:              172.17.67.2:80,172.17.67.3:80
    Session Affinity:       None
    No events.

The node-level port of this Service is 31000. Next we verify that the Service works:
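A NodePort is opened on every node, so the same port number works with any node address. The sketch below builds the URLs to try and rejects ports outside 30000-32767, which is the Kubernetes default NodePort range; it assumes the API server's range has not been changed, and node_port_urls is a helper name made up for this illustration.

```python
DEFAULT_NODE_PORT_RANGE = range(30000, 32768)  # Kubernetes default: 30000-32767


def node_port_urls(node_ips, node_port):
    """Return one URL per node for a NodePort service."""
    if node_port not in DEFAULT_NODE_PORT_RANGE:
        raise ValueError(f"{node_port} is outside the default NodePort range")
    return [f"http://{ip}:{node_port}" for ip in node_ips]


# Node addresses and port taken from the output in this article.
for url in node_port_urls(["192.168.32.16", "192.168.32.17"], 31000):
    print(url)
```

Note that 8000, the Service port inside the cluster, is rejected by this check: from outside the cluster only the NodePort (31000) is used.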

    [root@master test]# curl -s 192.168.32.16:31000
    <!DOCTYPE html>
    <html>
    <head>
    <title>Welcome to nginx!</title>
    <style>
        body {
            width: 35em;
            margin: 0 auto;
            font-family: Tahoma,Arial,sans-serif;
        }
    </style>
    </head>
    <body>
    <h1>Welcome to nginx!</h1>
    <p>If you see this page, the nginx web server is successfully installed and
    working. Further configuration is required.</p>

    <p>For online documentation and support please refer to
    <a href="http://nginx.org/">nginx.org</a>.<br/>
    Commercial support is available at
    <a href="http://nginx.com/">nginx.com</a>.</p>

    <p><em>Thank you for using nginx.</em></p>
    </body>
    </html>

    [root@master test]# curl -s 192.168.32.17:31000
    <!DOCTYPE html>
    <html>
    <head>
    <title>Welcome to nginx!</title>
    <style>
        body {
            width: 35em;
            margin: 0 auto;
            font-family: Tahoma,Arial,sans-serif;
        }
    </style>
    </head>
    <body>
    <h1>Welcome to nginx!</h1>
    <p>If you see this page, the nginx web server is successfully installed and
    working. Further configuration is required.</p>

    <p>For online documentation and support please refer to
    <a href="http://nginx.org/">nginx.org</a>.<br/>
    Commercial support is available at
    <a href="http://nginx.com/">nginx.com</a>.</p>

    <p><em>Thank you for using nginx.</em></p>
    </body>
    </html>

Whether we go through node 16 or node 17, the service is reachable.

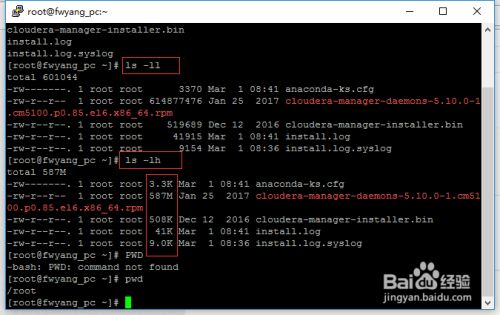
